Note: This user manual may not always reflect the current state of the software. After software updates, parts of this description may no longer be accurate. In this case, please contact us directly or use the current version of the user manual, which you can find on our website www.a-eberle.de.

Publisher:

A. Eberle GmbH & Co. KG

Frankenstraße 160

D-90461 Nuremberg

Phone: +49 911 62 81 08 0

Fax: +49 911 62 81 08 99

Email: info@a-eberle.de

Internet: www.a-eberle.de

The company A. Eberle GmbH & Co. KG assumes no liability for damages or losses of any kind resulting from printing errors or changes in this user manual.

Likewise, the company A. Eberle GmbH & Co. KG assumes no liability for damages and losses of any kind resulting from the use of the software or from devices modified by the user through the software.

Copyright 2025 A. Eberle GmbH & Co. KG. All rights reserved.

The user manual summarizes all important information for installation and operation. Read the user manual completely and only use the product once you have understood the user manual.

This user manual is intended for trained professionals as well as trained and certified operating personnel. The content of this user manual must be made accessible to the persons responsible for the installation and operation of the system.

Structure of the warnings

Warnings are structured as follows:

| Type and source of danger! | |

| Consequences of non-compliance. |

| Signal word | Measure to avoid the danger. |

Grading of the warnings

Warnings differ according to the type of danger as follows:

| Signal word | Meaning |

|---|---|

| Danger | Warns of an imminent danger that will result in death or serious injury if not avoided. |

| Warning | Warns of a potentially dangerous situation that may result in death or serious injury if not avoided. |

| Caution | Warns of a potentially dangerous situation that may result in moderate or minor injury if not avoided. |

| Note | Warns of a potentially dangerous situation that may result in property or environmental damage if not avoided. |

| Note | Tips for proper handling of the software and recommendations. |

Instructions

Structure of the instructions:

Instruction for an action.

Instruction for an action.

Result indication if necessary.

Result indication if necessary.

Lists

Structure of bulleted lists:

Level 1

Level 2

Structure of numbered lists:

Level 1

Level 1

Level 2

Level 2

For the safe and correct use of the software, also observe the additionally supplied documents as well as relevant standards and laws.

Keep the user manual, including the applicable documents, readily available near the system.

Installation file

License agreements

License file (JSON file)

WebPQ® database software including PostgreSQL database for fully automated processing and evaluation of power quality data in your IT environment! Basic versions always include 3 units – unlimited expansion possible via add-ons.

Automated reading of measurement data from PQI-LV / PQI-DA smart and PQI-DE devices via SSH and CCCI interface

Web server for visualizing measurement data and disturbance records in level-time diagrams / histograms / bar charts / vector diagrams / ITIC / and more

Responsive live data display from one or more devices simultaneously

Automated alarm management in case of disturbances

Automated reports according to EN50160 and IEC61000-2-2 / IEC61000-2-4 / IEC61000-2-12, switchable to up to 65 standard templates

Parameterization and management of measuring devices via the web server

IT security through a comprehensive user rights management system with audit logging, password policies, and more

Visualization of measurement data from PQI-D and PQI-DA devices

Integration of WinPQ into WebPQ® to support PQI-Ds

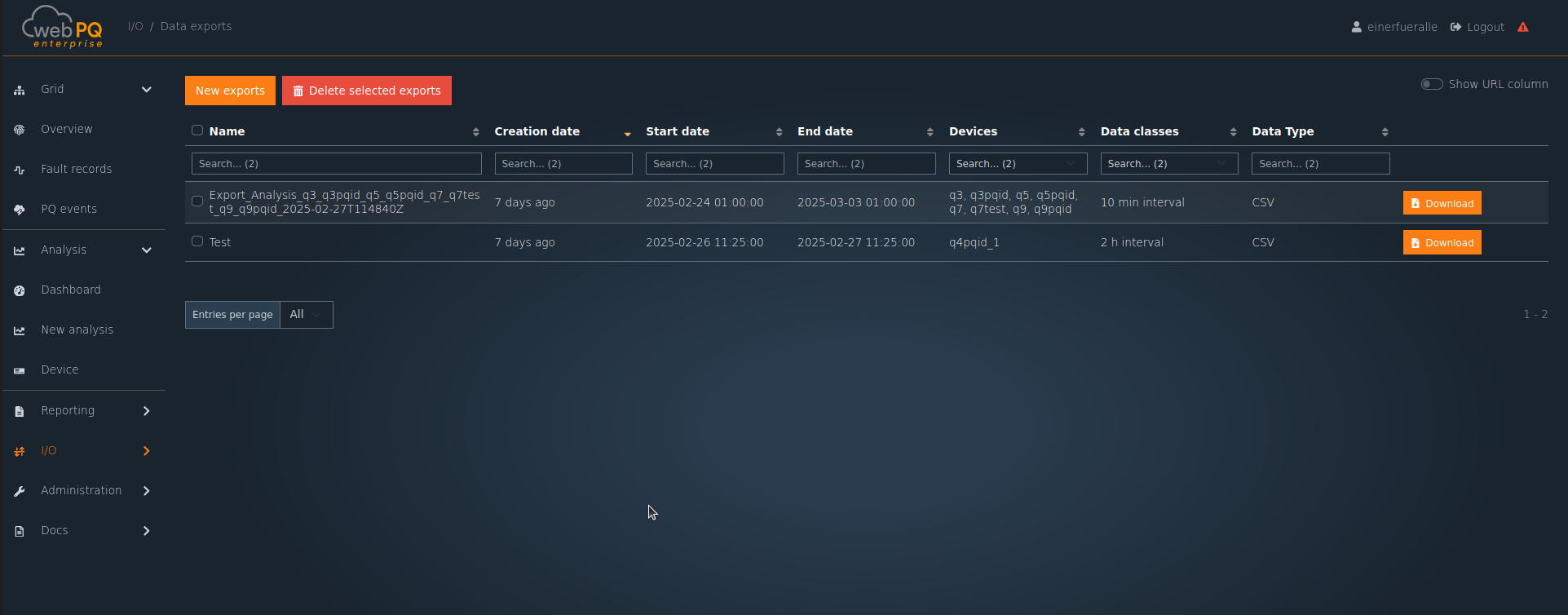

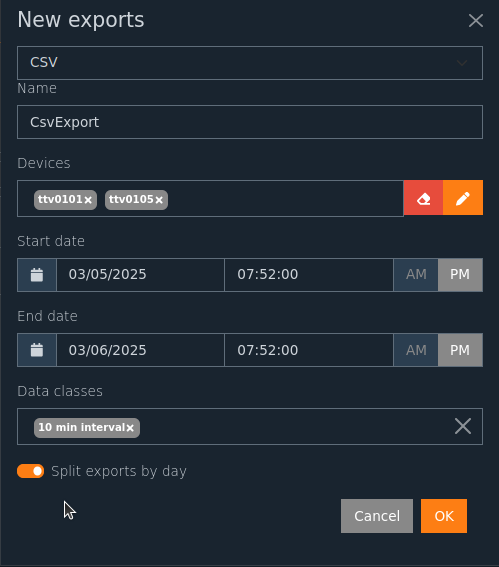

CSV and Comtrade export functionality

| License Type | Description | Article Number |

|---|---|---|

| WebPQ Basic | incl. 3 units for up to 30 units with basic functionality | L.900.9266.10 |

| WebPQ Professional | incl. 3 units for up to 100 units with professional functionality | L.900.9266.20 |

| WebPQ Enterprise | incl. 3 units for systems > 100 units with enterprise functionality | L.900.9266.30 |

WebPQ Initial Order - Number of Units

| Number of Units | Article Number |

|---|---|

| 10 Units | L.900.9266.1010 |

| 30 Units | L.900.9266.1025 |

| 50 Units | L.900.9266.2050 |

| 100 Units | L.900.9266.2100 |

| 500 Units | L.900.9266.3500 |

| 1000 Units | L.900.9266.3599 |

Available WebPQ Add-ons

| Add-on Name | Article Number |

|---|---|

| WebPQ Add-on "Fleet Management" | L.900.9265.32 |

| WebPQ Add-on "Nequal Export" | L.900.9265.34 |

Additional Units

| Number of Units | Article Number |

|---|---|

| WebPQ Add-on 10 Units | L.900.9266.60 |

| WebPQ Add-on 50 Units | L.900.9266.61 |

| WebPQ Add-on 100 Units | L.900.9266.62 |

Maintenance Agreements

| Maintenance Agreement | Article Number |

|---|---|

| WebPQ Maintenance Agreement Annual | L.900.9066.10.01 |

| WebPQ Maintenance Agreement One-time | L.900.9066.10.02 |

Windows 10 64-bit

Windows 11

Windows Server 2016

Windows Server 2019

Windows Server 2022

Windows Server 2025

WebPQ: Chrome, Firefox, Microsoft Edge, Safari

PostgreSQL 14.*

MS-SQL (from version 2022)**

*PostgreSQL is supported from WinPQ > 6.2. **Individual order process and license key are necessary! Consultation required.

If WebPQ is to be operated with MS-SQL, the MS-SQL server must be configured to support Mixed-Mode Authentication. This is necessary so that WebPQ can access the database. Alternatively, a Windows user with appropriate permissions can be used for database access. This user must then be stored as a user in the WebPQ service.

CPU: 4 cores

RAM: 8 GB memory

Storage: two partitions - 20 GB for WebPQ installation and an additional 1 GB per year per connected measuring device (using standard profiles)

Network: Ethernet adapter for communication with TCP/IP PQ devices

Display: Remote desktop connection or monitor with at least 1280 x 1024 pixel resolution

Browser: Chrome, Firefox, Microsoft Edge, Safari

SMTP Mail Server: access to a mail server from the application for alerting, notifications, and user management

Hardware: Server designed for 24/7 operation / Virtual server system (more cost-effective!)

CPU: CPU with 6 or more cores

RAM: 8 GB or more memory

Storage: two partitions - 20 GB for WebPQ installation and an additional 1 GB per year per connected measuring device (using standard profiles) with redundancy and automatic backup

Disk: Solid-state disk for the database on a second partition

Network: Ethernet adapter with high data throughput for communication with TCP/IP devices

Display: Remote desktop connection or monitor with high resolution (e.g., 1920 x 1200 pixels)

Browser: Chrome, Firefox, Microsoft Edge, Safari

SMTP Mail Server: access to a mail server from the application for alerting, notifications, and user management

Running WebPQ in a terminal server environment is technically possible, but generally not required, as WebPQ is provided as a web application via an integrated web server. Installation is performed directly on the terminal server; the configuration directory is stored by default in the system folder %programdata%.

Administration Notes:

Initial configuration and administrative adjustments of the WebPQ backend application should only be performed by a user with local administrator rights via remote desktop connection. This ensures that configuration changes are made consistently and securely.

Access rights to the configuration directory should be assigned restrictively to prevent unauthorized changes by other users.

User Access Model:

Use of WebPQ (user management, device management, data analysis, and other functions) is exclusively via a web browser. Users do not require direct access to the terminal server or backend application, but connect to the WebPQ web interface over the network.

The terminal server environment does not affect operation of the web interface. Users can access WebPQ from any supported device (PCs, notebooks, thin clients) with a compatible browser.

Simultaneous access by multiple users to the web interface is possible, as WebPQ is designed for multi-user operation.

Access to the web interface is via the web server URL, which is provided to users—this can, if necessary, also be local to the terminal server (e.g., https://localhost:8443).

Technical Recommendations:

The terminal server must have sufficient resources (CPU, RAM, network bandwidth) for running WebPQ and any additional applications.

Permissions and security settings of the %programdata% directory should be reviewed.

Network configuration and firewall rules must allow access to the WebPQ web server; the path to the web interface should be communicated to users.

For optimal performance, use the latest browser versions on client devices.

PDF reader

Installed browser: Chrome, Firefox, Microsoft Edge, Safari

Database tools such as DBeaver (universal for all supported databases) or PG Admin for PostgreSQL

For the WebPQ web server: SSL certificate in PEM format for secure communication (see Information about SSL Certificates)

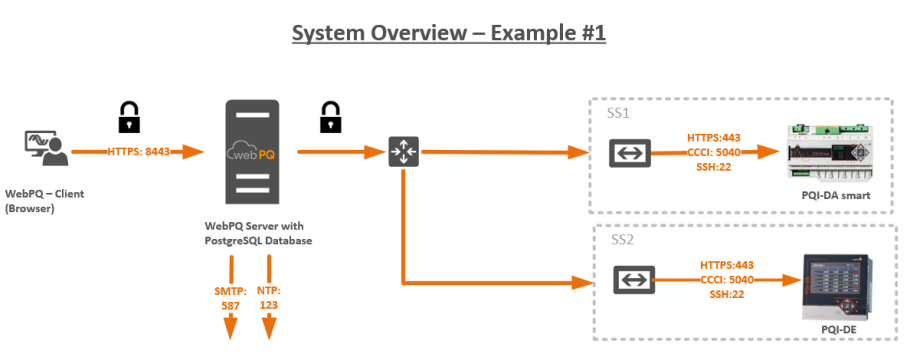

WebPQ Server

Virtual machine or PC where WebPQ runs as a service along with the database (PostgreSQL).

WebPQ Client

Host where the actual analysis of measurement data and management of measuring devices takes place via a browser. This host is often found in the office network.

SMTP Server

Mail server necessary for alerting and functions such as password reset, system messages, and automated alerting.

NTP Server

NTP server for synchronizing devices and the WebPQ server.

| It is also possible to install the database on another server! |

| Note |

Required Port Numbers for Communication:

Rules in gateways and firewalls must be opened from row (source) to column (destination). For example, port 8443 (HTTPS) must be open from the WebPQ Client to the WebPQ Server so that the client can use the web interface.

| From (row) / To (column) | WebPQ Server | WebPQ Client | Database Server | PQI-D (REG-COM) | PQI-DA smart / PQI-DE / PQI-LV | NTP Server | SMTP Server | Webserver of Device PQI-DA smart / PQI-DE / PQI-LV |

|---|---|---|---|---|---|---|---|---|

| WebPQ Server | x | x | 5432 PostgreSQL, 3306 MySQL | 8000 TCP, 1111 TCP | 5040 TCP-CCCI, 22 SSH | 123 NTP | 587 STARTTLS, 465 SSL/TLS | 8443 HTTPS |

| WebPQ Client | 8443 HTTPS, 1701 … 170X ParaPQID | x | x | x | x | x | x | x |

| Database Server | x | x | x | x | x | x | x | x |

| PQI-D (REG-COM) | x | x | x | x | x | x | x | x |

| PQI-DA smart / PQI-DE / PQI-LV | x | x | x | x | x | 123 NTP | x | x |

| NTP Server | x | x | x | x | x | x | x | x |

| SMTP Server | x | x | x | x | x | x | x | x |

| Webserver of Device PQI-DA smart / PQI-DE / PQI-LV | x | x | x | x | x | x | x | x |

* 1701...170X: Range for specific ports for PQI-Ds, depending on configuration.
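Before commissioning, it can be useful to verify from the WebPQ Server that the required TCP ports are reachable. The following is a minimal sketch using only the Python standard library. The host names in the list are placeholders (assumptions, not part of the product) and must be replaced with your own addresses. NTP on port 123 uses UDP and cannot be checked this way.

```python
import socket


def tcp_port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be established."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name resolution errors.
        return False


# TCP ports taken from the row "WebPQ Server" of the table above.
# The host names are hypothetical placeholders.
CHECKS_FROM_WEBPQ_SERVER = [
    ("database.example.local", 5432),  # PostgreSQL
    ("pqi-device.example.local", 5040),  # CCCI
    ("pqi-device.example.local", 22),  # SSH
    ("pqi-device.example.local", 8443),  # device web server (HTTPS)
    ("smtp.example.local", 587),  # SMTP with STARTTLS
]


def check_all(checks):
    """Return a list of (host, port, reachable) tuples for the given checks."""
    return [(host, port, tcp_port_open(host, port)) for host, port in checks]
```

Calling `check_all(CHECKS_FROM_WEBPQ_SERVER)` on the server returns one tuple per entry. A value of `False` usually points to a missing firewall rule, a wrong address, or a service that is not running.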

Typical Data Volumes in Communication

Connecting a measuring device to the WebPQ database generates approximately 20 MB per week in the standard configuration. Since the readout process is continuous, the data transfer requires a minimum speed of only 200 kbit/s.
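The figures above are consistent with the storage rule in the system requirements: 20 MB per week adds up to roughly 1 GB per device per year. As a rough planning aid, the arithmetic can be sketched as follows. The function names are illustrative, and the constants only restate the values given in this manual for standard profiles.

```python
INSTALL_GB = 20          # partition for the WebPQ installation
GB_PER_DEVICE_YEAR = 1   # rule of thumb for standard profiles
MB_PER_DEVICE_WEEK = 20  # typical transfer volume in the standard configuration


def database_storage_gb(devices: int, years: float) -> float:
    """Estimated database size for the given fleet and retention period."""
    return devices * years * GB_PER_DEVICE_YEAR


def yearly_transfer_gb(devices: int) -> float:
    """Approximate yearly transfer volume (52 weeks, 1 GB = 1024 MB)."""
    return devices * MB_PER_DEVICE_WEEK * 52 / 1024
```

For example, `database_storage_gb(50, 5)` gives 250 GB for 50 devices over five years, in addition to the 20 GB installation partition. Custom recording profiles can change these values considerably.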

The following checklist is used to collect system information and requirements for the WebPQ installation to ensure smooth and fast service. Please fill in the relevant fields and send the completed checklist to your contact at A. Eberle.

Are you already using WinPQ?

☐ Yes ☐ No

Which WinPQ version are you currently using? ______________________

Please provide your WinPQ license number or license details: ______________________

Which database type are you currently using?

☐ MSSQL ☐ MySQL ☐ PostgreSQL ☐ Other: _____________

Which version of the database is in use? ______________________

What is the size of your database (in GB)? ______________________

Where is your database located?

☐ Local ☐ Server ☐ Other: _____________

Do you have a backup routine in place?

☐ Yes ☐ No

If yes, please briefly describe where the backups are stored: ______________________

For a smooth process, please create a complete system backup (snapshot/backup) before installation. Please confirm: ☐ Yes ☐ No

How many devices are integrated into the system? ______________________

Which device types do you use?

☐ PQI-DA smart

☐ PQI-DE

☐ PQI-LV

☐ PQI-D

☐ PQI-DA

How many users will work with the system? ______________________

Note: The sum of devices and users determines the required number of units for WebPQ. If you have any questions, please contact your A. Eberle representative.
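The unit calculation can be sketched as follows. This is only an illustration: the thresholds are read from the license table in this manual (Basic up to 30 units, Professional up to 100 units, Enterprise above 100 units), the function names are hypothetical, and the binding license sizing must be confirmed with A. Eberle.

```python
def required_units(devices: int, users: int) -> int:
    """Units needed according to the rule 'devices plus users'."""
    return devices + users


def suggest_license(units: int) -> str:
    """Map a unit count to the license type from the license table."""
    if units <= 30:
        return "WebPQ Basic"
    if units <= 100:
        return "WebPQ Professional"
    return "WebPQ Enterprise"
```

A system with 25 devices and 4 users needs 29 units and would fit into WebPQ Basic under these assumptions.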

Is an NTP server available? ☐ Yes ☐ No - IP address: ___________

Does your server meet the current system requirements for WebPQ? See System Requirements ☐ Yes ☐ No

Which operating system is installed on the server? ______________________

Have the required ports for communication with the WebPQ database and devices been enabled?

☐ Yes ☐ No

If yes, please specify which ports have been enabled: ______________________

Is it a physical or virtual server?

☐ Physical ☐ Virtual

What type of hard drive is installed in the server?

☐ SATA ☐ SSD

Is the server joined to a domain? ☐ Yes ☐ No

Is the server designed for 24/7 operation? ☐ Yes ☐ No

Is an SMTP server available for alerts and user management? Recommended function

☐ Yes ☐ No

Are the access credentials available? ☐ Yes ☐ No

Server address: ______________________

Port: ______________________

Username: ______________________ (Required for commissioning – please do not provide here!)

Password: ______________________ (Required for commissioning – please do not provide here!)

Encryption: ☐ TLS ☐ SSL ☐ None

Which partitions are available on the server (e.g., C:, D:, etc.)? It is recommended to store the database and temporary files on separate partitions. C: Size: _______________ D: Size: _____________

Is a server migration planned or desired?

☐ Yes ☐ No

Do you want to integrate new devices into the system?

☐ Yes, number ______ ☐ No

Is remote access via TeamViewer or another tool possible?

☐ Yes ☐ No

Are the access credentials available? ☐ Yes ☐ No

Follow the operating manual.

Always keep the operating manual at hand during installation.

Ensure that only trained personnel operate the software.

Ensure that the software is operated only in its original state.

Ensure that the software runs in a secure system environment.

Ensure that the software is regularly backed up.

Ensure that the latest version of the software is always installed.

| For information on patch management, register on the homepage in the customer center or contact your sales partner! |

| Note |

WebPQ is intended exclusively for the evaluation of power quality measurement data and energy measurement data in the power grid at low, medium, and high voltage levels. If the software is used in a manner not specified by the manufacturer, it may cause damage!

The installation of WebPQ is carried out in several steps, including installation, administrative configuration, and initial setup. As of 2025, with WebPQ taking over many functions from WinPQ, various installation options and operating modes are available.

This option is suitable if the basic functions of WebPQ, which continue to evolve, are sufficient and there are no special requirements for export formats or integration of devices from the classic platform (PQI-D / PQI-DA).

Required if PQI-D or PQI-DA (see illustration in the software) needs to be integrated.

Required if special reports from WinPQ are needed.

Required if special exports, such as PQDIF, are necessary.

Required if PQ Box data needs to be imported into the database.

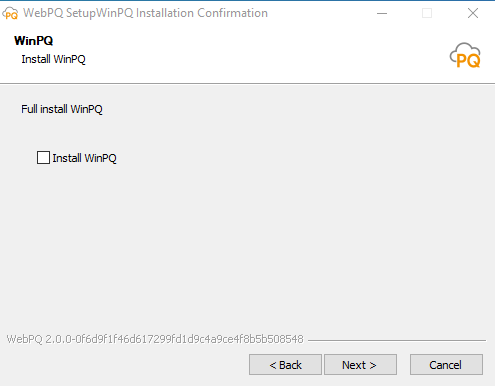

WinPQ software is always included in the license and delivery of WebPQ software and can be selected or deselected during installation.

A separate uninstallation assistant is available for uninstalling WinPQ software, which is described here.

| Please make sure to check the compatibility information and versions of the supported databases in the chapter System Requirements |

| Hint |

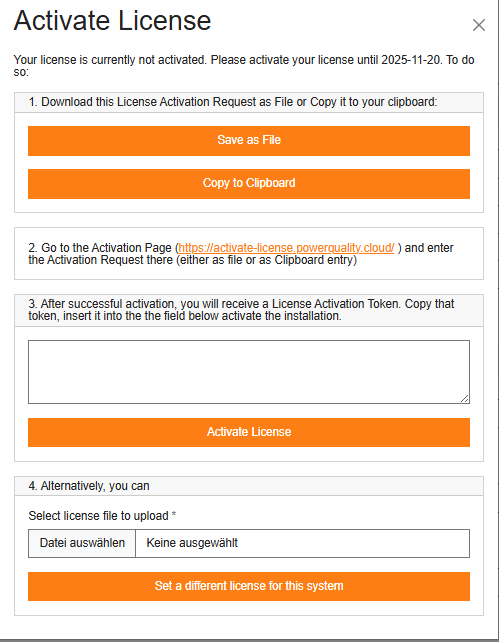

Starting with WebPQ version 2.1 the installation must be activated with an Activation Key. Until activation a notice dialog or the activation window is shown at the top. The software can be used for a maximum of 30 days without activation; within this period the Activation Key must be applied.

The activation file is generated using the hardware ID of the host and is only valid on this host. No direct internet connection is required for activation, but you must transfer a file from the host to another PC with internet access to activate the key at https://activate-license.powerquality.cloud/.

If the host hardware changes later, the stored activation is no longer valid and the activation process must be repeated. In that case, support may first need to reset the previous activation.

Proceed as follows:

Download the file LicenseActivationRequest.json via the button Save to file or copy its content via the button Copy to clipboard.

Open https://activate-license.powerquality.cloud/ and submit the activation request (either upload the file or paste the copied content).

After successful activation you receive an activation token for the license. Copy this token and paste it into the field to activate the installation, or alternatively use the file selection dialog to choose the activation file you downloaded from the activation page.

If you plan a system migration or the host hardware changes, a new activation is required. Repeat the process described above. For questions contact our support at pqsys-support@a-eberle.de.

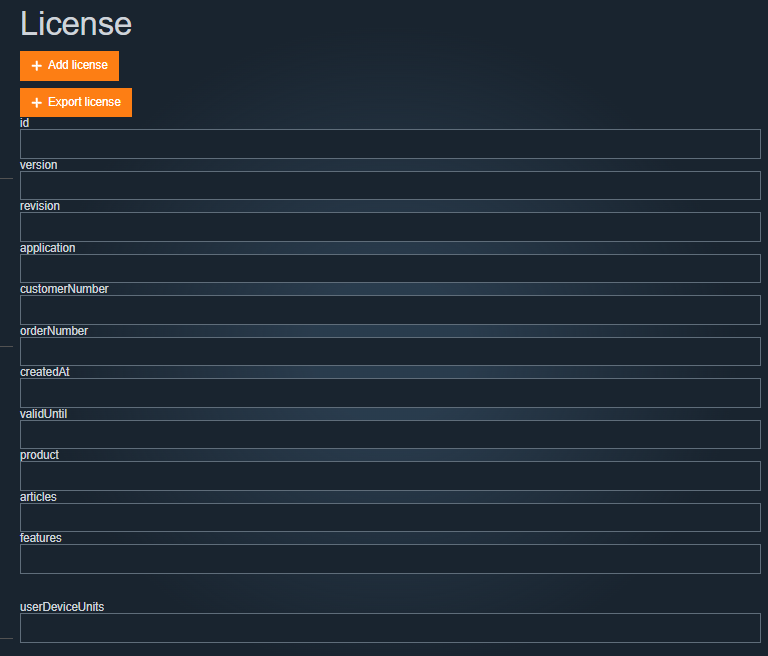

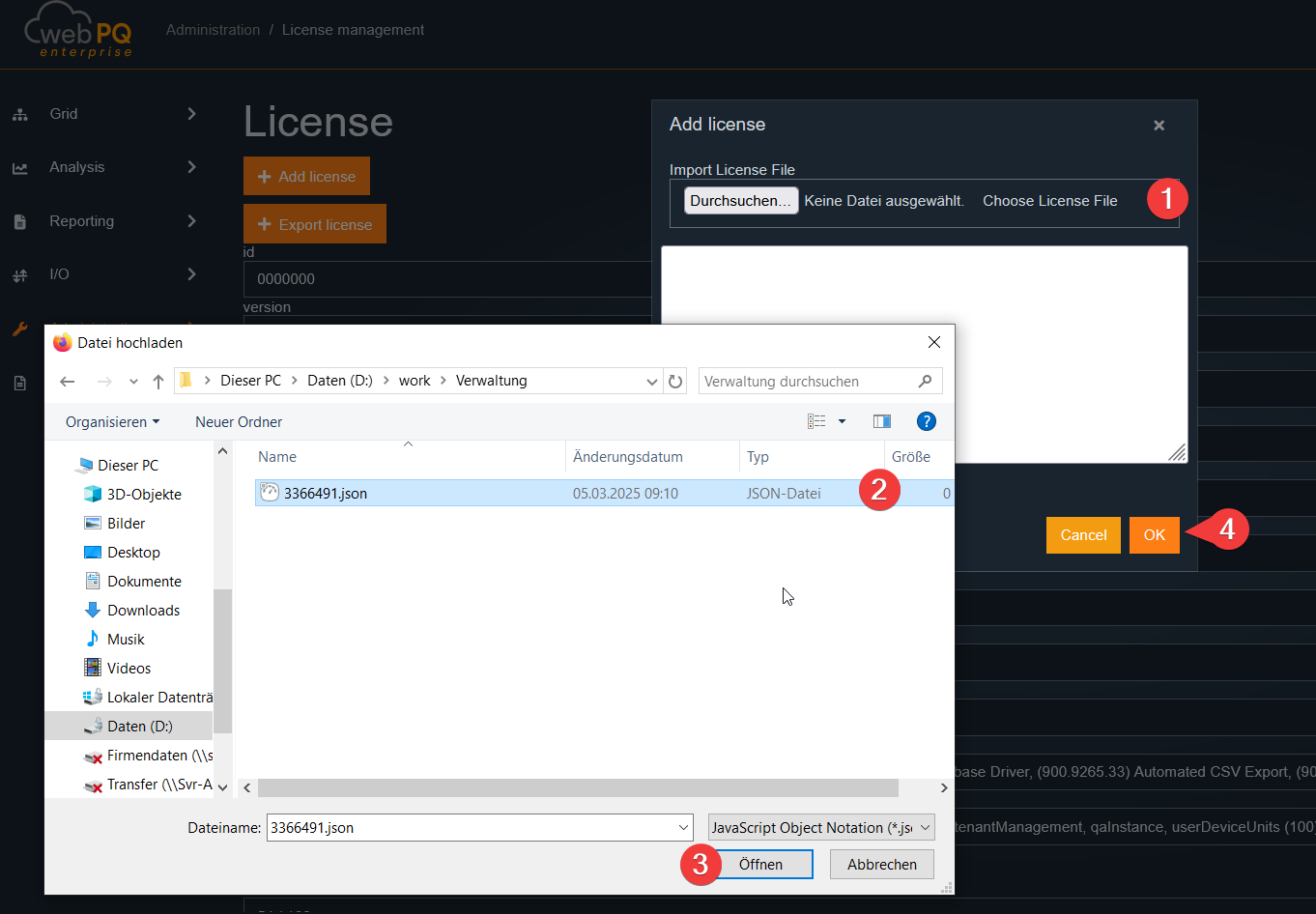

For later license replacement, renewal, or reactivation details inside the running application, see the License Management chapter.

WebPQ Installation with Database (PostgreSQL) - Standard Case

The PostgreSQL database is installed during setup directly on the host, along with the WebPQ application. This installation type is particularly recommended for small to medium-sized systems (up to 200 devices). Individual directory structures can be adjusted during installation.

WebPQ Installation Without Database and Connection to an Existing Database or Installation of a PostgreSQL Database on a Dedicated Server

This option is suitable if an existing database infrastructure is to be used or if the PostgreSQL database is installed on a separate server.

WebPQ Installation with Parallel WinPQ Installation with or Without a Database

This installation type should be selected if WinPQ software functions need to be retained. It must be decided which instance (WinPQ or WebPQ) will handle communication with the devices.

The WebPQ software is delivered securely via our portal https://software.a-eberle.de using a dedicated one-time access with SHA256 hash sum verification. Please verify the checksum for security purposes.
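The checksum can be verified with any SHA-256 tool. The following is a minimal sketch in Python, assuming that the expected hash was copied from the download portal as a hexadecimal string. The function names and the file name in the usage note are illustrative.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1024 * 1024) -> str:
    """Return the SHA-256 digest of a file as lowercase hex."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        # Read in chunks so that large installers do not have to fit in memory.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def checksum_matches(path: Path, expected_hex: str) -> bool:
    """Compare the file digest with the published value (case-insensitive)."""
    expected = expected_hex.strip().lower()
    return hmac.compare_digest(sha256_of_file(path), expected)
```

A call such as `checksum_matches(Path("WebPQ_Setup_x.y.z.exe"), "<hash from the portal>")` should return `True` before the installer is started. If it returns `False`, download the file again and do not run it.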

The installation process starts by double-clicking the "WebPQ_Setup_x.y.z.exe" file. The installation must be confirmed in the User Account Control with "YES." Next, a language selection for the assistant must be made.

Acceptance of the license terms is required. More details on the terms and maintenance contract can be found in the delivery documents.

A target directory for installation can be specified. All necessary operational data will be stored in this directory. User-specific data can be found in %programdata%/aeberle/webpq.

A license file is required for the software to operate. This JSON-format file is provided during initial delivery or with a maintenance contract. The JSON file can be selected via "Browse" and is validated by the assistant. If the file is lost, please contact A. Eberle with your order and customer number.

If the WinPQ software is to be installed, it must be selected here. Information on why this might be necessary can be found under Operating WinPQ Software Parallel to WebPQ.

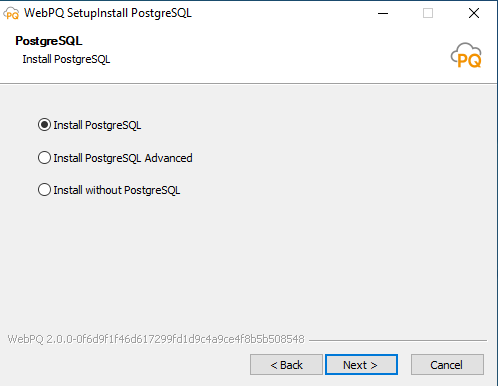

The appropriate mode must be selected based on the Installation Variants.

Install PostgreSQL

In this mode, the following steps are performed:

The PostgreSQL database is installed with the following default parameters:

| Parameter Name | Value |

|---|---|

| Directory | C:\Program Files\WebPQDatabase |

| Port | 5432 |

| Database Service Name | WebPQDatabase |

| WebPQ Server Service Name | WebPQServer |

| WebPQ Client Service Name | WebPQClient |

The database is installed with the following default passwords and users:

| Role | Username | Password |

|---|---|---|

| Administrator | PQDBA | admin |

| User | PQID | PQID |

| The default passwords should always be changed to avoid significant security risks. It is recommended to use the advanced installation to set custom passwords. |

| Note |

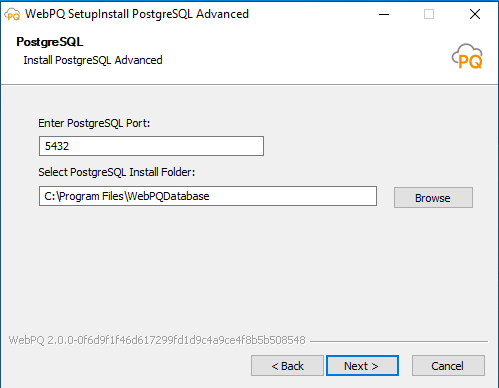

In this mode, PostgreSQL is installed with custom settings:

Port number for database access

Target directory for the database (e.g., on another drive like an SSD)

Custom usernames and passwords for "Database Administrator" and "Database User"

This mode is suitable if an existing database is used, either running on another server or already available on the host. After completing the assistant, the following connection details must be available. Pay attention to case sensitivity in parameter names!

Database name

Username with database access

Password for the database user

Database schema name

For installations with a dedicated PostgreSQL server, an additional continuously updated backup mirror can be set up. In this setup, a second PostgreSQL server runs as a replica of the production system.

A replication server increases resilience, but it does not replace a classic backup strategy.

The recommended setup is therefore a combination of:

one primary server for production,

one backup server as a continuously updated mirror,

and additional classic backups for retention, version history, and restoration of older data states.

Replication helps protect against server or hardware failure. Classic backups additionally protect against operator errors, logical data errors, and accidental deletion of data that would otherwise also be replicated.

You need:

one primary server with PostgreSQL and WebPQ,

a second backup server or virtual machine,

PostgreSQL on both systems,

a working network connection between the two servers,

and an administrator with PostgreSQL knowledge.

The backup server is a continuously updated copy of the primary server. Changes from the production system are transferred automatically. During normal operation, the backup server is read-only.

Server A = Primary server / production system

-> WebPQ application

-> PostgreSQL primary database

-> active write operations

Server B = Backup server / mirror

-> PostgreSQL replica

-> continuous transfer of changes from Server A

-> read-only operation during normal use

On the primary server, open the file postgresql.conf and set at least the following values:

wal_level = replica

max_wal_senders = 5

Then open the file pg_hba.conf and allow replication access for the backup server or the corresponding network. Example:

host replication replicator 192.168.1.0/24 md5

Then create a user for replication:

CREATE ROLE replicator WITH REPLICATION LOGIN PASSWORD 'password';

Restart PostgreSQL on the primary server afterward.

Stop PostgreSQL on the backup server.

Then clear the PostgreSQL data directory on the backup server. No old data residue should remain there.

After that, copy the database base state from the primary server using pg_basebackup:

pg_basebackup -h SERVER_A_IP -D /var/lib/postgresql/data -U replicator -P -R

Replace SERVER_A_IP with the address of the primary server and adjust the target directory to your PostgreSQL installation.

Start PostgreSQL on the backup server. Synchronization begins automatically after startup.

On the primary server, the replication status can be checked, for example, with:

SELECT * FROM pg_stat_replication;

If the backup server appears there as an active connection, replication is running.
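For monitoring scripts, the rows returned by the status query can be evaluated automatically. The sketch below assumes that the rows have already been fetched as dictionaries with a PostgreSQL client library of your choice (not shown here). It uses the columns client_addr, state, sent_lsn and replay_lsn of the pg_stat_replication view; the function names are illustrative.

```python
def lsn_to_int(lsn: str) -> int:
    """Convert a PostgreSQL LSN such as '0/3000060' into a byte position."""
    high, low = lsn.split("/")
    return (int(high, 16) << 32) + int(low, 16)


def summarize_replicas(rows):
    """Return one summary dictionary per replica connection."""
    summary = []
    for row in rows:
        sent, replayed = row.get("sent_lsn"), row.get("replay_lsn")
        # LSN columns can be NULL, for example while a replica is starting up.
        lag = None
        if sent is not None and replayed is not None:
            lag = lsn_to_int(sent) - lsn_to_int(replayed)
        summary.append(
            {
                "client_addr": row.get("client_addr"),
                "streaming": row.get("state") == "streaming",
                "replay_lag_bytes": lag,
            }
        )
    return summary
```

An empty result means that no replica is connected. A replica whose state is not "streaming", or whose replay lag keeps growing, should be checked by the database administrator.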

Changes on the primary server are transferred automatically to the backup server.

The backup server remains read-only during normal operation.

The backup server is intended as a failover reserve and not as an additional working server.

If the primary server fails, the backup server can be manually promoted to the new primary server:

pg_ctl promote

After that, the former backup server continues operating independently as the primary server.

There is no automatic failback to the original primary server. If the old primary server is to be used again later, it must be set up again as a replica or as a new primary system.

Streaming replication does not replace regular file-based backups or PostgreSQL backups.

Before updates and larger system changes, additional backups should still be created.

For sizing, architecture, or recovery strategy questions, coordinate the database configuration with your IT department or system integrator.

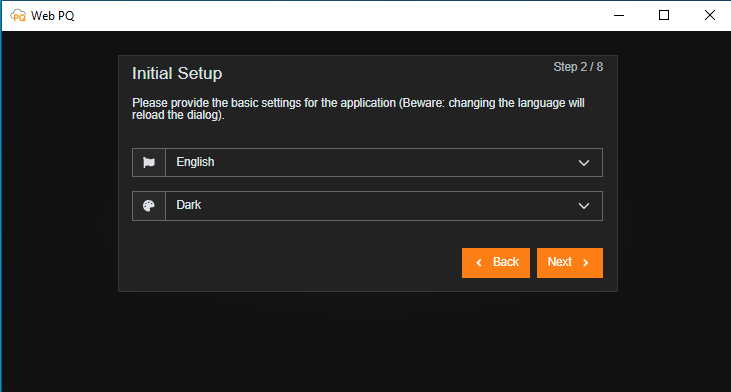

WebPQ must initially be set up with an administrator account in the application layer and with certain basic settings such as global password policies. The initial setup must always be performed when a new database connection to an existing WinPQ database is created in the administrative layer! Normally, the initial setup dialog appears automatically after the installation.

The basic settings are divided into nine areas:

Welcome page

Language and layout

Time zone setting

Password policy

User identification and data

Privacy policy

Backup of the encryption key

Summary

Data adoption

Explanations of the sub-areas:

The language and layout are stored in the user account but can be adjusted again after login.

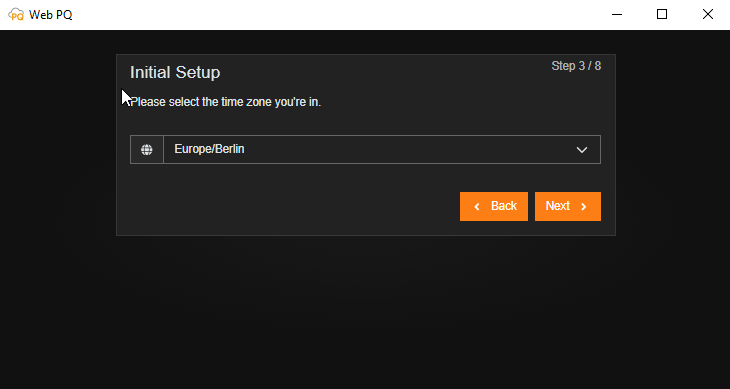

The user's time zone must be set to display times correctly in different time zones. Each measuring point also receives its own individual time zone. The user can choose between different time zone views.

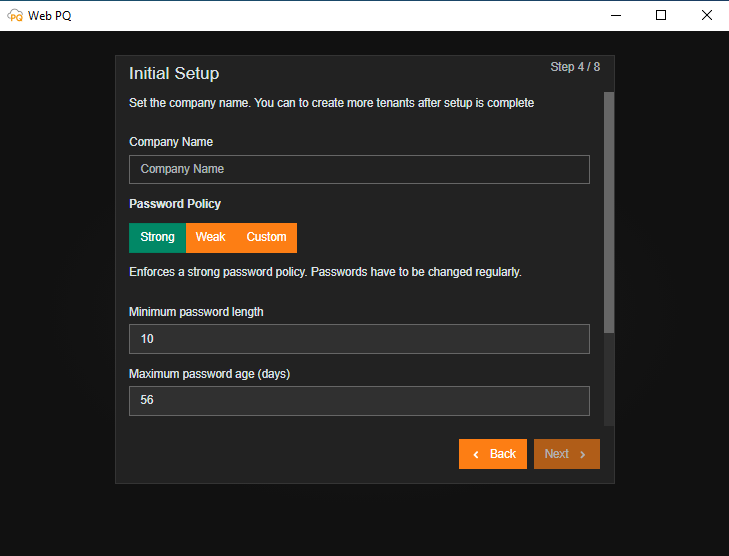

In critical infrastructure (KRITIS) environments, password policies are usually defined company-wide by central IT. The setting made here applies to all passwords created later in the administration. It can be changed individually afterward. See also: Tenant Settings

The following settings are possible:

Minimum password length

Password expiration time

Number of lowercase letters

Number of uppercase letters

Number of special characters

Number of digits
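To illustrate how such a policy works, the following sketch checks a password against the character rules listed above. It is not the WebPQ implementation. The class name, the function name, and all default values are hypothetical, and the password expiration time is not modeled.

```python
import string
from dataclasses import dataclass


@dataclass(frozen=True)
class PasswordPolicy:
    # All default values are hypothetical examples, not WebPQ defaults.
    min_length: int = 12
    min_lowercase: int = 1
    min_uppercase: int = 1
    min_special: int = 1
    min_digits: int = 1


def policy_violations(password: str, policy: PasswordPolicy) -> list[str]:
    """Return the names of all rules the password violates (empty if none)."""
    counts = {
        "min_length": len(password),
        "min_lowercase": sum(c.islower() for c in password),
        "min_uppercase": sum(c.isupper() for c in password),
        "min_special": sum(c in string.punctuation for c in password),
        "min_digits": sum(c.isdigit() for c in password),
    }
    return [rule for rule, count in counts.items() if count < getattr(policy, rule)]
```

With the example defaults, `policy_violations("short", PasswordPolicy())` reports the length, uppercase, special character, and digit rules, while a password that satisfies every rule yields an empty list.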

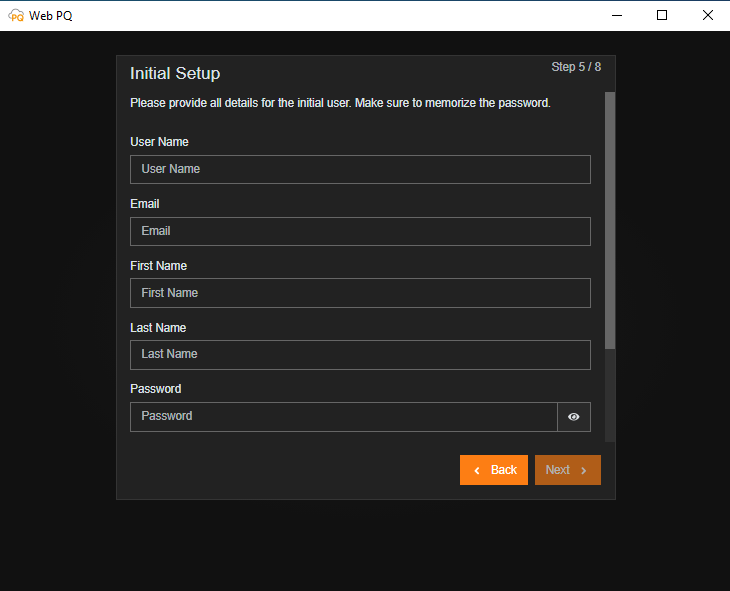

Here, the main administrator of the system is defined. The passwords used must be kept secure. Recovery of the root account is only possible with data loss.

| The entered administrative password must be kept secure and must not be lost! Recovery without data loss is not possible! |

| Note |

The static Swagger and OpenAPI assets of WebPQ are not public download URLs. The customer API description at /swagger.html, /swagger.json, and /integration-swagger.json requires an authenticated WebPQ session or a valid bearer token.

For administrators and developers this means:

open the developer documentation from inside the authenticated WebPQ application when you need the current API entry points,

use the shown Swagger UI or JSON description only after login or with an explicit API token,

optional public user documentation in a deployment does not make the Swagger or API endpoints public.
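As an illustration of token-based access, the following sketch requests the API description at /swagger.json with a bearer token, using only the Python standard library. It assumes that the token is sent in the standard HTTP header "Authorization: Bearer ..."; the base URL and the token value are placeholders. Servers with self-signed certificates additionally need a suitable SSL context, which is not shown here.

```python
import json
import urllib.request


def build_swagger_request(base_url: str, token: str) -> urllib.request.Request:
    """Prepare an authenticated request for the API description."""
    url = base_url.rstrip("/") + "/swagger.json"
    headers = {
        "Authorization": f"Bearer {token}",  # assumed standard bearer scheme
        "Accept": "application/json",
    }
    return urllib.request.Request(url, headers=headers)


def fetch_api_description(base_url: str, token: str, timeout: float = 10.0) -> dict:
    """Download and parse the API description; raises on HTTP or network errors."""
    request = build_swagger_request(base_url, token)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)
```

A call such as `fetch_api_description("https://localhost:8443", "<your API token>")` returns the parsed JSON description. Without a valid token, the server is expected to reject the request.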

If an SMTP server is configured in the advanced settings, the password can be recovered using the password reset function with the entered email address.

Information on password reset can be found under: Forgot Password

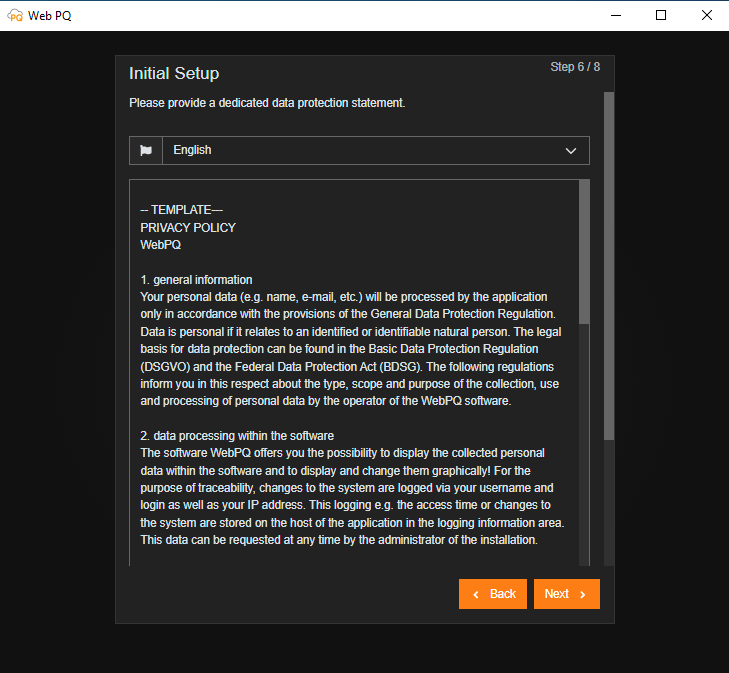

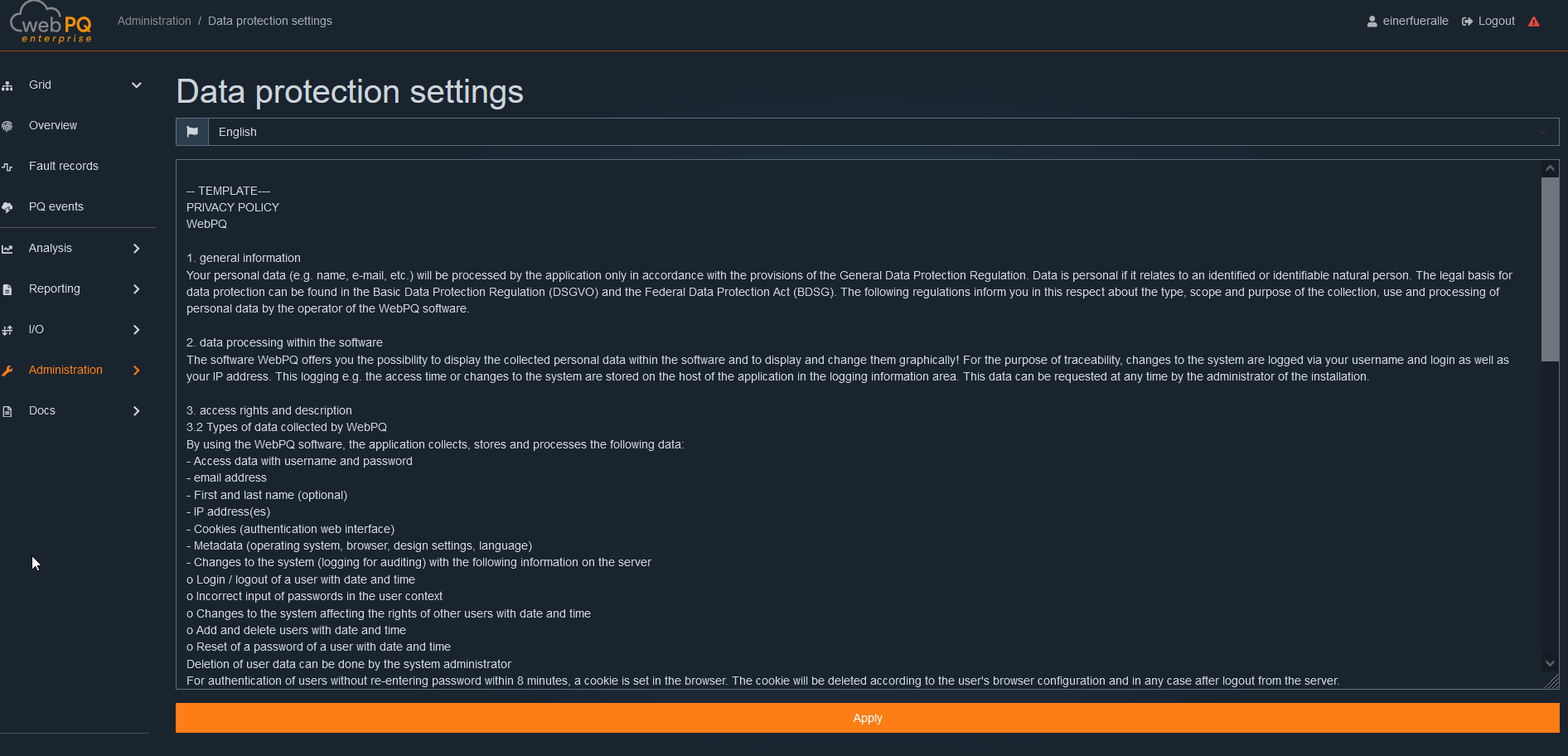

If you want to create an individual privacy agreement for your employees or customers, you can do so directly here. The software logs the required information automatically on the server as per the requirements, e.g., from the BDEW whitepaper.

Information on audit logging can be found under: Audit Logging

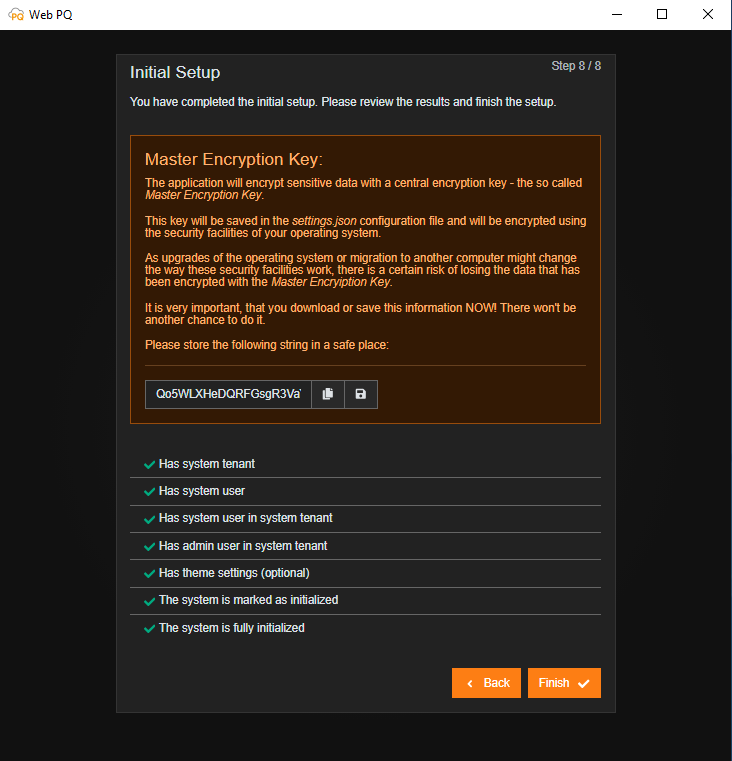

The application encrypts sensitive data using the AES-256-CBC method with a central encryption key, known as the Master Encryption Key. This key is stored in the configuration file settings.json and is encrypted with the security functions of your operating system. Since the behavior of these security functions may change after operating system upgrades or migration to another computer, there is a certain risk of losing data encrypted with the Master Encryption Key. It is very important that you download and securely store this key NOW! There will be no further opportunity to do so.

After all data has been entered, it is transferred to the database, and the application is restarted.

The WebPQ software is usually installed together with a locally running PostgreSQL database. Therefore, both the software and the database must be uninstalled in two separate steps if necessary.

The uninstallation of WebPQ software is done through the Windows built-in uninstallation assistant. This can be accessed either through the Control Panel or by searching in the Windows Start menu.

The database must be uninstalled through the Windows Services Management. The "WebPQDatabase" service must be stopped and removed. Then, the database files can be manually deleted.

Follow these steps:

Open the Windows Services Management.

Stop the "WebPQDatabase" service.

Uninstall the "WebPQDatabase" service.

Delete the database files in the database installation directory (default: C:\Program Files\WebPQDatabase).

Alternatively, the uninstallation can also be done via the command line. Open the command line with administrator rights and enter the following commands:

sc stop WebPQDatabase

sc delete WebPQDatabase

The WebPQ software stores temporary files and exported files in the directory %programdata%/aeberle/webpq. These files can be manually deleted after uninstalling the software. However, ensure that no important data is lost. Similarly, files of exports and reports may be stored in directories set within the application. These should also be deleted.

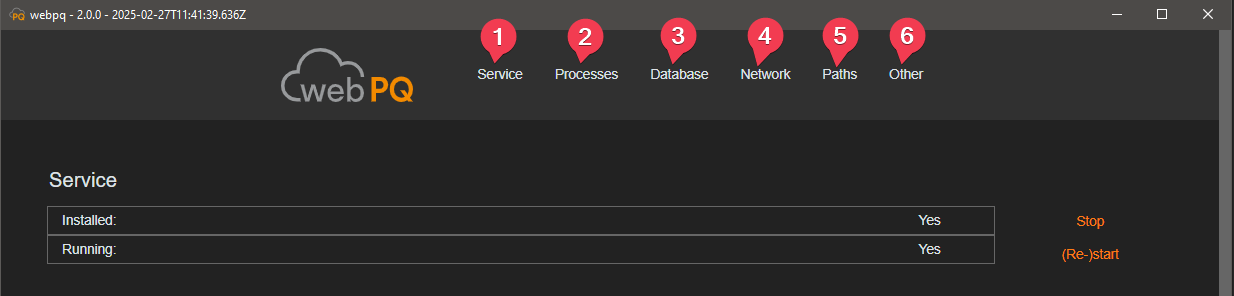

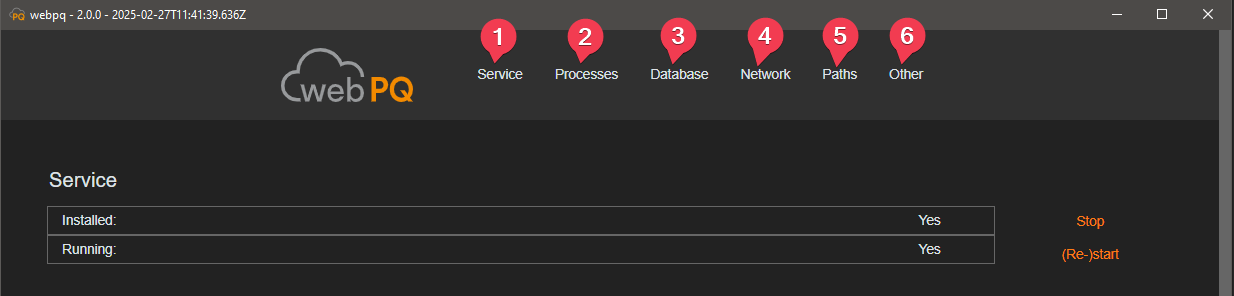

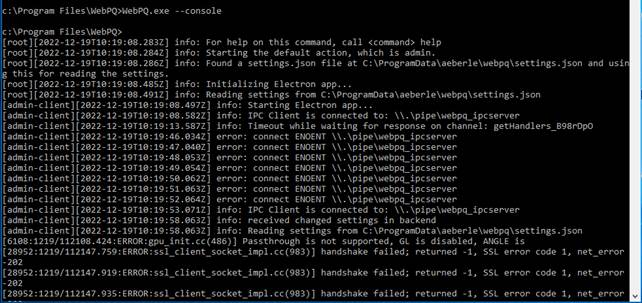

The administrative interface or backend may open with pre-configured connection settings during the initial installation. It can be accessed anytime on the host via the taskbar (next to the clock) by right-clicking the "WebPQ" icon and selecting "Administration."

During the initial setup of the software, usually only the hostname of the database server, the database name, and the username and password of the database need to be configured in the "Database" section. If the database is installed on the same host as the WebPQ software, the default values can usually be retained.

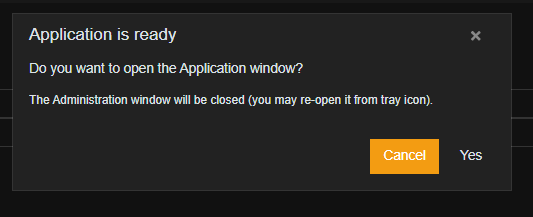

After all settings have been entered correctly, clicking the "Update" button restarts the processes. You will then be asked whether the application should be started with the embedded web browser. Clicking "Yes" opens the frontend in the app.

The administrative interface itself is generally divided into six main areas, which can be accessed by clicking on the name.

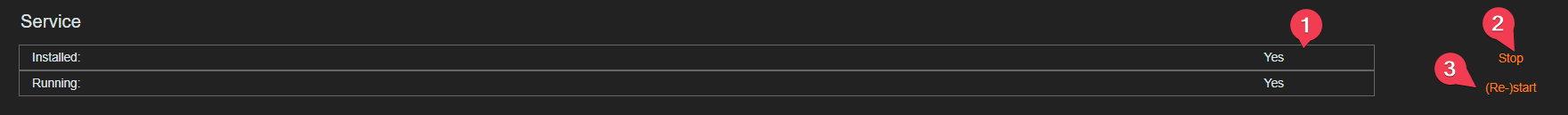

In the "Service" section, the user can monitor the status of (#1), restart (#2), and stop (#3) the background service "WebPQService.exe," which runs under the "System" account.

Generally, the service that provides the web server for the clients should be both installed (Installed: YES) and continuously running (Running: YES).

The service starts automatically when the host starts and runs in the background. It is responsible for communication between the clients and the web server.

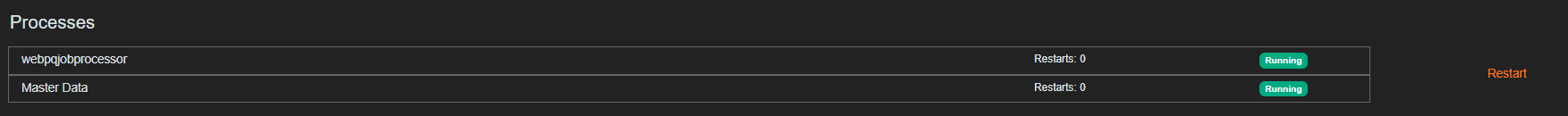

The "Processes" section shows the running processes of the software. Here, the processes can be monitored and restarted if necessary.

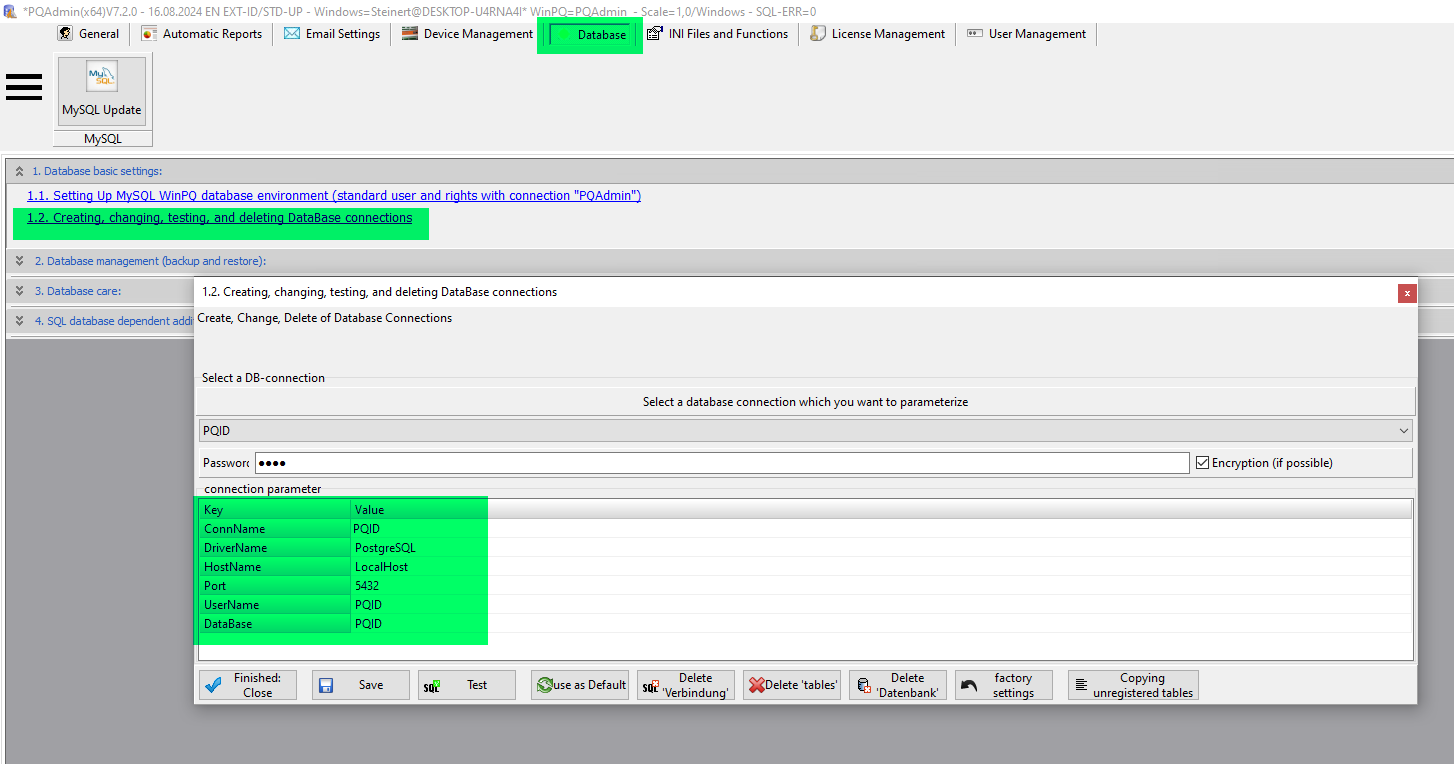

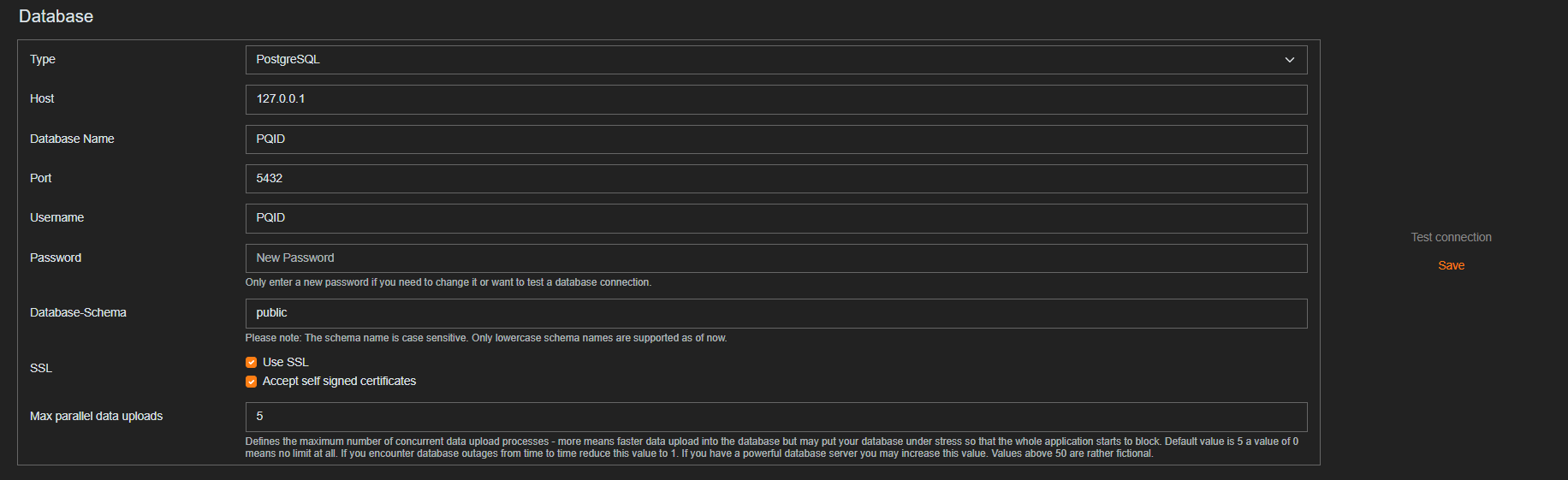

The settings in the database section are necessary to establish a connection with the database server. The database connection settings can be found in WinPQ under "System Management >> Database" if an existing WinPQ database is to be used for WebPQ.

| Parameter | Description |
|---|---|
| #1 Type | Specifies the type of database used (MySQL / MariaDB, PostgreSQL & MS-SQL) |
| #2 Host | Specifies the IP address / hostname of the server where the database is running |
| #3 Database Name | Name of the database where the measurement data is stored and where the WebPQ settings are also stored. |
| #4 Port | Specifies the TCP port under which the database server from #2 is reachable |
| #5 Username | Username with necessary write permissions on the database specified in #3 |
| #6 Password | Specifies the password for the database connection user. The password is stored encrypted in the Windows Security Storage in the system user. For security reasons, it is not possible to read back the password in the interface! A password should only be entered when necessary changes are made! |
| #7 Database Schema | WebPQ stores data such as user settings, analyses, and many others in schemas. The default value here is always "public" |
| #8 SSL | The connection to the database server is generally encrypted (checkbox checked). |
| #9 Self Signed certificates | The supplied certificates of the databases are by default "self signed." However, these can be replaced by company-specific certificates. |
| #10 Max parallel data uploads | Sets the maximum number of parallel uploads to the database! By default, 5 is selected here. For large systems with high-performance databases, the number can be increased! |
| #11 Test connection | Checks if a connection to the database can be established with the entered data (e.g., if ports are open and all entered data is correct for a connection) |
| #12 Update | Saves the settings and restarts the services and processes if changes are made! |

| For MSSQL databases, the database schema dbo should be used. |

| Note |

For a connection to a WinPQ system installed with its default settings, the following settings should be used.

| Parameter | Value |
|---|---|
| #1 Type | MySQL / MariaDB |
| #2 Host | localhost |
| #3 Database Name | PQID |
| #4 Port | 3306 |
| #5 Username | PQID |
| #6 Password | PQID |

| Parameter | Value |
|---|---|
| #1 Type | PostgreSQL |
| #2 Host | localhost |
| #3 Database Name | PQID |
| #4 Port | 5432 |
| #5 Username | PQID |
| #6 Password | PQID |
| #7 Database Schema | public |

| We recommend changing "default passwords" in any case and using appropriate secure passwords according to your company policies! Instructions for changing passwords can be found in the WinPQ user manual. |

| Note |
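If "Test connection" fails, it can help to check first whether the database port is reachable from the WebPQ host at all. The following Python sketch is not part of WebPQ; it only attempts a plain TCP connection and does not verify the database name, username, or password. The function name `port_is_reachable` is chosen for illustration.

```python
import socket


def port_is_reachable(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        # create_connection resolves the host name and opens a TCP socket.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution errors.
        return False
```

For the default values listed above, `port_is_reachable("localhost", 3306)` would check a MySQL / MariaDB server and `port_is_reachable("localhost", 5432)` a PostgreSQL server. A result of `False` usually points to a stopped database service, a wrong port, or a firewall rule.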

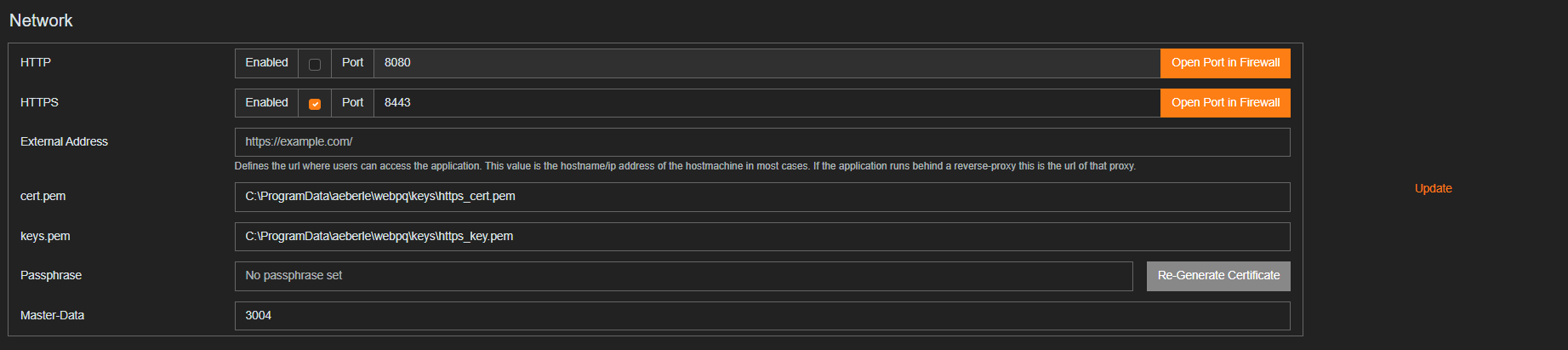

The settings in this section refer to the web server installed on the host (server) in the form of the WebPQ application and the clients (evaluation PCs) that provide the interface.

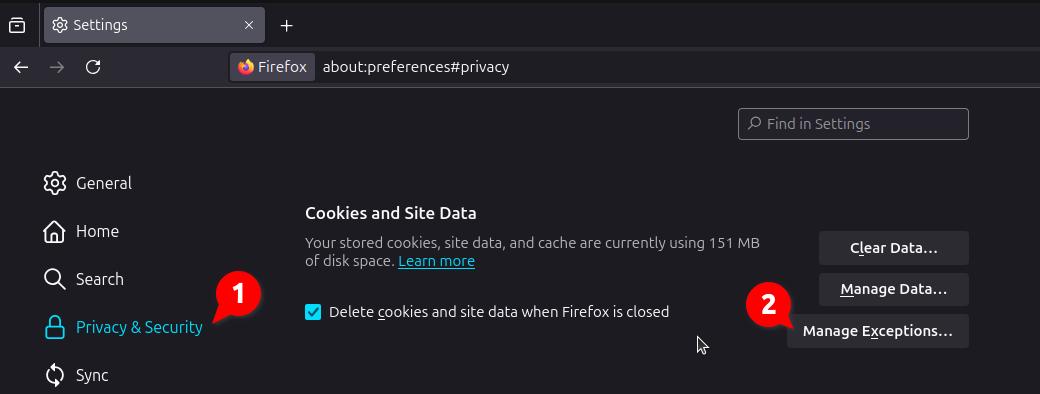

The default settings follow the "security by default" principle. For example, by default the web server on the host is accessible to clients only via HTTPS on port 8443 (#2). Unencrypted access via HTTP is disabled by default.

To use HTTPS, i.e., the encrypted connection from the client (evaluation PC) to the web server, without the browser on the client warning about an unknown certificate, it is recommended to store your own certificates (#3 & #4), protected with a password (#6), in the WebPQ application.

To store the certificates, they must be in PEM format. More information can be found under PEM Certificates.

The certificates can be stored at the specified path and selected in the program interface by clicking (#5) on the path.
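Before selecting a certificate file in the interface, you may want to confirm that it really is PEM-encoded. The following Python sketch is an illustration only and not part of WebPQ. It checks the PEM framing (the `-----BEGIN CERTIFICATE-----` and `-----END CERTIFICATE-----` lines and the Base64 body) with the standard library; it does not validate the certificate itself, its chain, or its expiry date. The function name is hypothetical.

```python
import ssl


def looks_like_pem_certificate(text: str) -> bool:
    """Return True if text has PEM certificate framing and a Base64 body."""
    try:
        # Raises ValueError if the header/footer is missing or the
        # body cannot be decoded as Base64.
        ssl.PEM_cert_to_DER_cert(text.strip())
    except ValueError:
        return False
    return True
```

A file can be checked by reading it as text and passing its content to the function. Note that private key files use a different header and would be reported as `False` by this check.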

| Using "unencrypted" connections and unknown certificates can lead to "data theft" and "data loss"! We always recommend using the "secure" default configuration (HTTPS)! |

| Note |

In this section of the software, all paths where the software stores data are listed. This includes exports of reports in PDF format, data exports in various formats such as CSV and COMTRADE, as well as NEQUAL, log files like the audit log, and temporary files.

This section of the software specifies the path where the settings made in points #1, #2, #3, #4, and #5 are stored (settings.json). For example, if you want to set up a new server or plan a migration, this file can be used to transfer the settings from PC A to PC B. Passwords for the database connection and certificates are excluded from this.

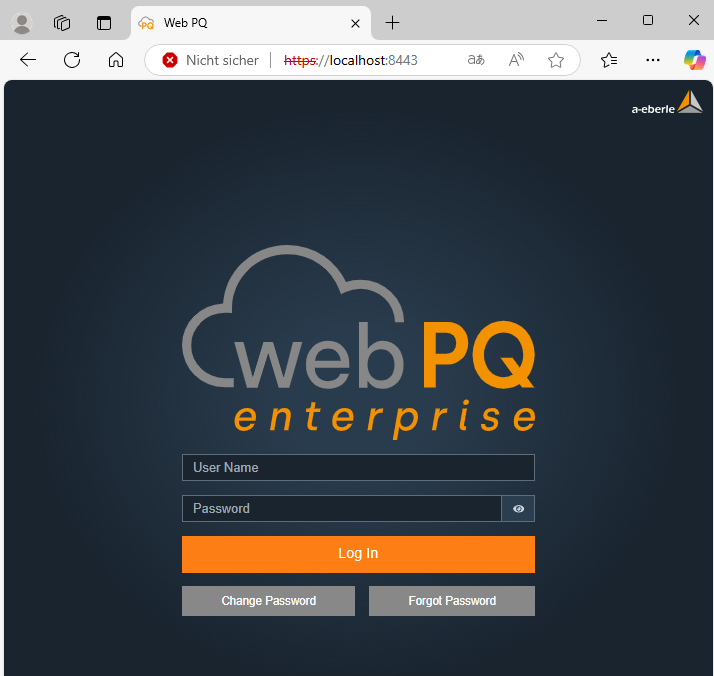

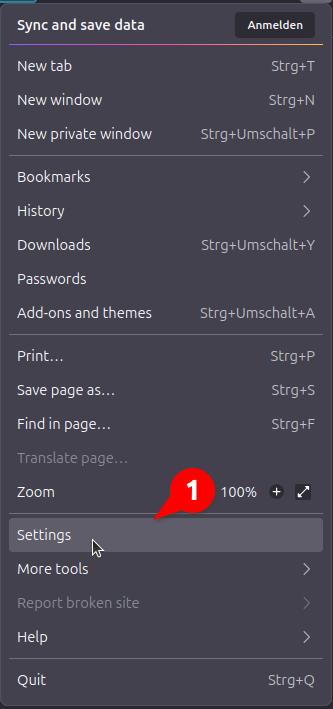

The WebPQ software is opened on workstations via a web browser by accessing a specific link. This link may vary depending on the IT environment and installation. For smooth operation, the port defined during installation must be reachable from the workstation PC, and the workstation must be able to connect to the server on which the WebPQ software is installed.

Examples:

Local Installation

If the server on which WebPQ is installed is also the workstation PC, the software can be opened in the browser using the following link.

Client-Server Architecture

If the WebPQ software is installed on a different PC (server) than the workstation PC (client), the address of the server (in this example, the server has the IP address 10.10.1.20) must be entered.
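How such a link is composed can be sketched in Python. This is an assumption-based illustration: it uses the default HTTPS port 8443 mentioned in the web server settings and assumes that no additional path is required. The actual link depends on the installation, and the function name `webpq_url` is hypothetical.

```python
def webpq_url(host: str = "localhost", port: int = 8443, secure: bool = True) -> str:
    """Build a browser address from host, port, and protocol.

    Assumes the default HTTPS port 8443 and no extra path component.
    """
    scheme = "https" if secure else "http"
    return f"{scheme}://{host}:{port}"
```

With these assumptions, `webpq_url()` yields the address for a local installation, and `webpq_url("10.10.1.20")` yields the address for the client-server example above.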

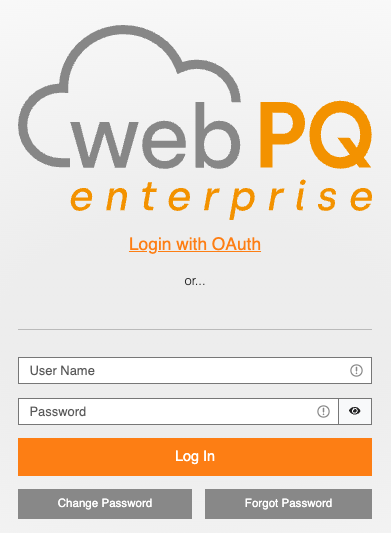

System Login:

Enter the username and password to log in directly to the system!

The user can set their login password directly via the Change Password function before logging in by entering their username and current password. The password policy set for the tenant must be observed!

If the system in the local installation has access to an SMTP server / mail server, it is possible to recover the password using the Forgot Password function and the email address assigned to the user.

| After five incorrect login attempts, the user is blocked for five minutes. Incorrect login attempts are also logged in the system. |

| Note |
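The lockout rule described in the note can be illustrated with a short Python sketch. It is a simplified model of the rule (five failed attempts, five minutes of blocking) and not the actual WebPQ implementation; the class name and the behaviour after the block expires are assumptions.

```python
from datetime import datetime, timedelta

MAX_ATTEMPTS = 5                      # failed attempts before blocking
LOCK_DURATION = timedelta(minutes=5)  # duration of the block


class LoginGuard:
    """Tracks failed logins for one user (illustrative model only)."""

    def __init__(self) -> None:
        self.failed = 0
        self.locked_until = None

    def is_locked(self, now: datetime) -> bool:
        return self.locked_until is not None and now < self.locked_until

    def register_failure(self, now: datetime) -> None:
        if self.is_locked(now):
            return  # attempts during the block are not counted here
        self.failed += 1
        if self.failed >= MAX_ATTEMPTS:
            self.locked_until = now + LOCK_DURATION
            self.failed = 0  # assumption: counter restarts after a block

    def register_success(self) -> None:
        self.failed = 0
        self.locked_until = None
```

In this model, the fifth failed attempt starts the block, and a login becomes possible again exactly five minutes later.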

General Layout of the WebPQ Software

Overview of the user interface and its structure for easy navigation in the software.

The software is divided into the following areas for PQ and other analyses:

Visualization Pages

Description of the pre-configured dashboards that enable quick and targeted display of PQ measurements.

Usage in Analysis

Guide to efficient use of analysis functions, including available tools and settings.

Analysis Cockpit

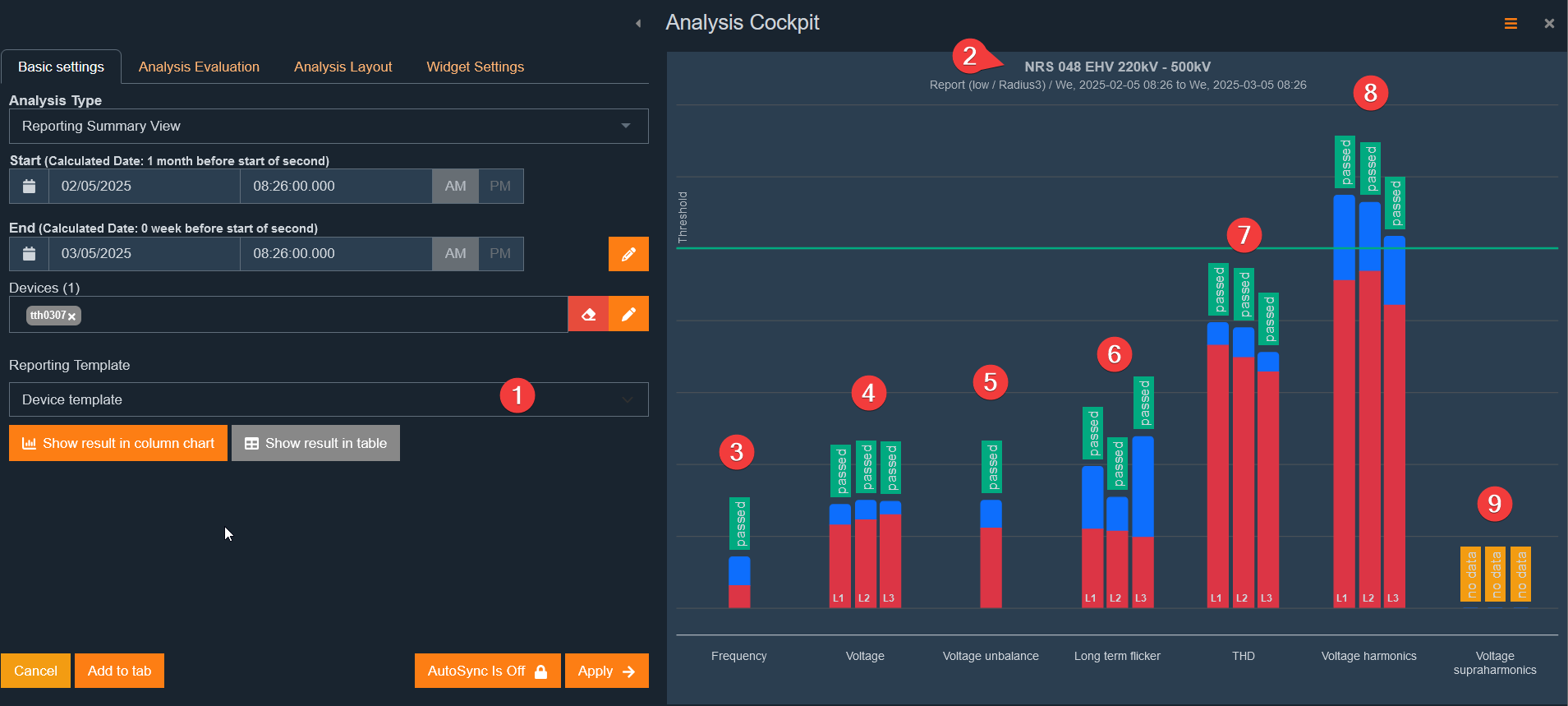

The central analysis tool for creating individual dashboards. More than 20 different analysis types are available to perform customized evaluations.

Device Analysis

Detailed & pre-configured analysis functions for individual measuring devices to specifically access their data. A quick and easy way to keep track of individual measuring points.

Dashboard Analysis

Individual dashboards can be created via the Analysis Cockpit, which can be directly or automatically converted into scheduled reports. It is possible to create any number of dashboards and flexibly adapt them to different analysis requirements.

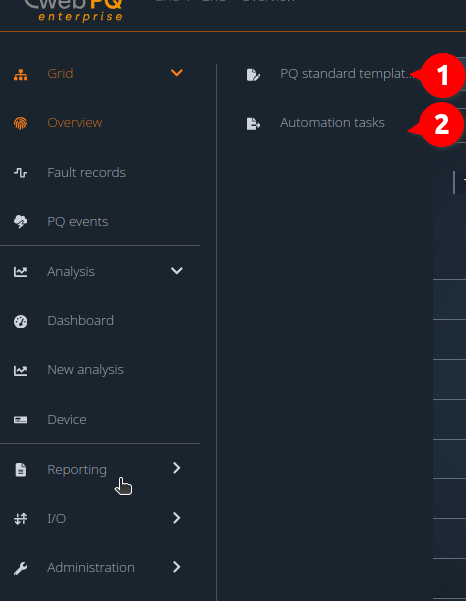

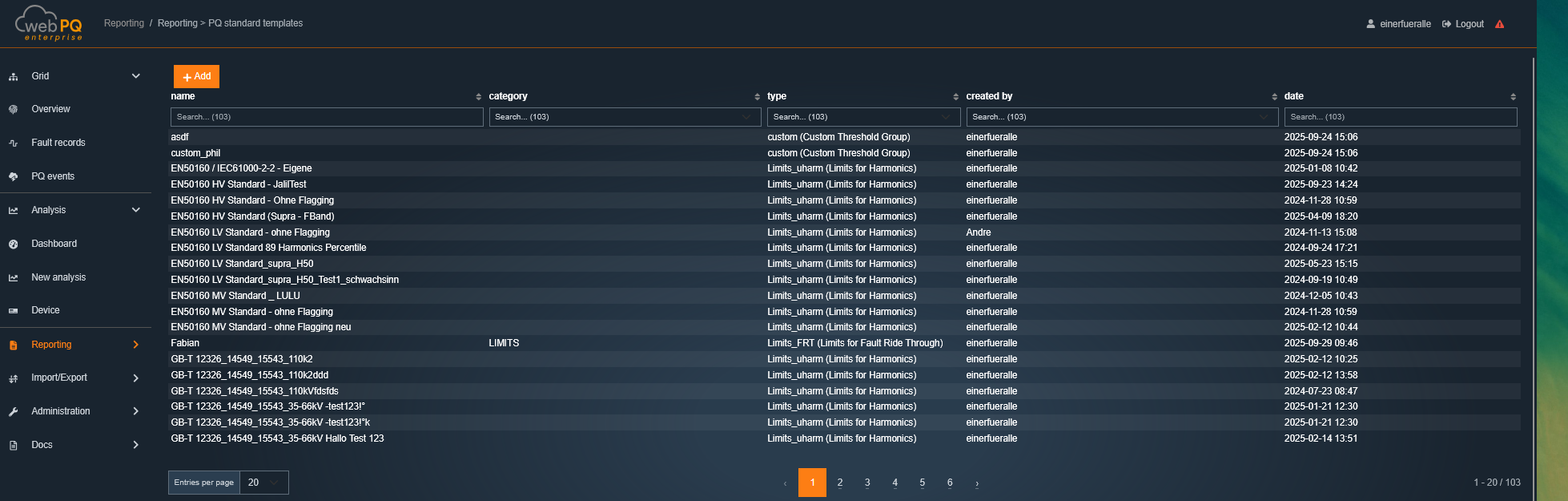

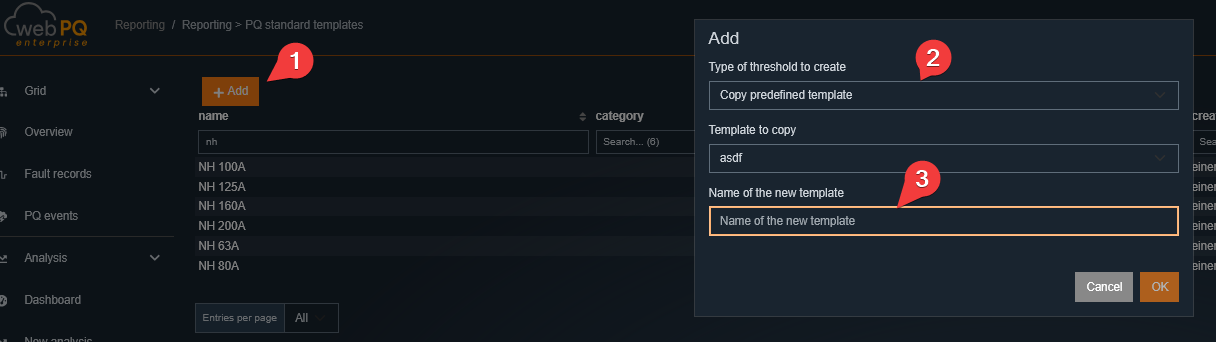

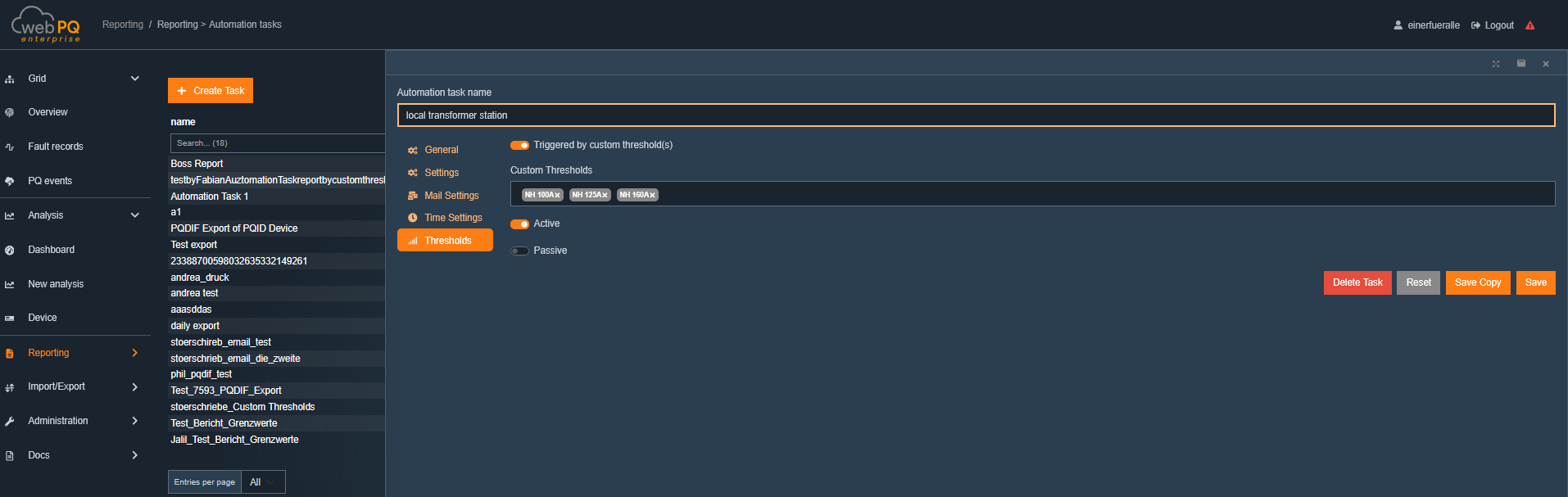

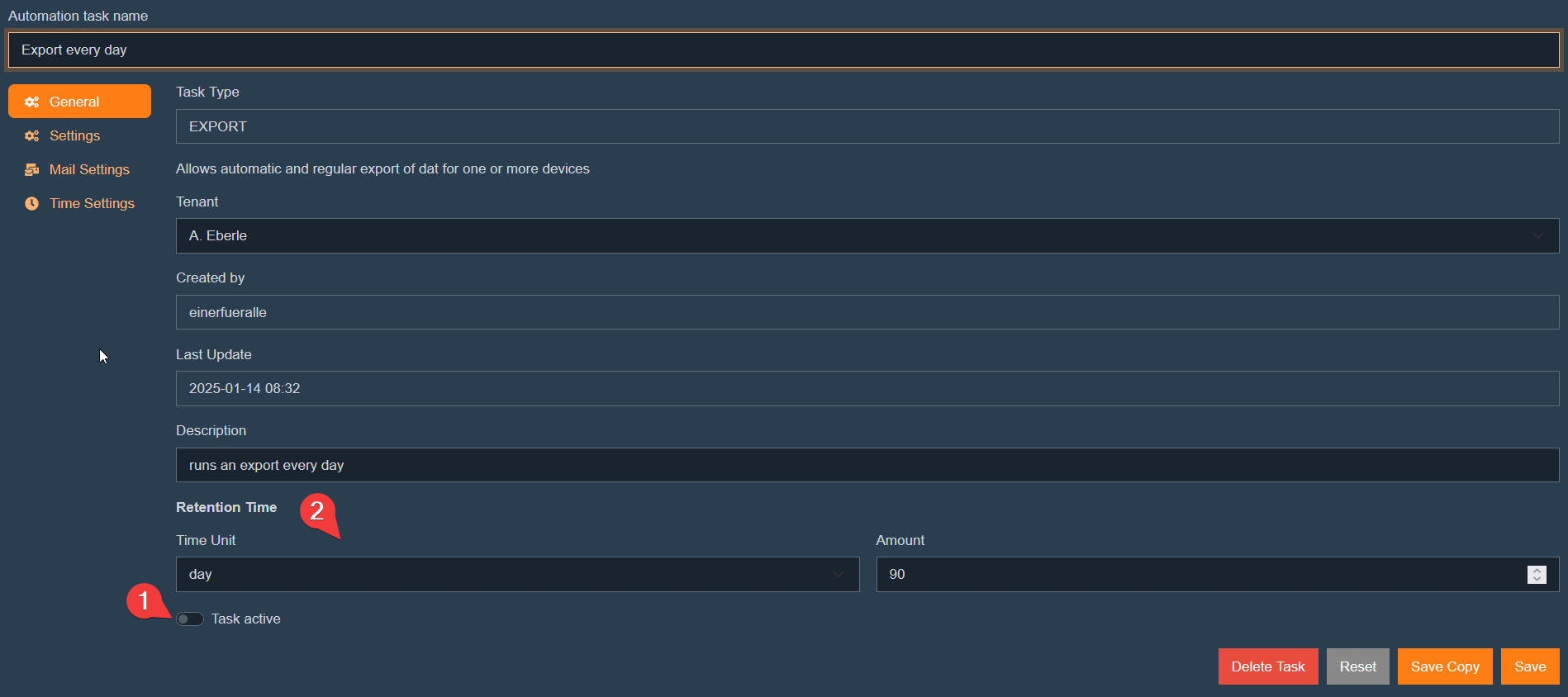

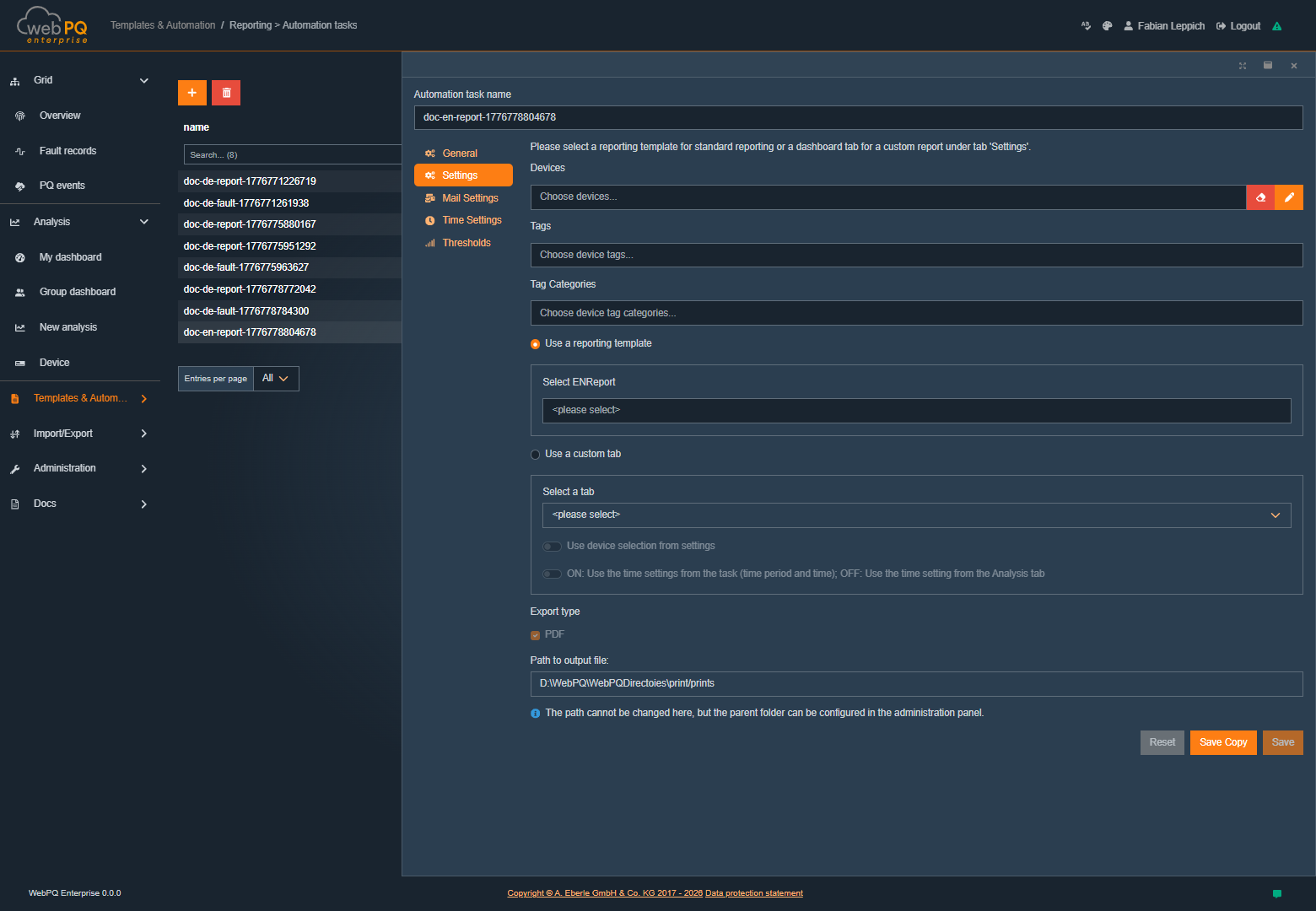

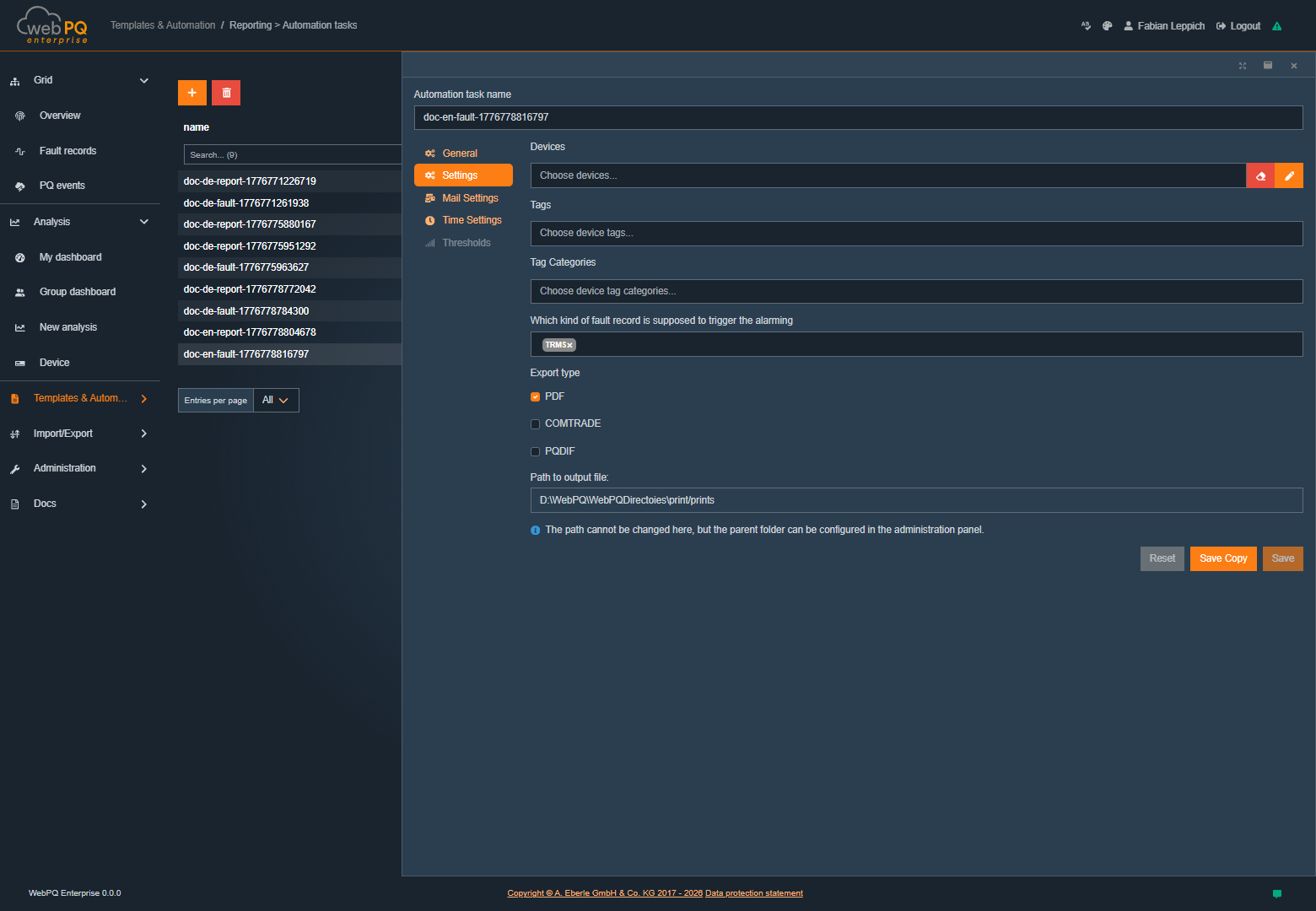

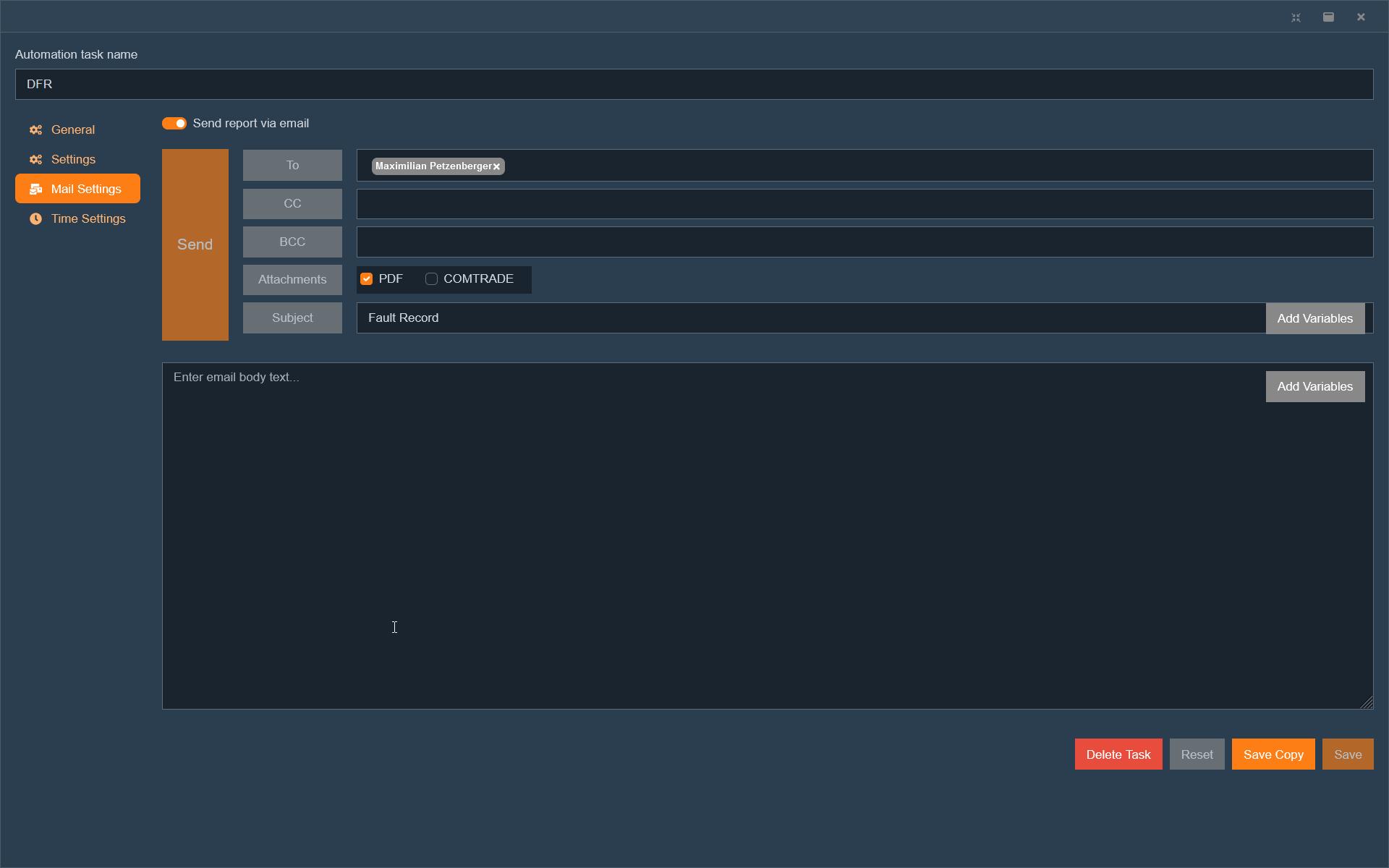

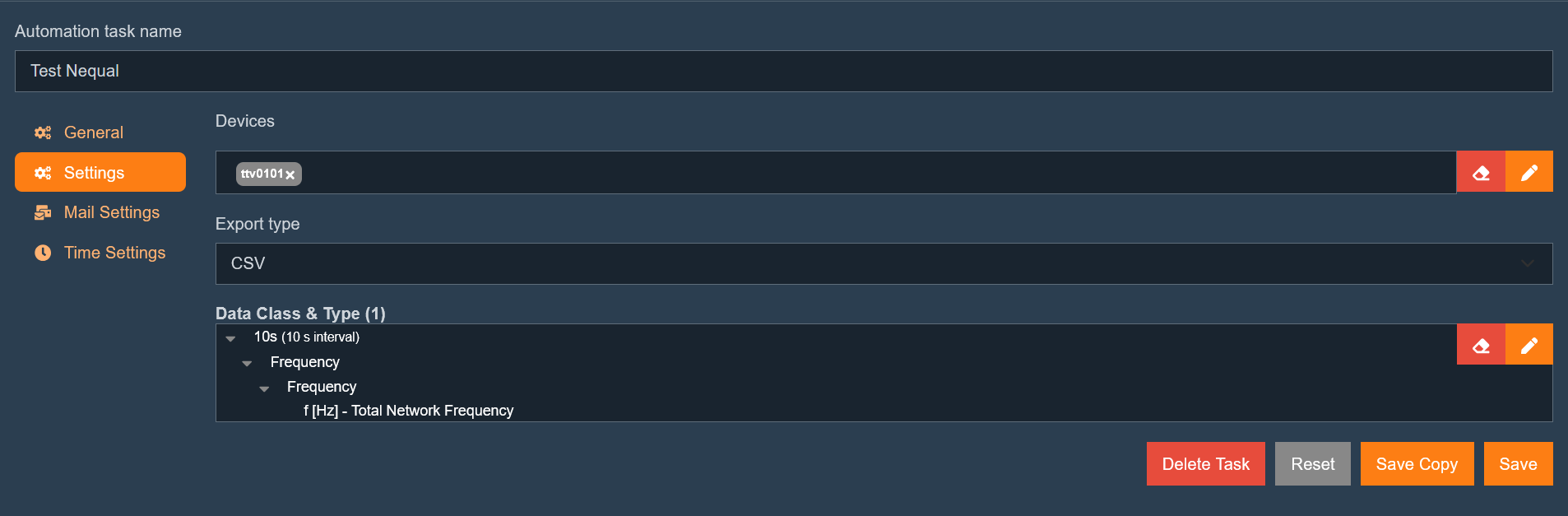

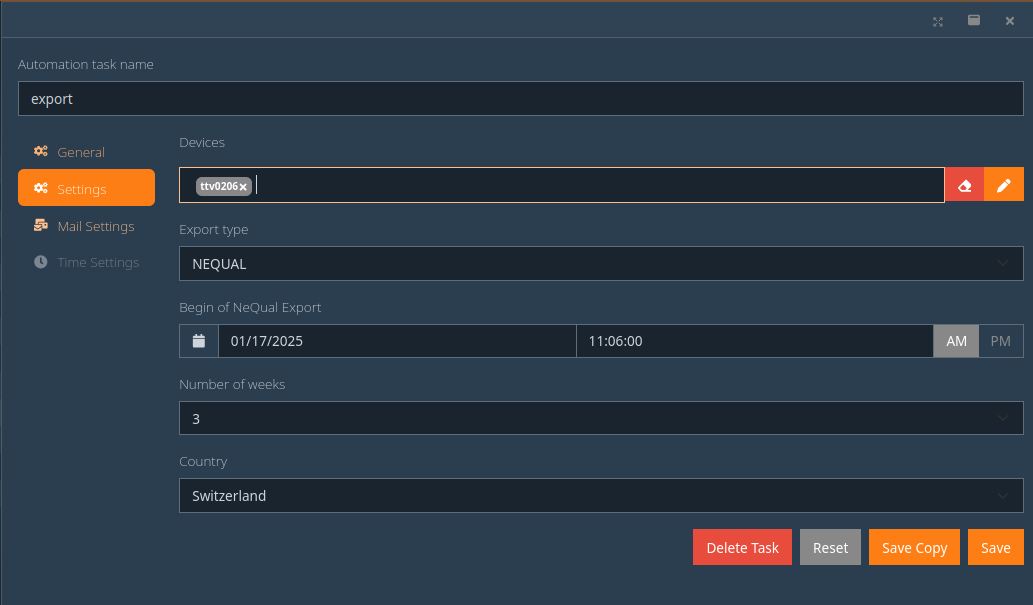

The "Reporting" area includes all settings required for creating normative reports (PQ standard templates). It also offers the possibility to define automated tasks (automation tasks) to execute reports and data exports on a scheduled basis.

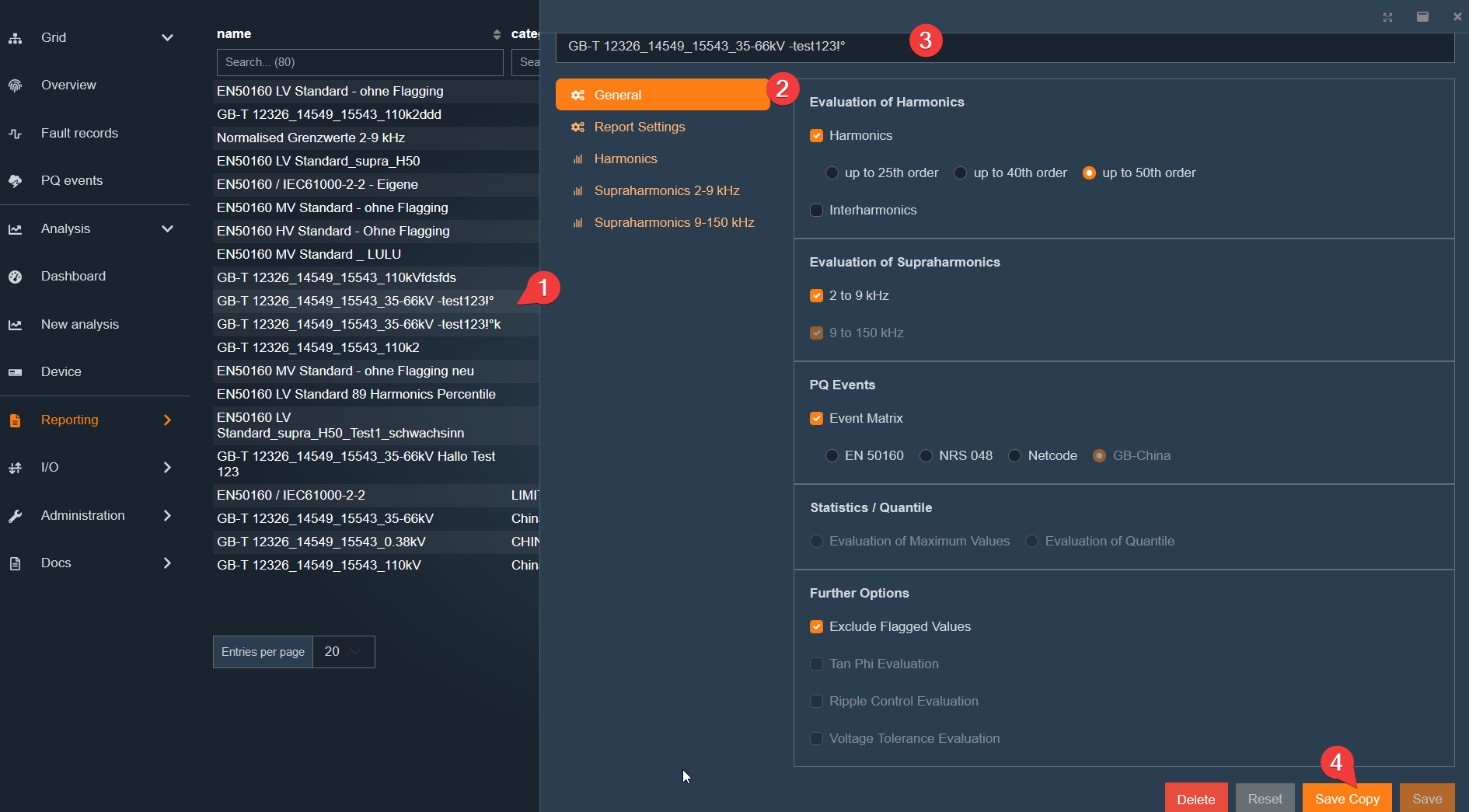

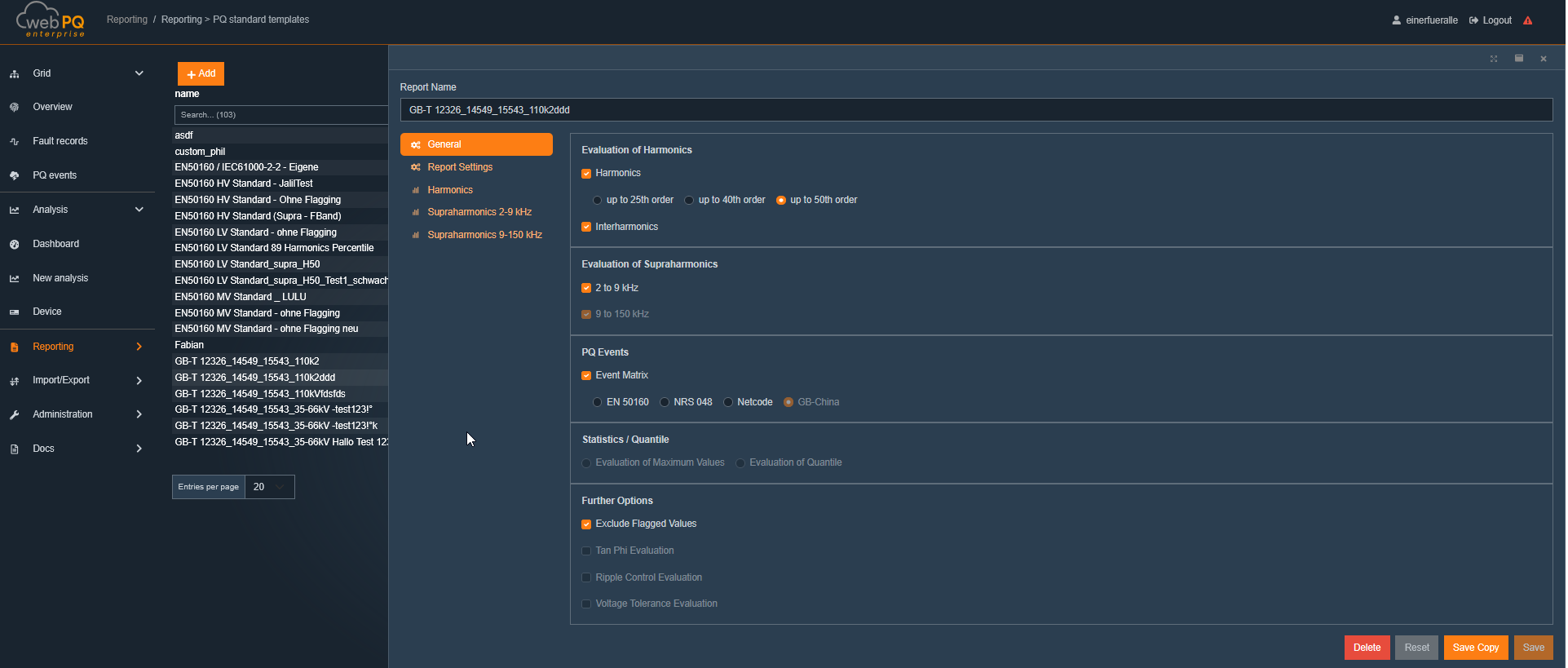

PQ Standard Templates #1

Here, all templates for calculating statistical values can be managed. This includes standardized templates such as EN50160, IEC61000-2-4, and IEC61000-2-2, which are regularly updated by A. Eberle. Based on these standard templates, the user can create their own templates and apply them to specific measuring points.

Reporting Automation #2

Allows the configuration of automated reports, email dispatch of reports and disturbance records, as well as the automatic data export and import management. The function links predefined tasks with measuring points and templates to ensure efficient report generation.

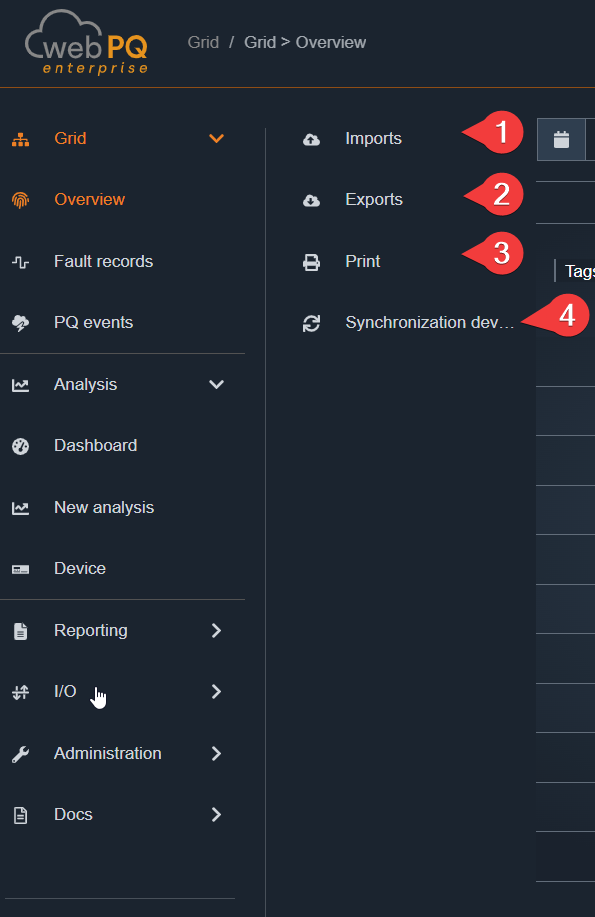

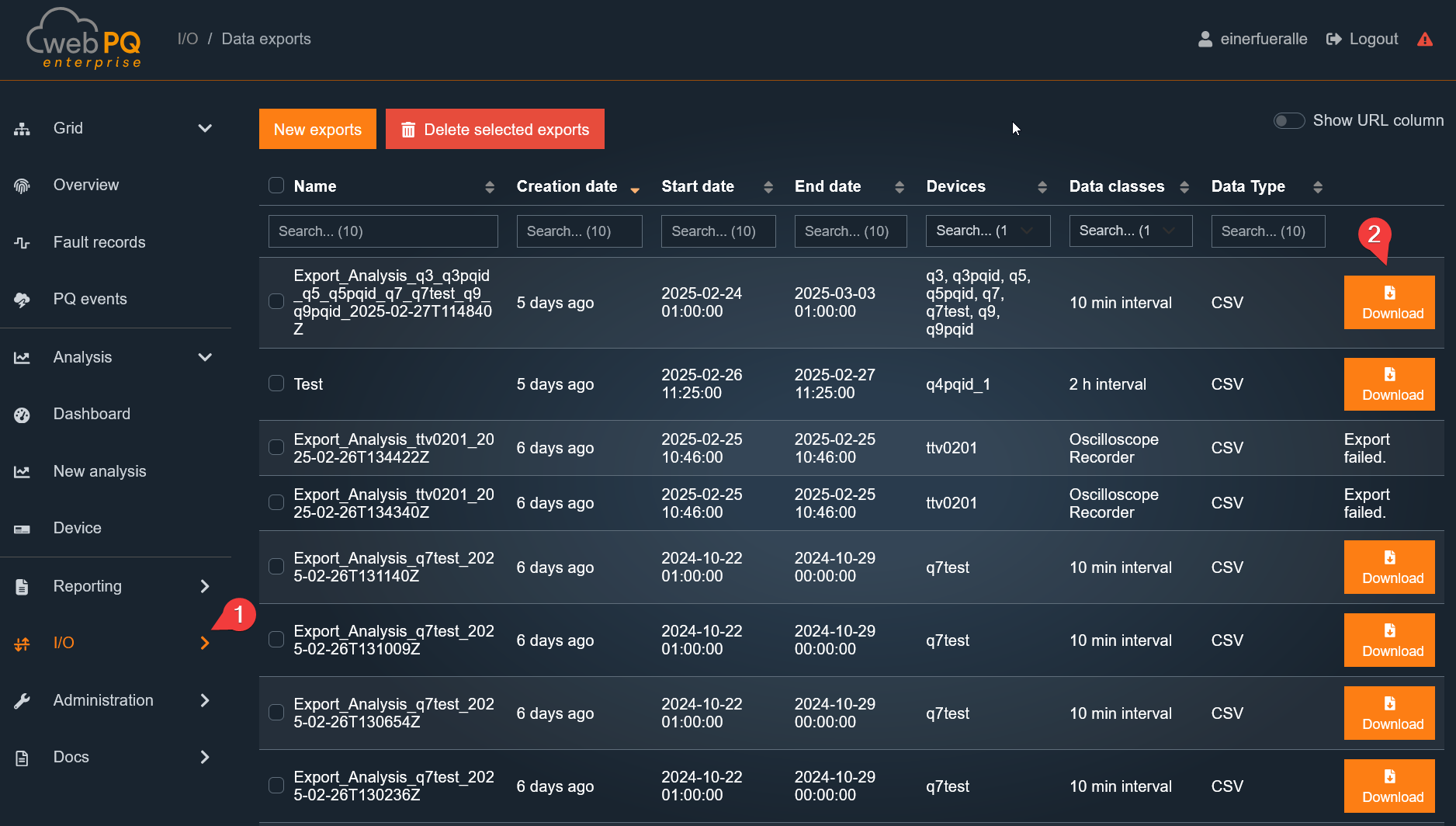

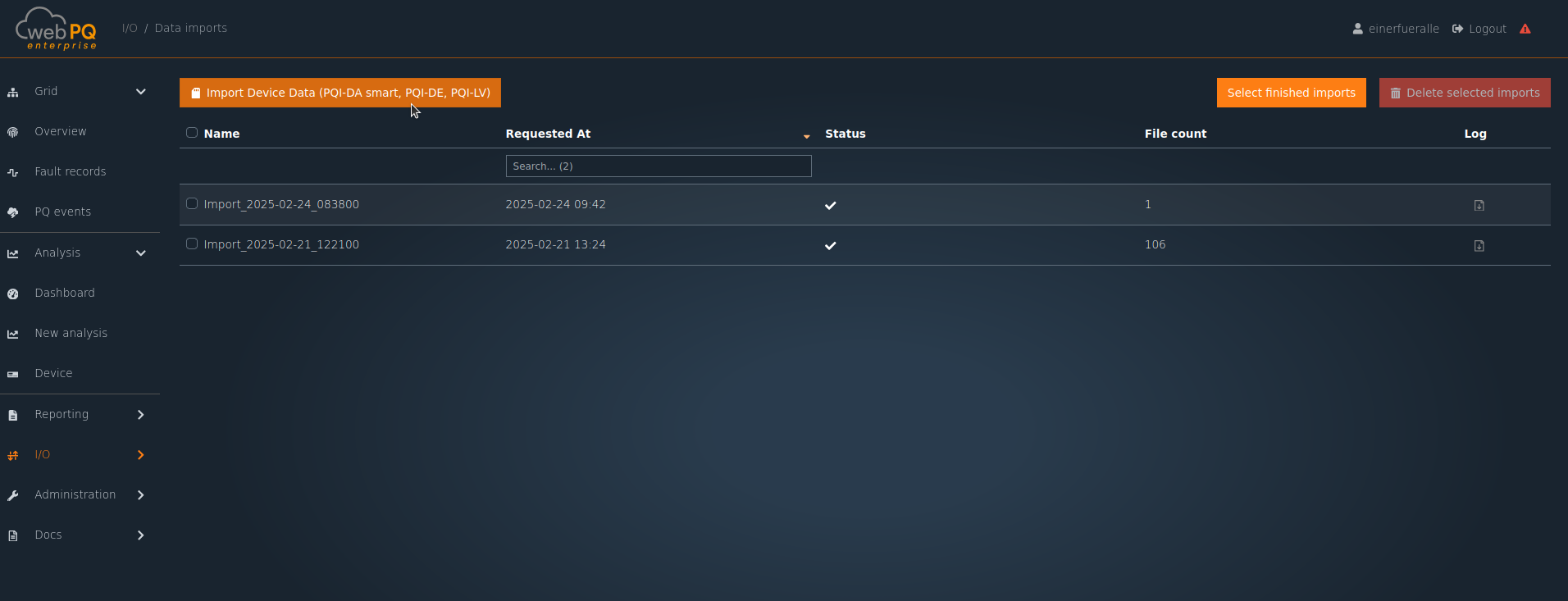

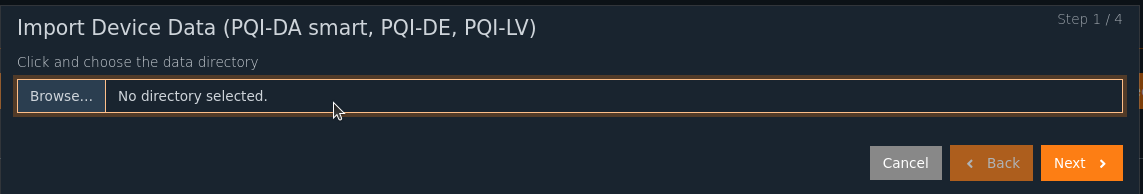

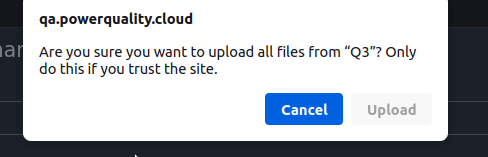

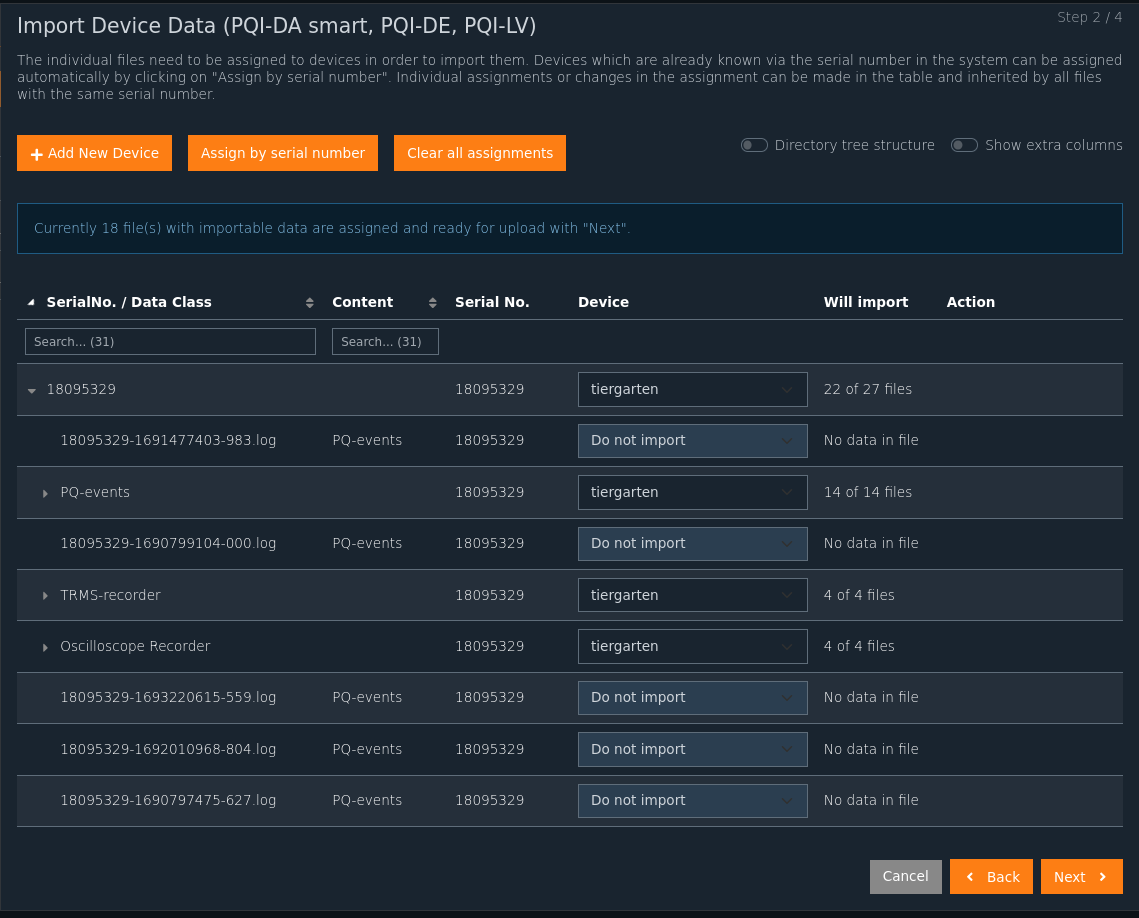

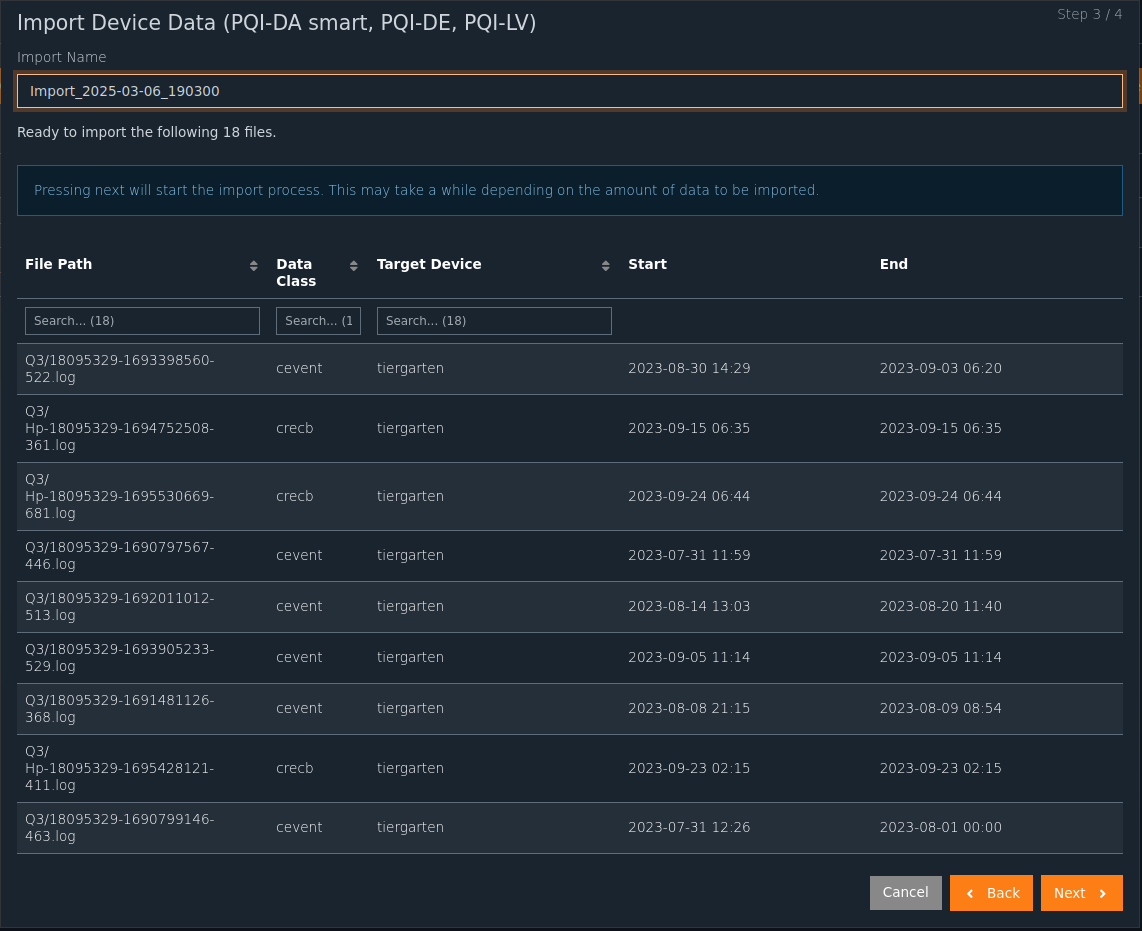

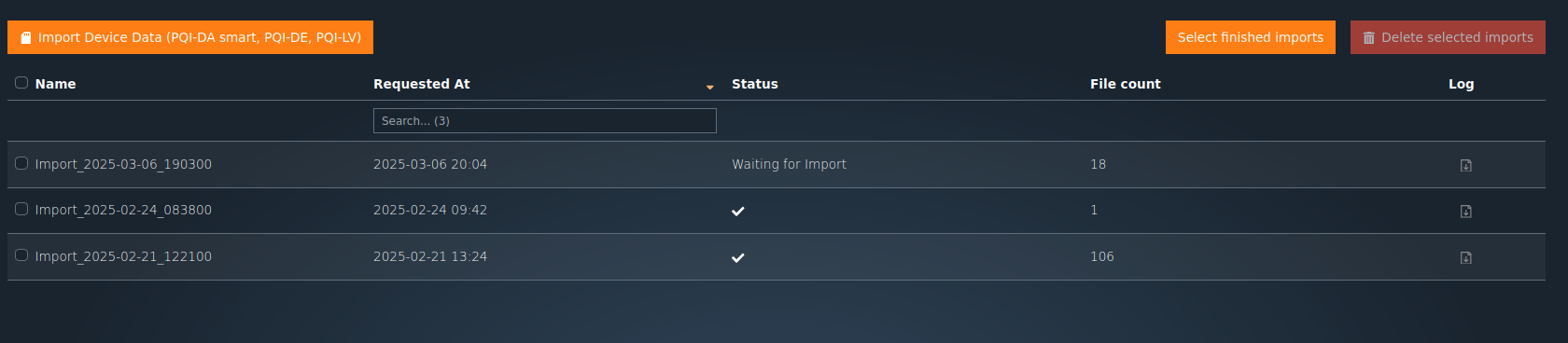

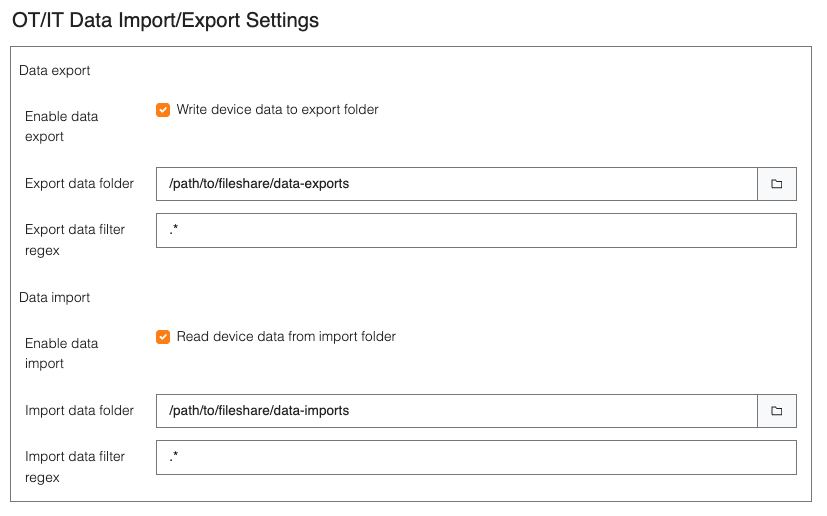

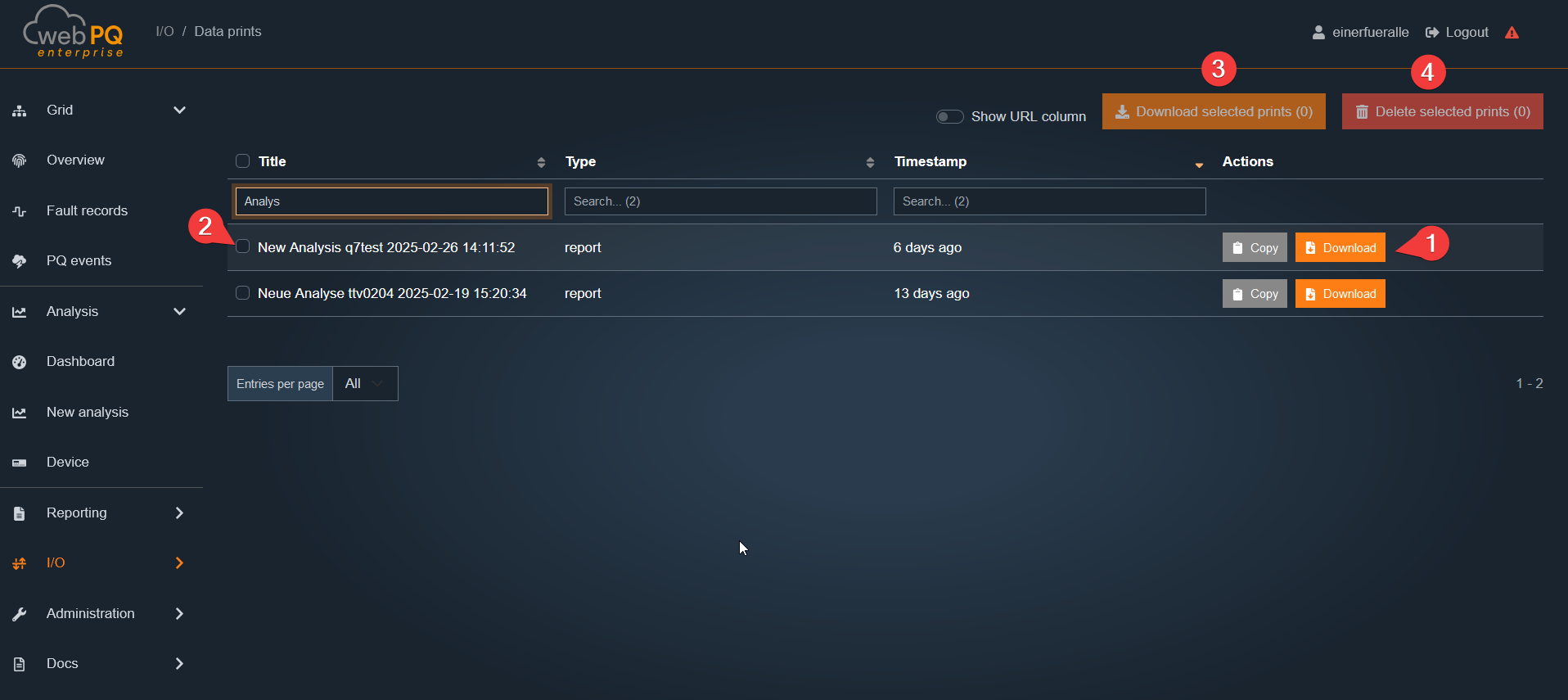

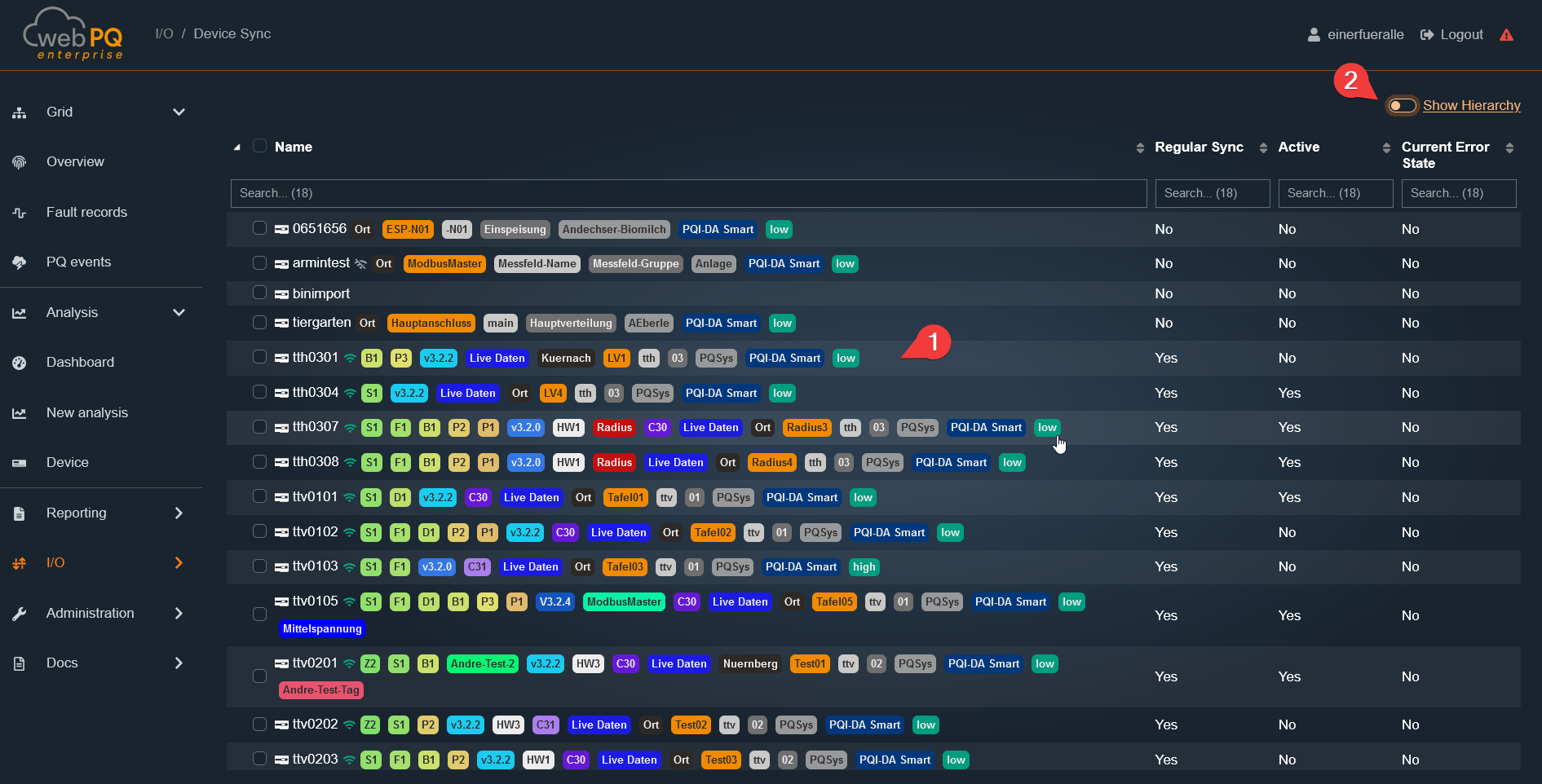

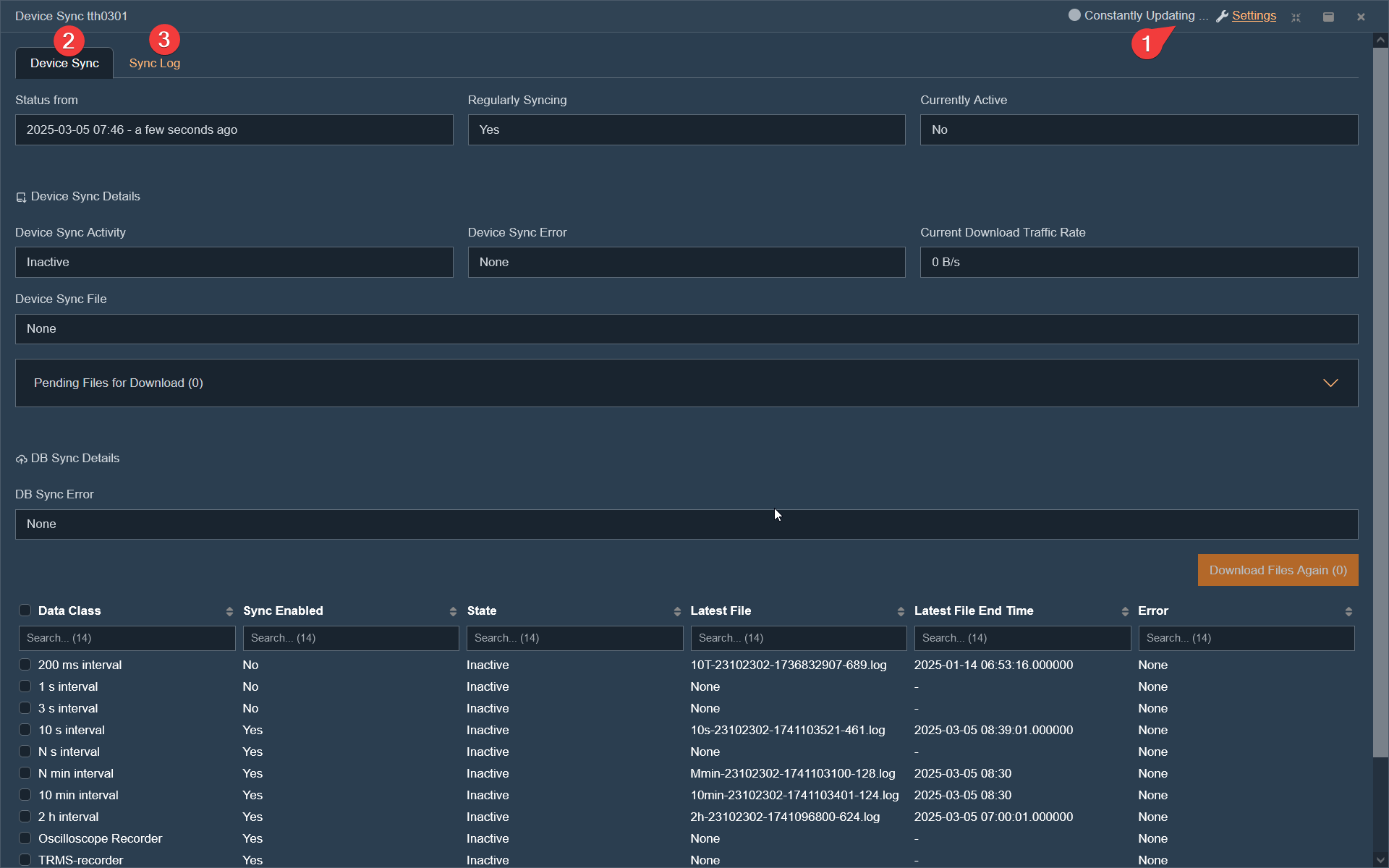

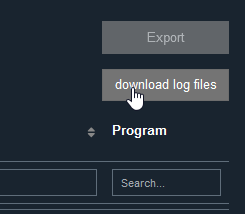

The Import/Export area includes all interfaces for data and report exchange between the WebPQ platform and external systems. Here, measurement data can be imported, reports and raw data can be exported, and synchronization processes can be monitored. Additionally, functions for manual download of PDF reports as well as CSV and measurement data import are available.

Data Synchronization #3

Synchronization of measurement data from one WebPQ instance to another by means of files.

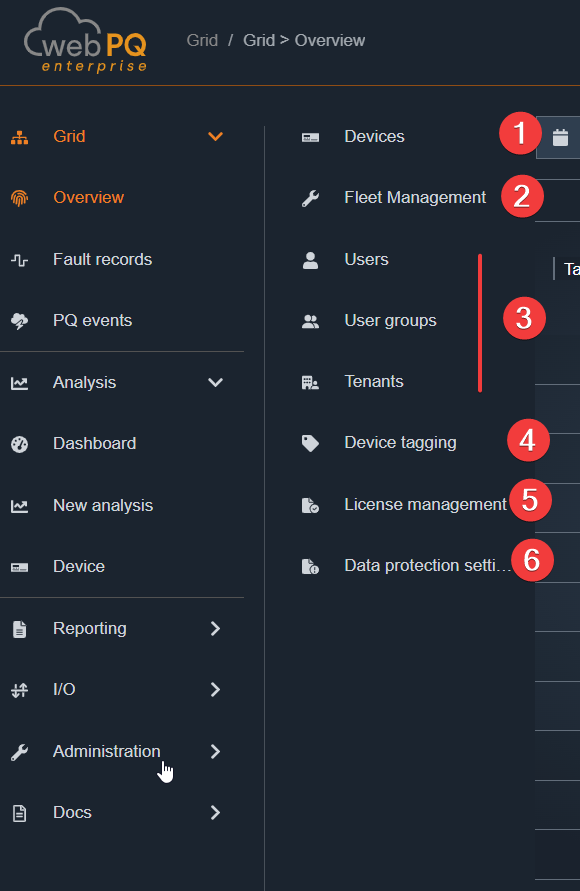

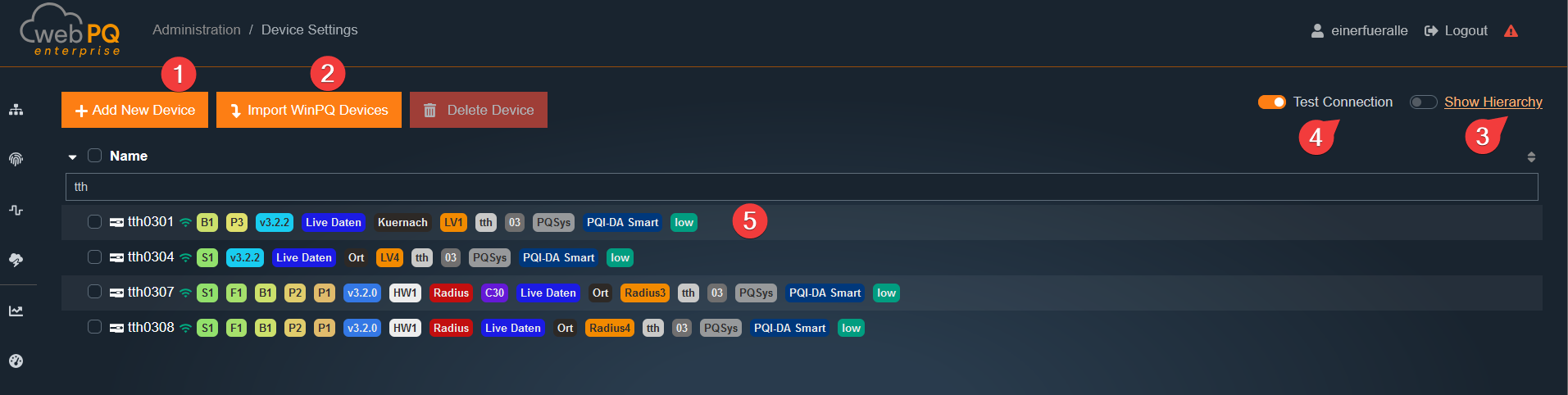

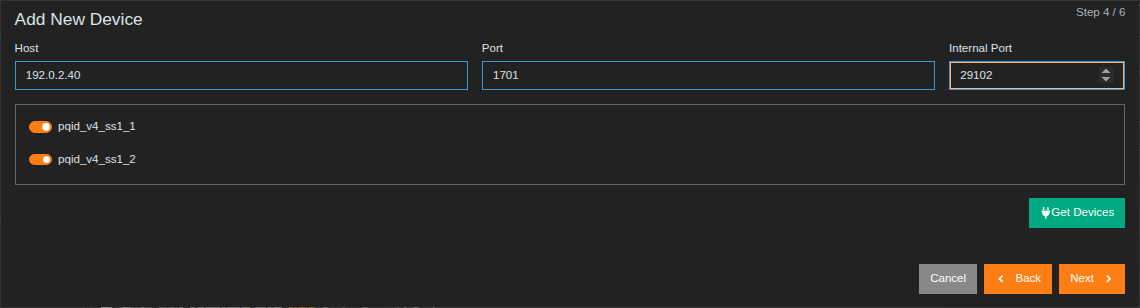

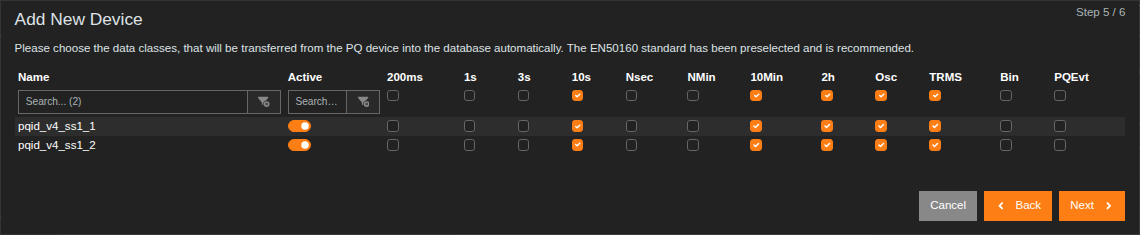

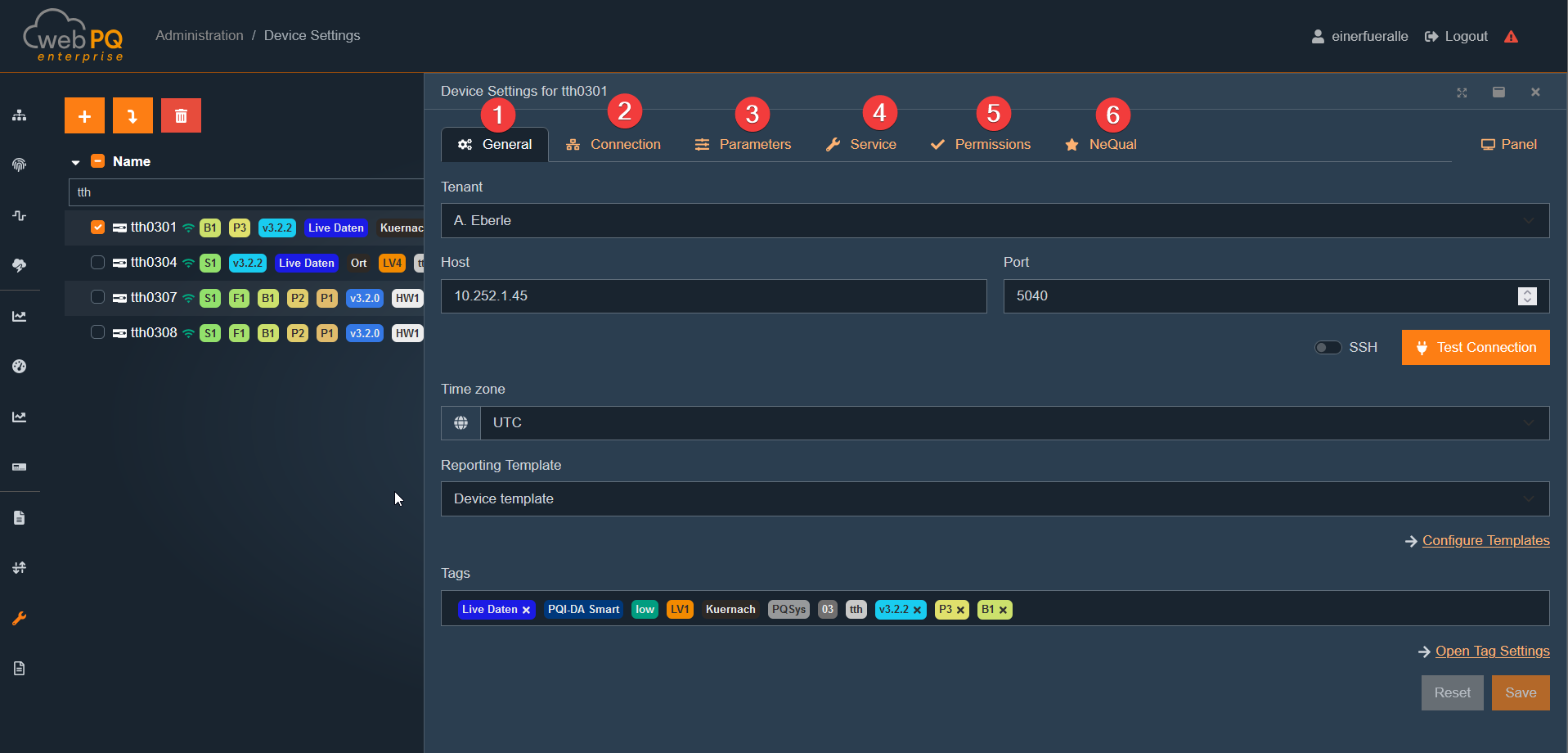

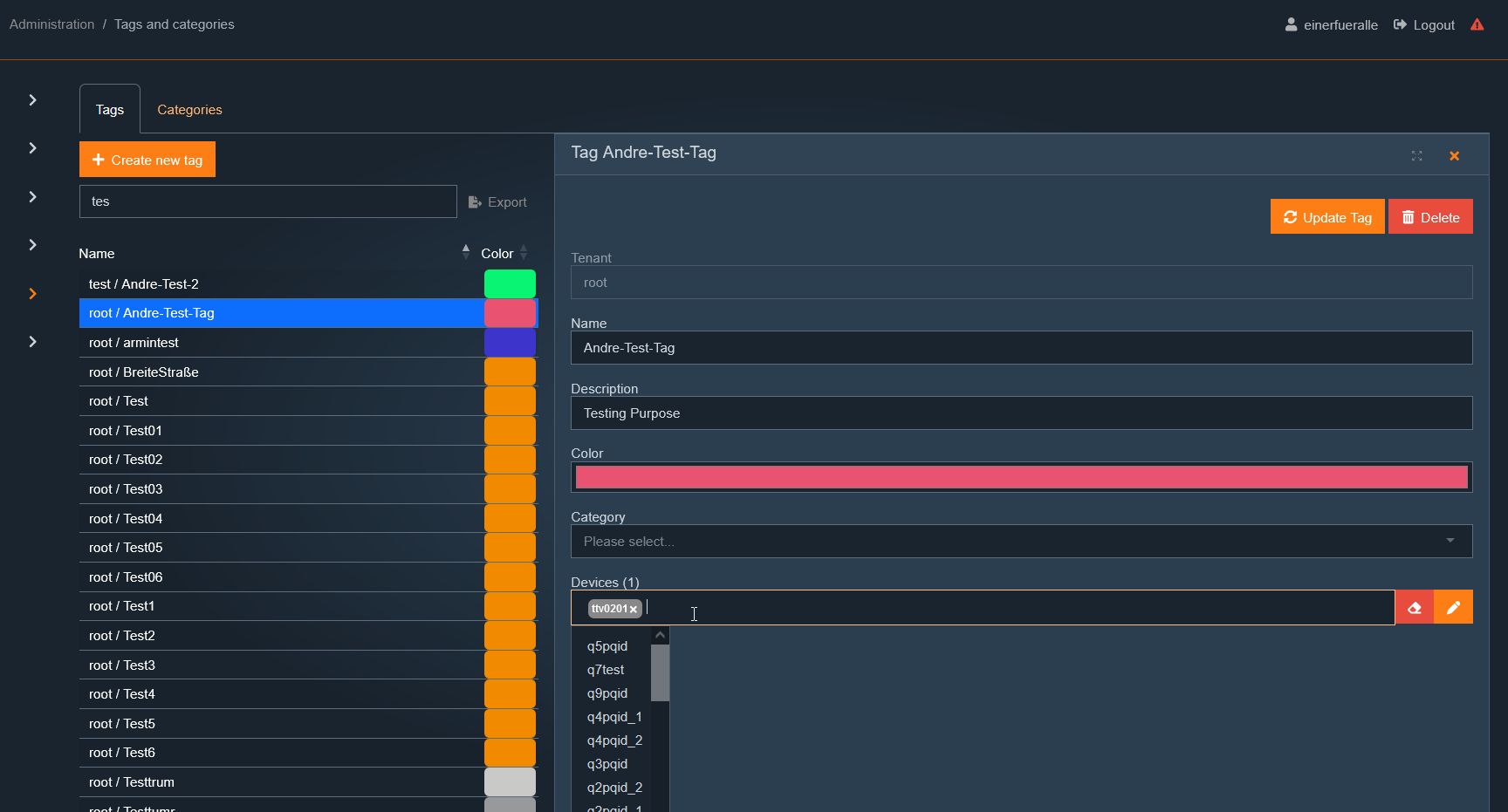

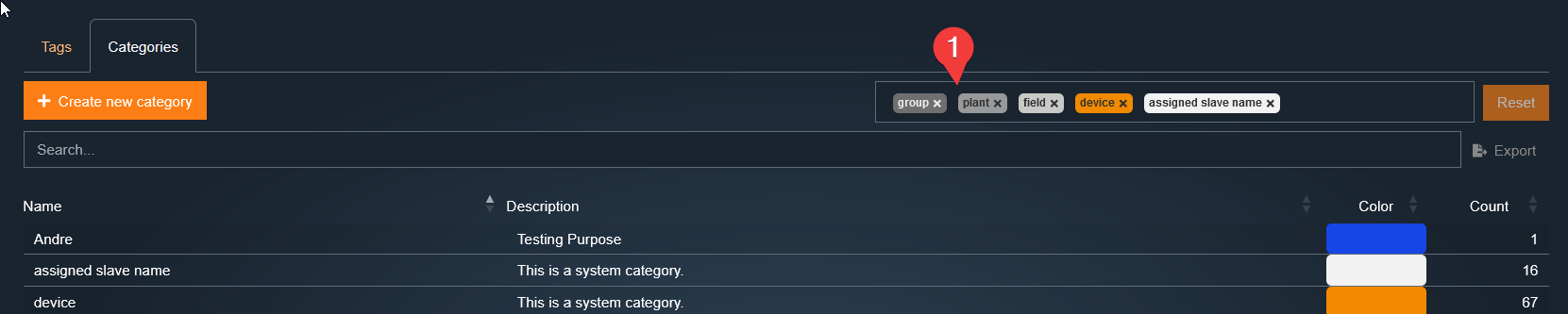

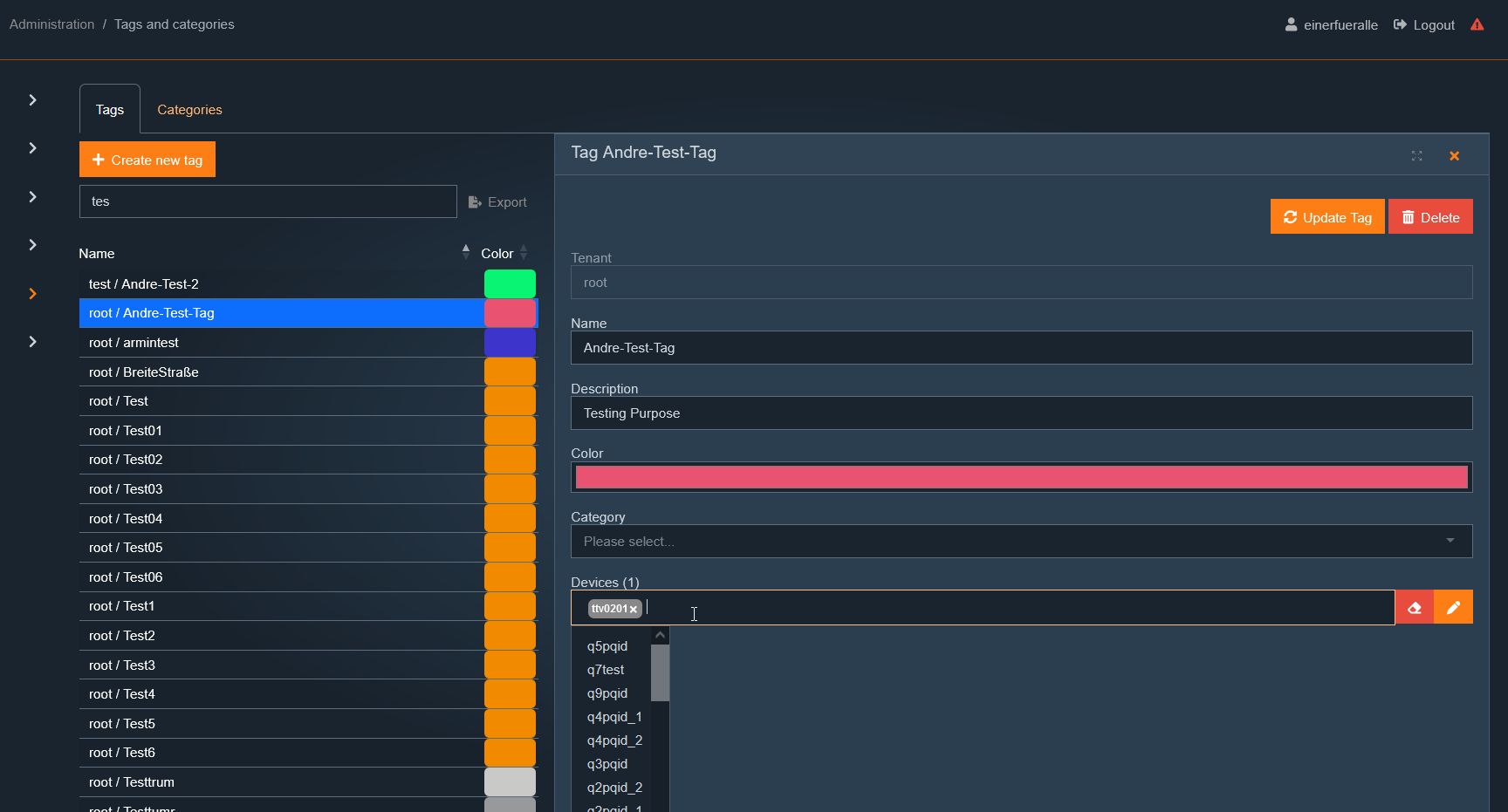

The Settings area includes all administrative functions of the software. This includes the configuration of measuring points, user and rights management, and tenant management. Additionally, other central settings are available here, such as device tagging, license management, and – if licensed – fleet management.

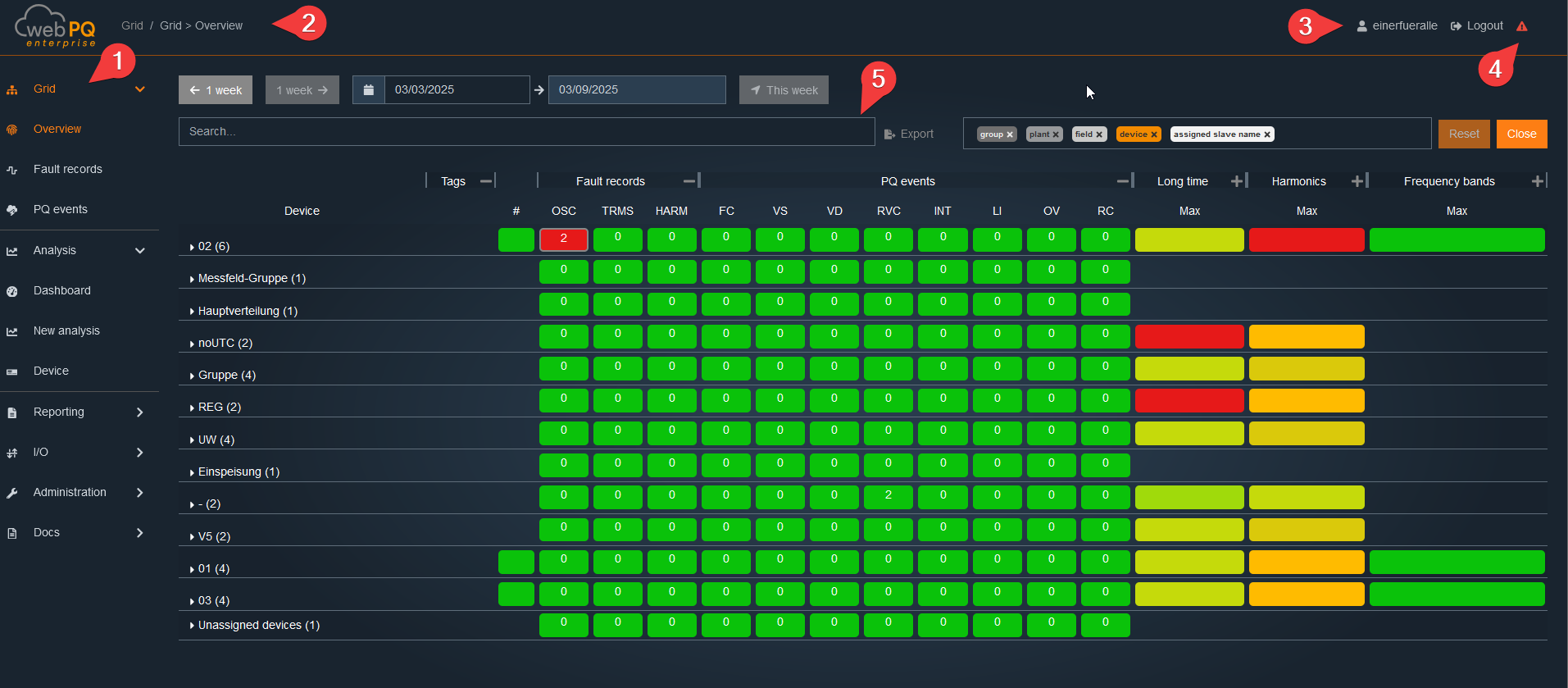

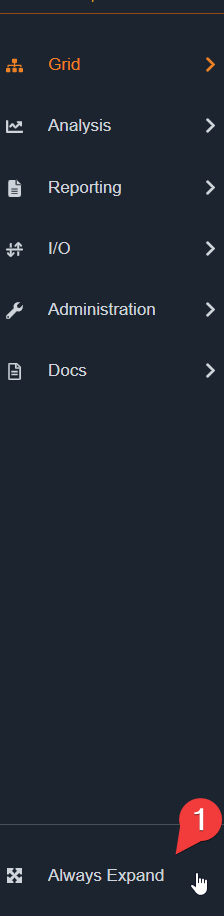

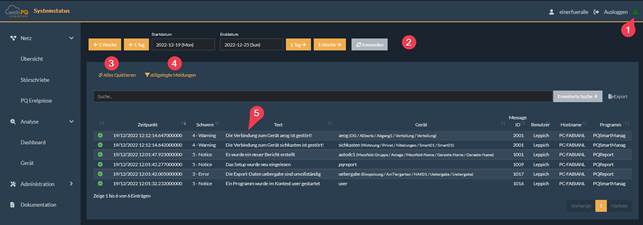

The WebPQ application is divided into three main areas:

Navigation Bar: The navigation bar #1 is located on the left side. For smaller screen resolutions, it is moved to the top area. In this case, the navigation can be opened by clicking on the three lines.

Header – The header contains important controls and information:

Navigation Bar (#2): Shows the current position within the application and allows quick navigation.

Account Settings (#3): Here you can manage personal settings and view user information. See:

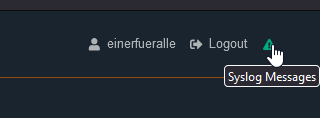

System Status / Syslog (#4): Provides information about the current state of the application, such as connection status or system messages. See: System Messages / System Status or Logfiles

Workspace: The central area #5 of the application, where the actual content and functions are displayed and edited. This is where data entry, analysis, and visualization take place.

To use the workspace as efficiently as possible, you can reduce the navigation bar to compact buttons. To do this, the Always Expand option #1 must be deactivated. This way, the navigation is only displayed when needed, providing more space for the actual workspace.

To organize the system with connections to many measuring points and to operate the system in a stable fashion, the software lists all messages centrally and clearly via #1.

If the exclamation mark turns red, new system messages (such as connection interruptions or messages about the battery status of the measuring devices) have occurred. If the exclamation mark is green, all messages have been acknowledged (#3) or no new critical messages have occurred.

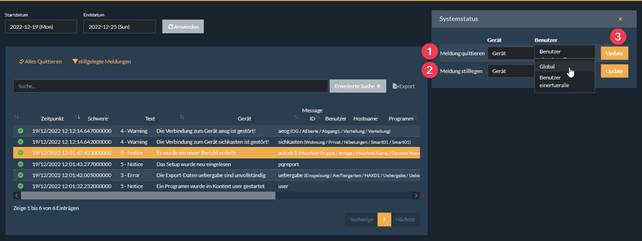

All messages can be acknowledged as a whole (#3) or individually by clicking on the message (#1), as shown in the screenshot below. An acknowledgement can apply per device or globally, and per user or for all users. To do this, select the option under device or user in the dropdown list and confirm via #3.

It is also possible to "silence" individual messages. This may be necessary, for example, when shutting down a connection for maintenance purposes. To do this, select function #2 "Silence Message". This function can also be set per device or globally for the message type, and per user or for all users. The "silenced messages" button allows you to view and reactivate the silenced messages. To do this, simply click on the icon in the respective row of the message to be reactivated.

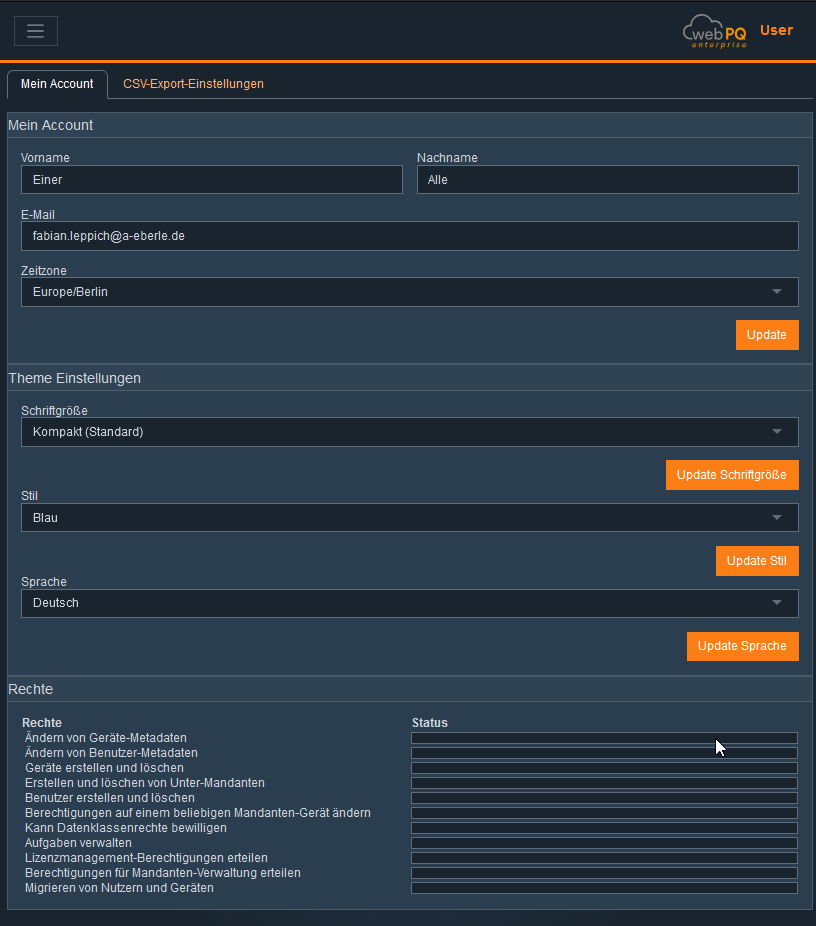

Each user can customize individual settings such as language, theme, and password directly in their user profile.

To open the settings, click on your username at the top right:

In the Account Settings section, personal data such as name and email address are displayed and can be edited.

The timezone can also be configured here, which is important for the correct display of timestamps throughout the software.

Changes are applied immediately after clicking Save and apply to all logged-in devices.

Technical Notes:

Changes to email or password require re-authentication (JWT token is updated).

The timezone setting affects all time information in reports and notifications.

In the Theme Settings section, the appearance of the software can be customized:

Font size: Choose between three predefined sizes (Standard, Large, Extra Large)

Color scheme: Switch between Blue, Black, or Light theme

Language: Select the software language (e.g., German, English)

Technical Notes:

Theme and language settings are stored locally in the browser and server-side in the user profile.

Changes to color scheme and font size take effect immediately in the UI (without reloading).

The language setting controls all UI texts and system notifications.

The permissions assigned to the user are displayed here clearly and in read-only mode.

Typical permissions include Admin, Read, Write, Device Management, etc.

Managing and assigning user permissions is only possible in the separate administration area of the software.

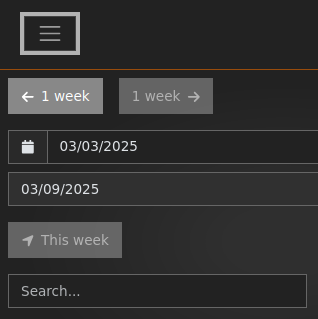

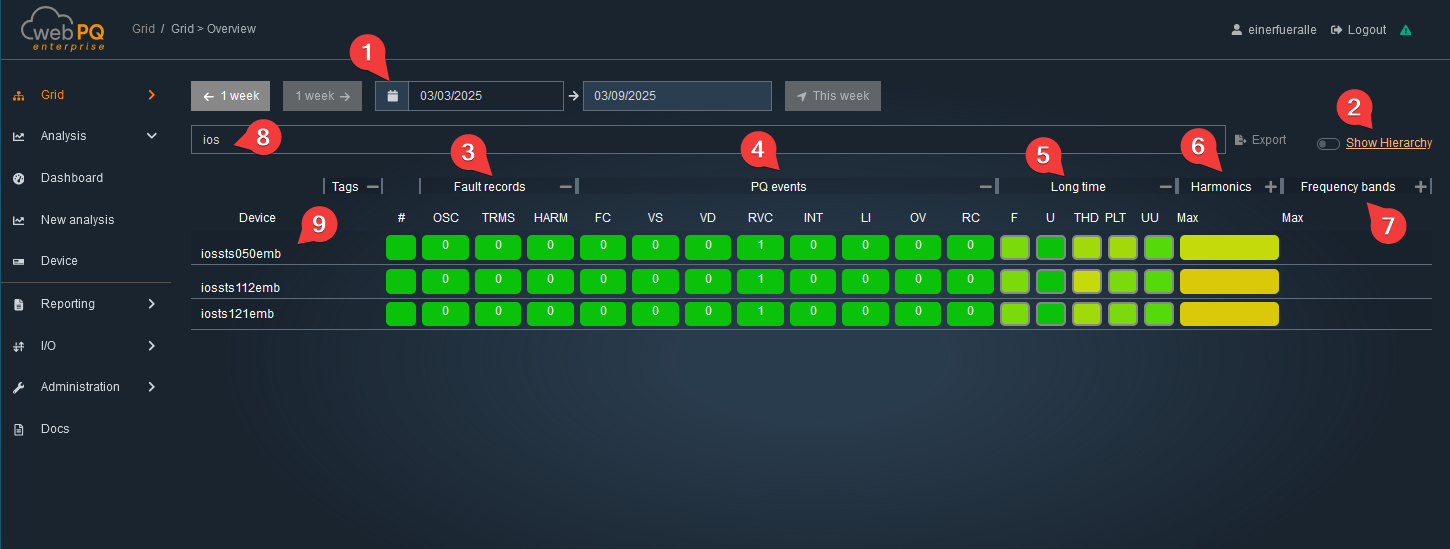

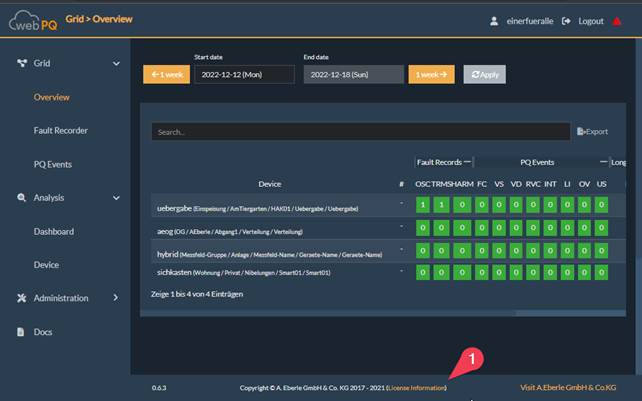

The Grid > Overview homepage of the software provides the user with a quick "Heatmap" overview of all devices connected to the WebPQ software. This overview spans a selected time period (#1), typically a calendar week. The landing page is updated automatically every 60 s so that the latest disturbances are always displayed. Using function #2, the interface can be sorted into custom hierarchy levels, allowing many measurement points to be displayed hierarchically according to the application. More information can be found under Hierarchy Settings.

The devices are sorted by default according to the number of disturbances in the #3 area. Disturbances are color-coded:

Red: Unacknowledged disturbances

Green: Acknowledged disturbances

Clicking on an OSC or TRMS record opens the Analysis Cockpit. Subsequently, the button color with the number of records changes from red to green.

In the PQ – Events #4 area, the number of power quality events is listed based on the power quality standard stored and set in the measuring device, e.g., EN50160 or IEC61000-2-4.

Clicking on the -/+ allows the individual areas to be expanded or collapsed. In the collapsed state, the maximum values of the subordinate measurements are always used for the display.
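The rule for collapsed areas can be illustrated with a small Python sketch. It is a model of the described behaviour, not WebPQ code: each node of the hierarchy is represented as a dictionary (a hypothetical structure), and a collapsed node shows the maximum of all subordinate values.

```python
def collapsed_value(node: dict) -> float:
    """Return the value displayed for a node.

    Leaves show their own value; a collapsed group shows the
    maximum value found anywhere below it.
    """
    children = node.get("children", [])
    if not children:
        return node["value"]
    return max(collapsed_value(child) for child in children)


# Hypothetical hierarchy with made-up values.
region = {
    "name": "Region",
    "children": [
        {"name": "Device A", "value": 3},
        {
            "name": "Substation",
            "children": [
                {"name": "Device B", "value": 7},
                {"name": "Device C", "value": 1},
            ],
        },
    ],
}
```

For this made-up example, the collapsed "Region" node would display 7, because that is the highest value among its subordinate measurements.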

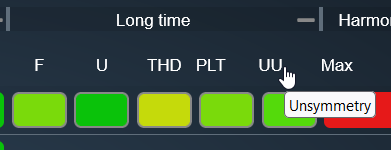

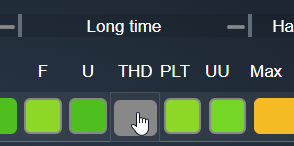

In the Long-term Data #5 area, the statistical data is calculated according to the standard stored in the measuring device. These include:

Frequency [F]

Voltages [U]

THD (Total Harmonic Distortion)

Flicker [PLT]

Voltage Unbalance [UU]

When the mouse pointer hovers over an abbreviation, an explanation is displayed via a tooltip.

Clicking on the designation, e.g., PLT, sorts the display either from the worst to the best measurement point or vice versa. This function facilitates the identification of the most noticeable measurement points for the respective measurement value.

Clicking on the respective measurement values – e.g., THD – opens the Analysis Cockpit. This allows the abstract representation of the measurement values to be converted into a temporal representation for detailed analyses.

In the Harmonics #6 area, all harmonics from H2 (100 Hz) to the 50th harmonic (2500 Hz) as well as the Supraharmonics #7 are listed in relation to the respective limit value. The color scale in the statistical measurements shows the proximity to the limit value:

Green: Values well below the limit

Yellow/Orange: Values approaching the limit

Red: Values exceeding the limit
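The frequencies quoted for the harmonics (H2 at 100 Hz, H50 at 2500 Hz) follow from multiplying the harmonic order by a 50 Hz fundamental. The following Python sketch shows this arithmetic; the function name is chosen for illustration.

```python
def harmonic_frequency(order: int, fundamental_hz: float = 50.0) -> float:
    """Return the frequency of the harmonic of the given order."""
    if order < 1:
        raise ValueError("harmonic order must be 1 or greater")
    return order * fundamental_hz
```

With the default fundamental, `harmonic_frequency(2)` gives 100.0 and `harmonic_frequency(50)` gives 2500.0. In a 60 Hz grid, the same orders correspond to 120 Hz and 3000 Hz.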

To quickly find a desired measurement point, the name of a measuring device can be entered in the Search Bar #8 area. The display is then interactively filtered.

Clicking on a device name #9 switches the application from Grid >> Overview to the Analysis >> Device page.

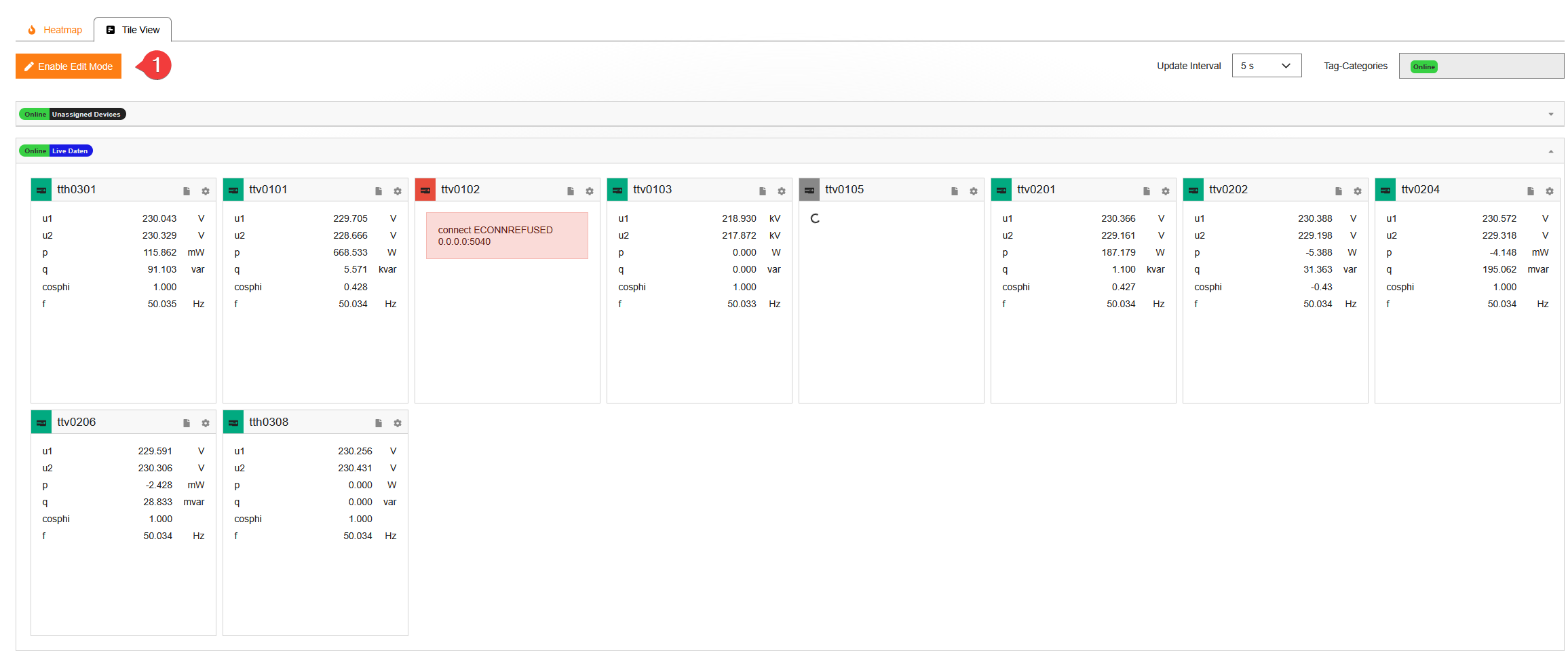

In the second area Grid Overview – Tile View, all devices with their essential live values are displayed within the configured hierarchy levels.

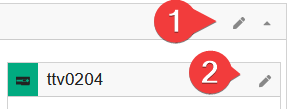

With Enable Edit Mode #1 the tile arrangement can be changed via drag & drop and devices or groups can be assigned to other hierarchy levels. After Save the adjustments are stored persistently and loaded on next access. Changes apply system-wide for all users and can be centrally managed by the administrator.

Via Change Default Displayed Measurements you can define which live data appear in the tiles. All real-time values provided by the measuring device are available. Settings can be made

globally (all devices),

per group,

or per device (#3) in edit mode.

Usage:

Displayed Data for the Group: Applies to all devices in the group.

Displayed Data for the Device #3: Individual device configuration.

The refresh interval of the live data is configurable. The hierarchy order can be adjusted using drag & drop in the upper right corner.

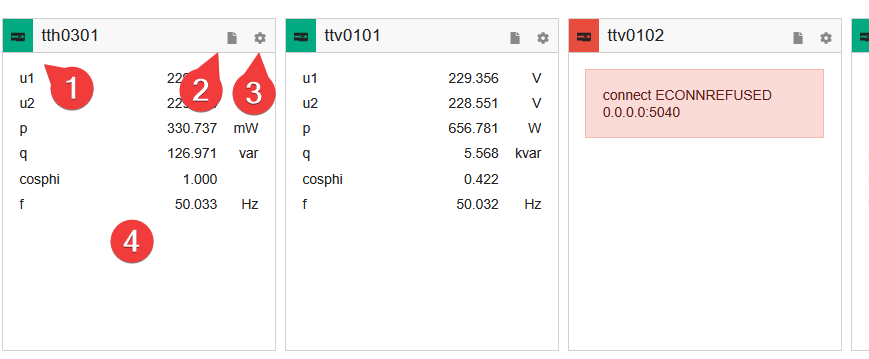

A tile contains the following elements in live operation:

#1 Connection status: Red = no connection, Green = connection active

#2 Link to the Power Quality report (e.g., EN50160, IEC61000-2-4)

#3 Link to device parameterization

#4 Display of the configured live data

Map View allows users to see devices on a map and combine the geographical view with threshold utilization, fault records, and PQ events for the selected time range. It is intended for quickly locating affected areas and then jumping to the relevant analysis or device settings.

Prerequisites

Maps zip package provided by Application Support

Access to the WebPQ backend running on the WebPQ server

A folder on the WebPQ server where map data will be stored. By default, C:\ProgramData\aeberle\webpq\maps is preconfigured. The next section explains how to change this path.

Configure the path in the WebPQ backend

Open the WebPQ backend.

Scroll to Paths.

Set the Maps Storage Folder field to the desired folder.

Save the change (Update) so the setting is applied.

Place the maps package in the maps folder

Extract the zip file to the configured maps folder.

Two files must now exist in the root of the maps folder: map.mbtiles, which contains the actual map data, and map-info.md, which contains information about the region and detail level of the maps package. This information helps when downloading a newer package later.

Restart the WebPQ Server service after a new package has been extracted or replaced so the tiles are loaded again.

Verification

In WebPQ, navigate to Grid > Overview > Map View.

If no map package is loaded yet, a message box explains the required setup steps.

The documentation link in the upper right corner of Map View opens this documentation section.

Troubleshooting

No map loads: verify the maps folder path, folder permissions, and restart the WebPQ Server service.

Map does not render: ensure the zip file was extracted and map.mbtiles is present in the folder root.

No device pins: confirm latitude/longitude are configured for the device (see Device Settings).

A device appears in tables but cannot be focused on the map: the device does not yet have valid geodata. Open Device Settings and update the location.
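The file check from the setup and troubleshooting steps can also be scripted. The following Python sketch is not part of WebPQ; it only tests whether the two files named above exist in the root of a given folder. The function name is hypothetical, and the folder path must be adapted if the Maps Storage Folder was changed.

```python
from pathlib import Path

# File names taken from the setup description above.
REQUIRED_FILES = ("map.mbtiles", "map-info.md")


def missing_map_files(maps_folder: str) -> list:
    """Return the required file names that are absent from the folder root."""
    folder = Path(maps_folder)
    return [name for name in REQUIRED_FILES if not (folder / name).is_file()]
```

For the preconfigured folder, the call would be `missing_map_files(r"C:\ProgramData\aeberle\webpq\maps")`. An empty list means both files are in place; the service restart described above is still required after extracting a package.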

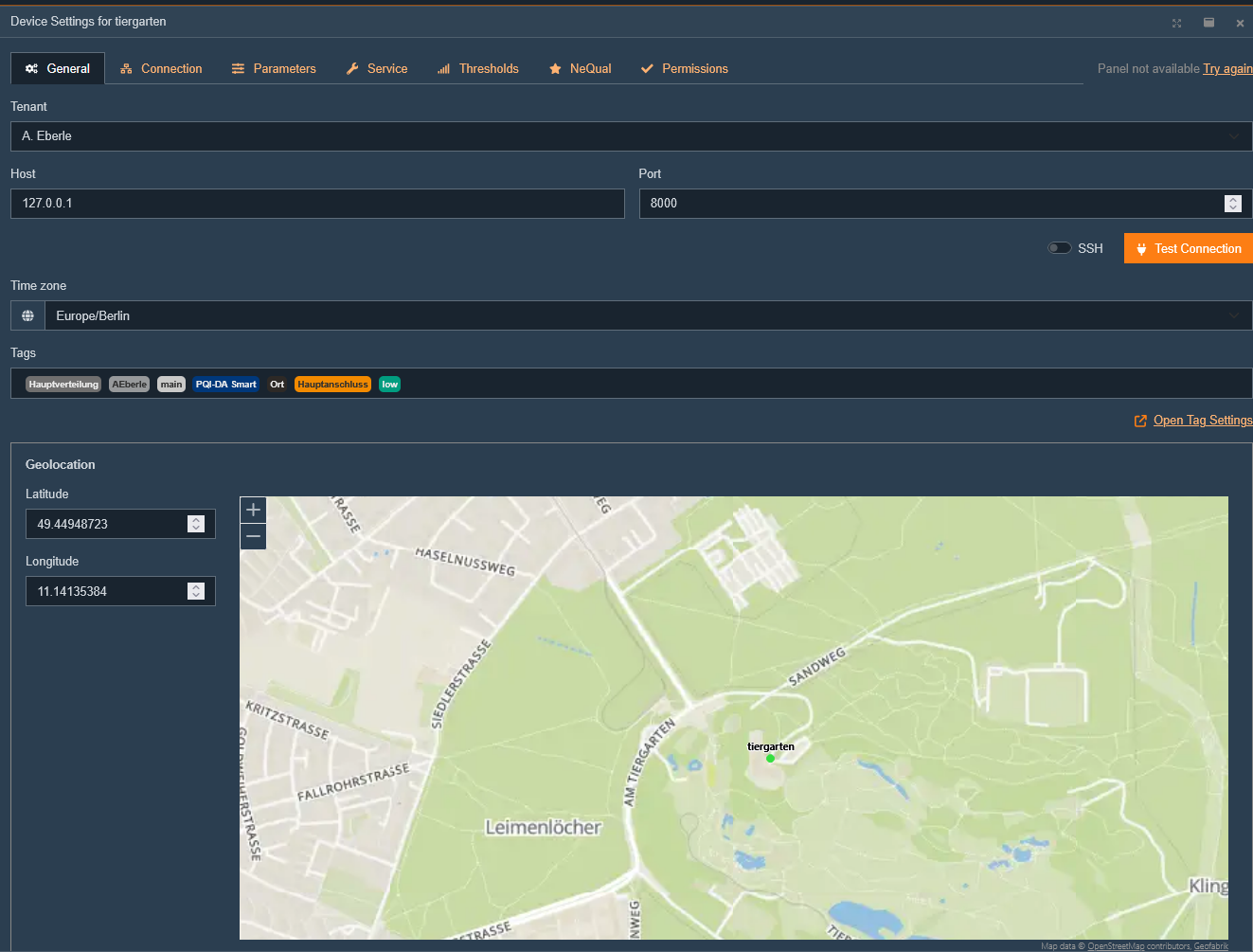

To display a device in Map View, the device geocoordinates must be configured first. Open Device Settings and select a device. The device position can be configured in the General tab. Enter latitude and longitude, or set the coordinates by clicking on the map.

Map View is divided into three areas:

View settings

The map with tooltip

Information panels

View settings

Contains the following UI elements:

Show All resets the map to the initial overview of all devices with geodata.

Time interval filters threshold utilization, fault records, and PQ events. The default range is the last 7 days.

Show only visible devices limits the bottom panels to the devices currently visible in the map viewport. The setting is stored and restored when Map View is opened again.

Show Hierarchy controls whether hierarchy tags are shown in tooltips and bottom-panel tables. This setting is shared with the overview-page hierarchy display.

The documentation link in the upper right corner opens the corresponding customer-documentation anchor for Map View.

Map

Initially, Map View shows all devices that have a configured geolocation. Multiple nearby devices are shown as a cluster with the number of devices. Clicking a cluster zooms in until individual devices are visible.

Marker colors can reflect the currently calculated threshold utilization, which helps identify critical areas immediately on the map.

Each device is shown with a pin and the device name. Clicking a pin opens a tooltip with information about the device:

Device name and links to the PQI app, reporting page, and device parameterization

Hierarchy tags, if Show Hierarchy is enabled

Last synchronized data point and how long ago the last data import occurred

Address configured for the device

Threshold utilization as a table overview

Selecting a device from one of the bottom-panel tables centers the map on that device and opens the tooltip. Devices without configured geodata remain visible in the tables, but they cannot be focused on the map until a location is configured.

Information Panels

At the bottom of Map View there is a panel with four columns. Above each column there is a heading with relevant links to other areas of the application.

Clicking any device name centers the map on that device and opens the tooltip. Each information panel column can be expanded or collapsed using the small arrow button in the column header. Collapsed columns appear as narrow tabs on the left side. Column width can be adjusted by dragging the separators. The overall panel height can also be resized. Column widths, collapsed states, panel height, and the Show only visible devices setting are saved and restored when Map View is opened again.

Devices:

Shows the currently relevant devices together with the configured address. Depending on the Show only visible devices toggle, this is either the current map viewport or the complete device set. The table supports sorting and filtering by device name and hierarchy tags.

Fault Records:

Shows recorded fault records for the selected time range and the currently relevant devices. The heading contains a direct link to the Fault Records page.

The table shows the time, device, type, and trigger-related information. Clicking a fault-recording type opens the detail view directly in the panel.

Thresholds and utilization:

The header contains a shortcut to the corresponding cockpit analysis for the selected time range.

Utilization of the user-defined thresholds of the devices.

Users see the maximum utilization of the thresholds defined for each device. Clicking a utilization percentage opens the Analysis Cockpit with the corresponding threshold data. Clicking a threshold value opens the related threshold settings, if a reporting setting is available.

The application can assign a threshold to each measured value and display the utilization here as a maximum value. More information can be found in the chapter "customer-specific thresholds".
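The idea of a maximum threshold utilization can be sketched in Python. This is an assumption-based illustration: it treats utilization as the measured value divided by an upper threshold, expressed in percent. How WebPQ calculates utilization internally, in particular for lower limits, is not described here, and all names and values are hypothetical.

```python
def utilization_percent(measured: float, threshold: float) -> float:
    """Utilization of an upper threshold in percent (assumed definition)."""
    if threshold <= 0:
        raise ValueError("threshold must be positive")
    return measured / threshold * 100.0


def max_utilization(samples: list, threshold: float) -> float:
    """Highest utilization over all samples of one measured value."""
    return max(utilization_percent(value, threshold) for value in samples)
```

Under this assumed definition, made-up THD samples of 2.0, 6.0, and 4.0 against a threshold of 8.0 would give a maximum utilization of 75 %.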

PQ Events:

Lists PQ events for the selected time range and the currently relevant devices. The heading contains links to the PQ Events over Time analysis and the PQ Events page.

The table shows the event time, device, linked OSC/TRMS recordings, event type, and the measured value. Sorting and filtering can be used to narrow down the list to specific event types or devices.

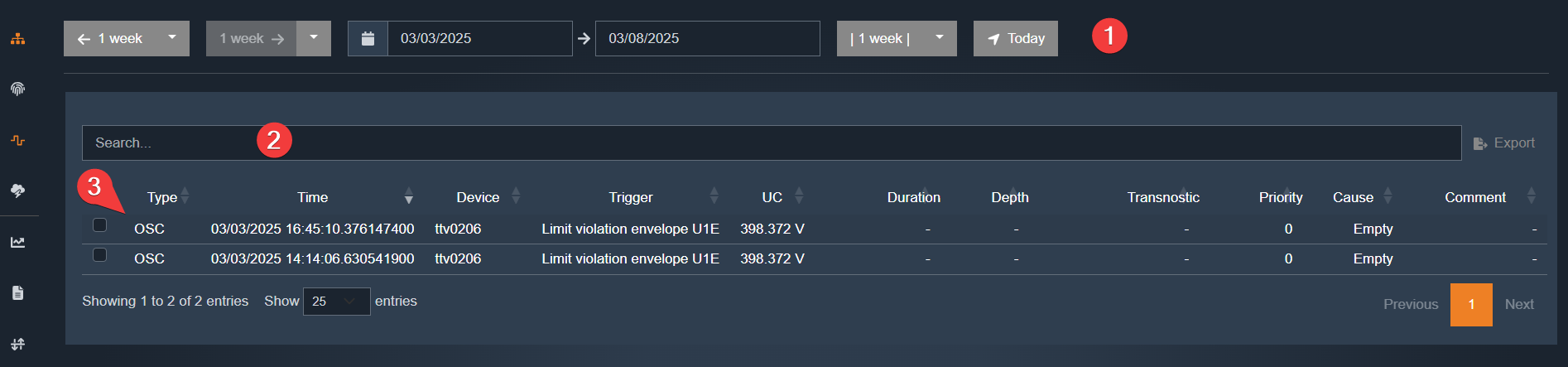

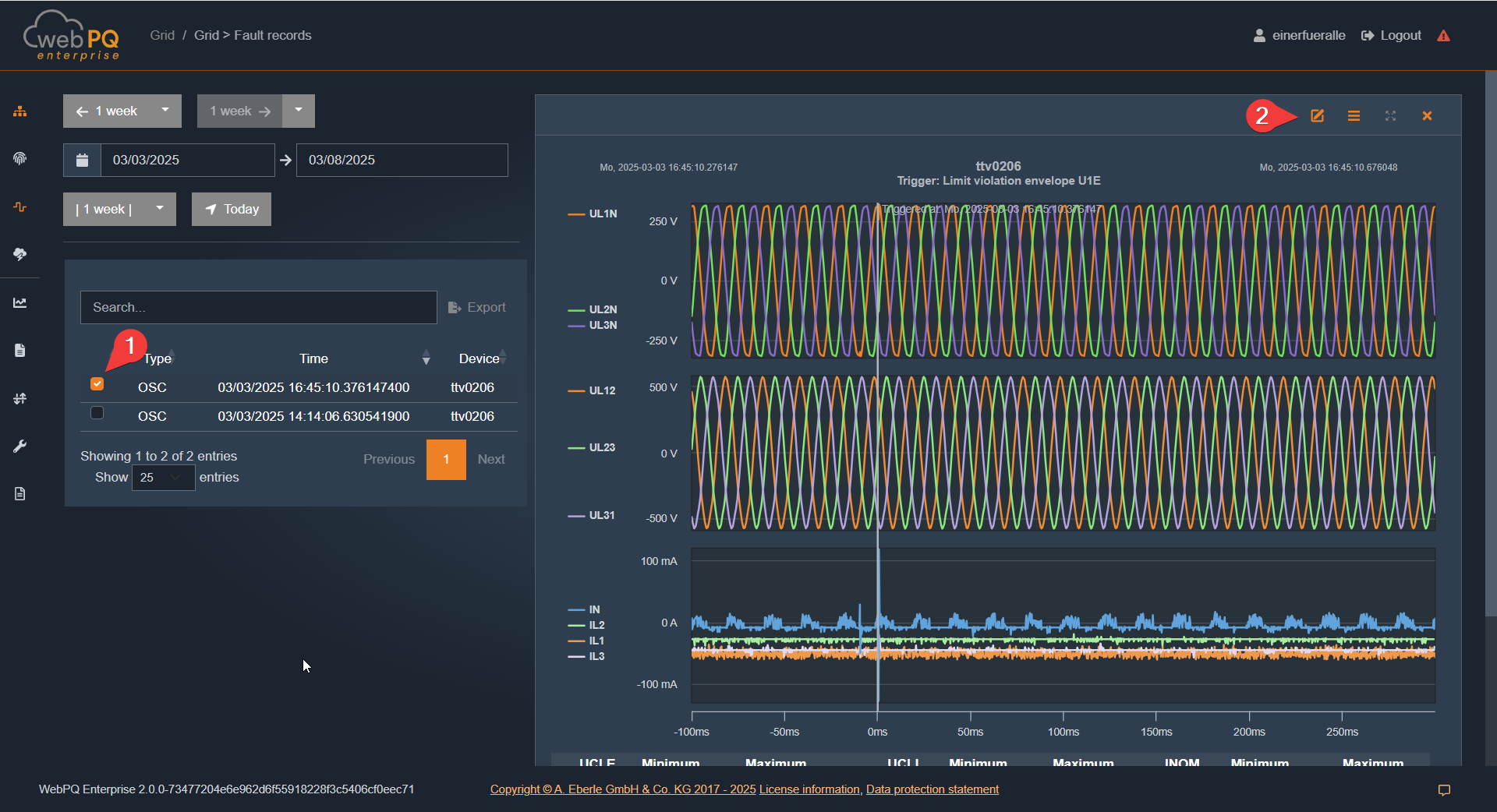

In the Grid > Fault Records area, all disturbances within a freely selectable time period #1 are displayed in a list.

The display offers the following functions:

Date-range jump buttons for quickly switching by week and jumping back to the current day

Hierarchy display for showing or hiding the configured device-tag hierarchy in the device column

Search function #2 for targeted search of disturbances

Device filtering via the device tree in the table header

Ability to open fault records via the OSC and TRMS #3 links in a Quick View

In the #4 area, the user can set individual filters. In the shown example, a filter on duration > 50 ms is set, displaying only disturbances in this range. The table can also be sorted by type, time, device, trigger, duration, and other available columns.
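The effect of such a filter combined with sorting can be illustrated in Python. The records below are made up, and the field names are hypothetical; the sketch only mirrors the example of a filter on duration > 50 ms and is not WebPQ code.

```python
# Made-up fault records for illustration.
records = [
    {"device": "Device A", "type": "OSC", "duration_ms": 20.0},
    {"device": "Device B", "type": "TRMS", "duration_ms": 120.0},
    {"device": "Device C", "type": "OSC", "duration_ms": 75.0},
]


def filter_by_min_duration(rows: list, min_ms: float) -> list:
    """Keep only records whose duration exceeds min_ms."""
    return [row for row in rows if row["duration_ms"] > min_ms]


def sort_by(rows: list, column: str, descending: bool = False) -> list:
    """Return the records sorted by the given column."""
    return sorted(rows, key=lambda row: row[column], reverse=descending)


long_faults = sort_by(filter_by_min_duration(records, 50.0), "duration_ms")
```

With the made-up data, `long_faults` contains the records of Device C and Device B in that order; the 20 ms record is filtered out.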

The device column supports both free-text search and selection through the device tree. If hierarchy display is enabled, the configured device tags are shown directly in the list so that disturbances can be grouped more easily by location or structure.

Clicking on OSC or TRMS #1 opens the Quick View window from the right.

For advanced analysis options, setting markers, or describing the disturbance, the Analysis Cockpit can be opened via #2.

Clicking a device entry opens the corresponding device page at the event time. This helps when the fault record needs to be checked together with device details or configuration.

To view two or more fault records in parallel, multiple disturbances can be selected via the selection function. The selected records can then be loaded together into the analysis view for direct comparison.

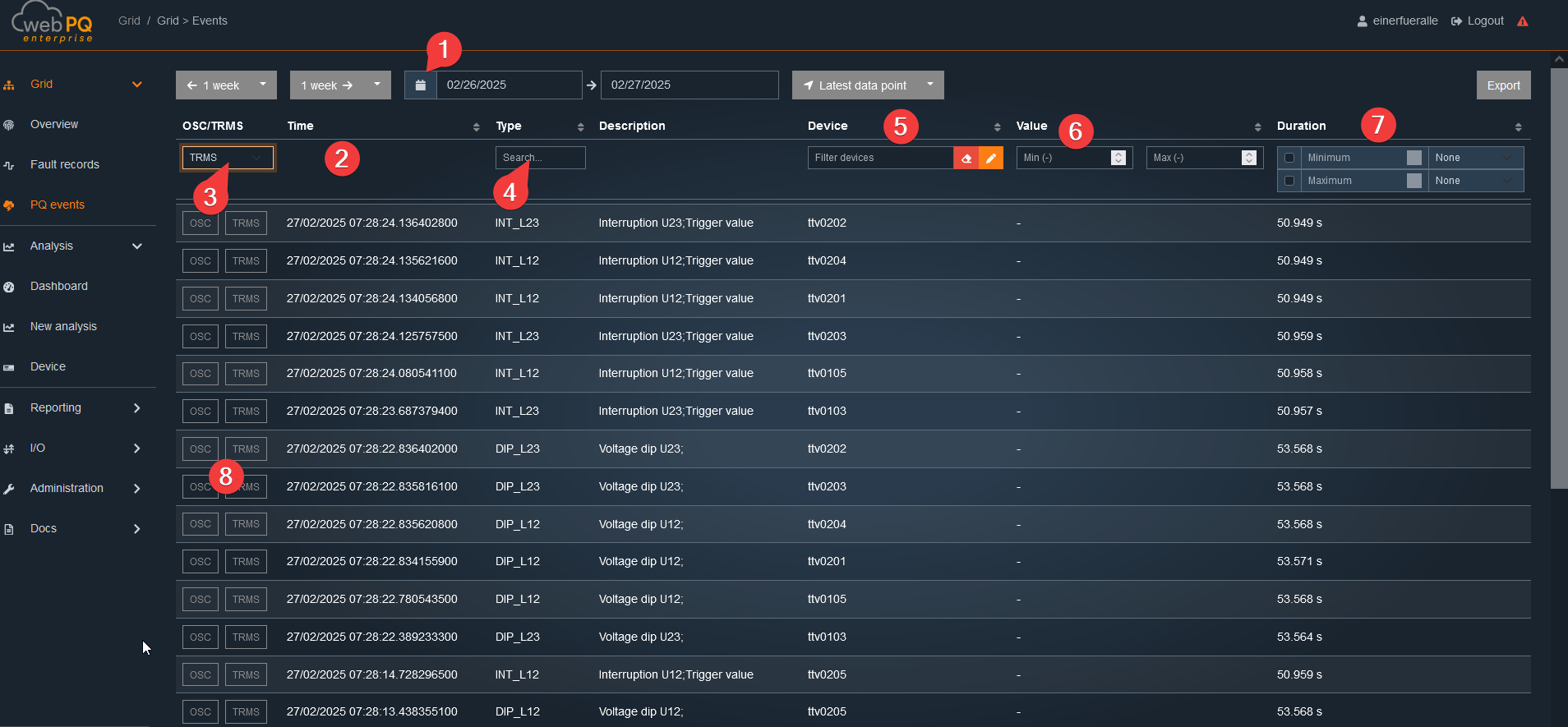

In the Grid > PQ Events area, all Power Quality Events (not disturbance records!) within a freely selectable time period #1 are displayed in a table.

The display offers several search and filter functions #2:

Filter by disturbance record (#3): Shows only PQ events where a disturbance record was triggered according to IEC

Filter by type (#4): Allows free text search for the type of event

Filter by device (#5): Convenient device narrowing via device selection

Filter by value range (#6): Setting an upper and lower limit value

Filter by duration (#7): Restriction to events of a certain duration

Above the table, the same date-range jump buttons as in the fault-record view are available. The table also provides a hierarchy toggle so that configured device tags can be shown or hidden in the device column.

The device filter only offers devices that actually have PQ events in the selected time range. This makes it easier to narrow large installations down to the relevant sources quickly.

Additionally, existing disturbance records can be opened directly in a Quick View via the OSC and TRMS #8 links.

The Description column is derived from the PQID metadata of the event type. This means the table shows the translated event description that belongs to the stored PQ event.

The current result set can be exported directly via the Export button above the table.

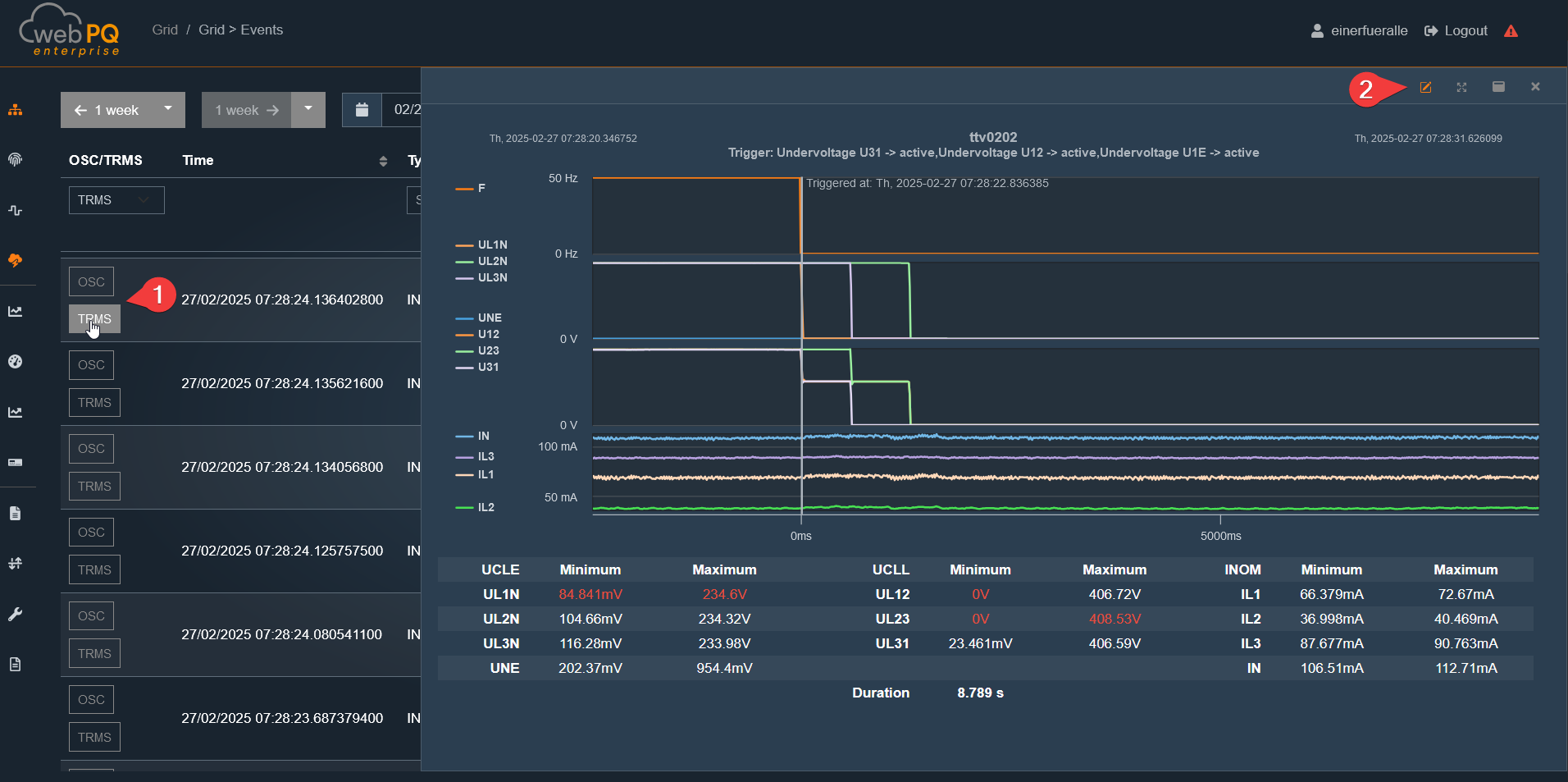

Clicking on OSC #1 opens the Quick View window from the right.

For advanced analysis options, setting markers, or describing the disturbance, the Analysis Cockpit can be opened via #2.

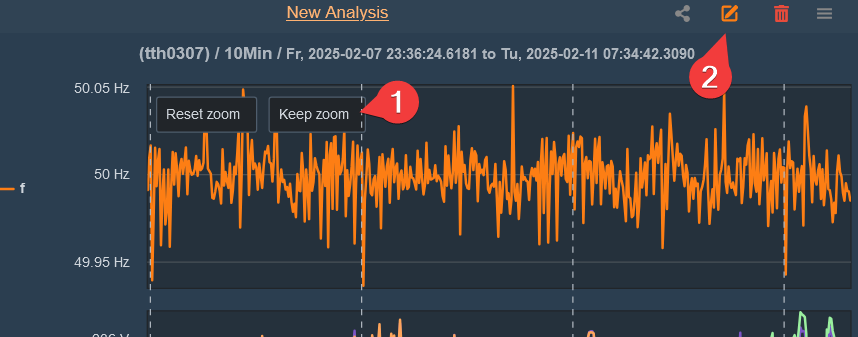

To zoom, use the left mouse button or, on a touch display, the standard two-finger zoom gesture.

Zoom In: Hold down the left mouse button and drag left or right.

You can save the zoomed area using the "Keep Zoom" function #1.

To reset the zoom to its original value, use "Reset Zoom".

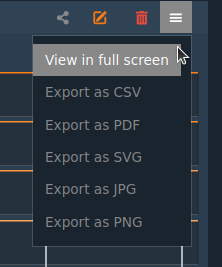

#2 View in Full Screen Mode: Expands the graphic to fill the entire monitor, which is especially useful for tablets or phones.

Export as CSV: Saves the measurement data as a CSV file.

Export as PDF: The disturbance record is saved as a PDF and can be downloaded from the server via (Import/Export >> Print) after creation.

Export as JPG / SVG or PNG: Exports the graphic in various image formats.

Export as Comtrade: Converts the disturbance record into a Comtrade file, which is also available on the server via (Import/Export >> Export).

Export as PQDIF: The disturbance record or measurement data (depending on the analysis) is saved as a PQDIF file and can also be provided on the server via (Import/Export >> Export).

Note:

Exports, including PDFs, are stored on the server. The storage location is defined in the server settings in the WebPQ backend and can only be changed by the administrator. See: Installation Paths

By clicking on a measurement #1, individual measurements can be selected or deselected.

Deselected measurements are automatically moved to the bottom.

Measurements are always displayed in order of their height on the Y-axis.

The Analysis Cockpit can be accessed and opened at any time via its button.

More information about the Analysis Cockpit can be found under Link

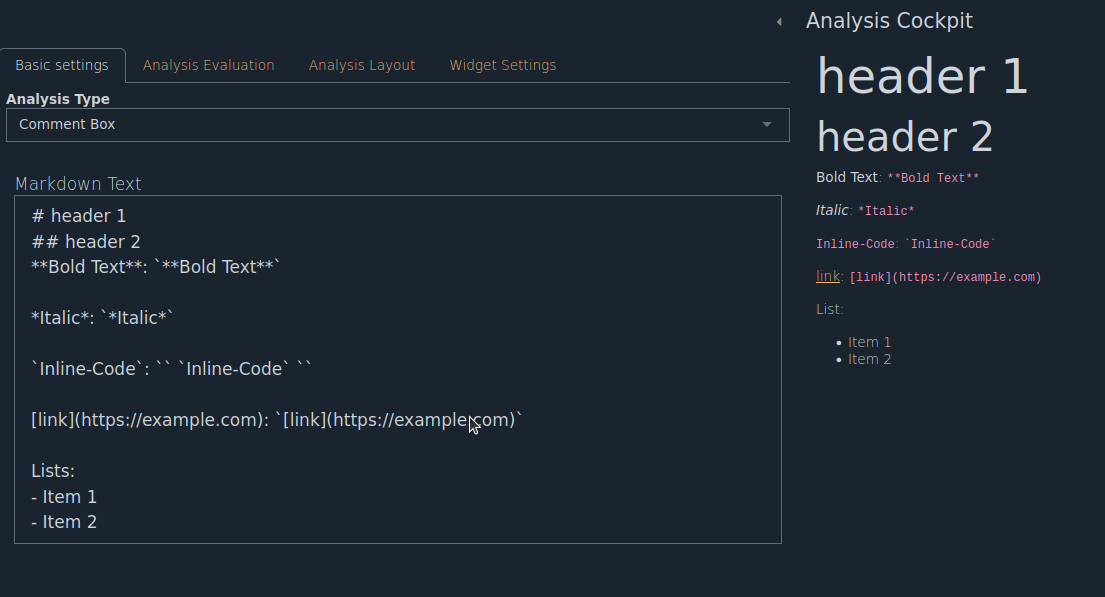

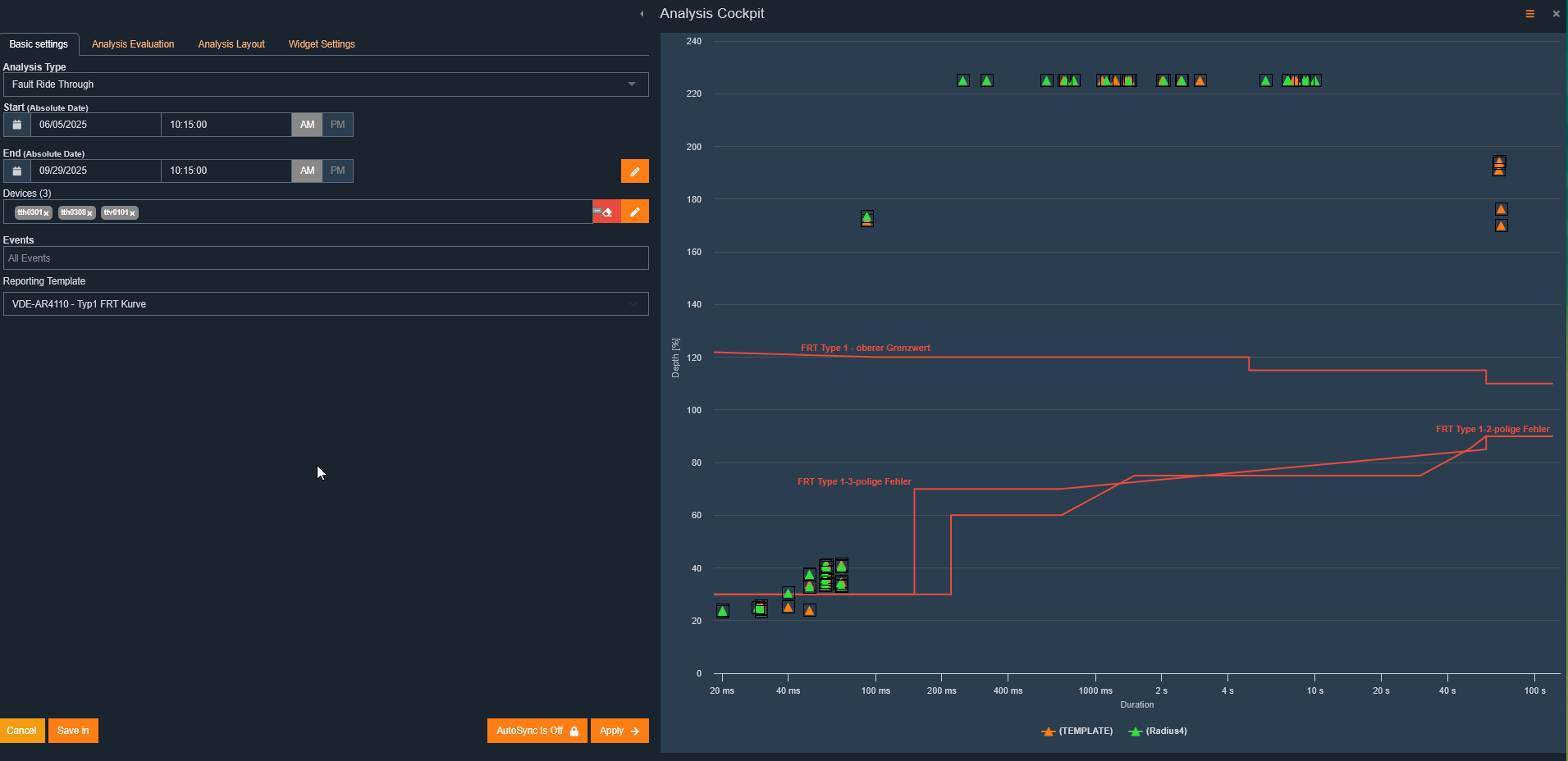

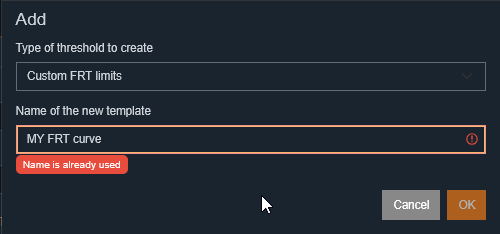

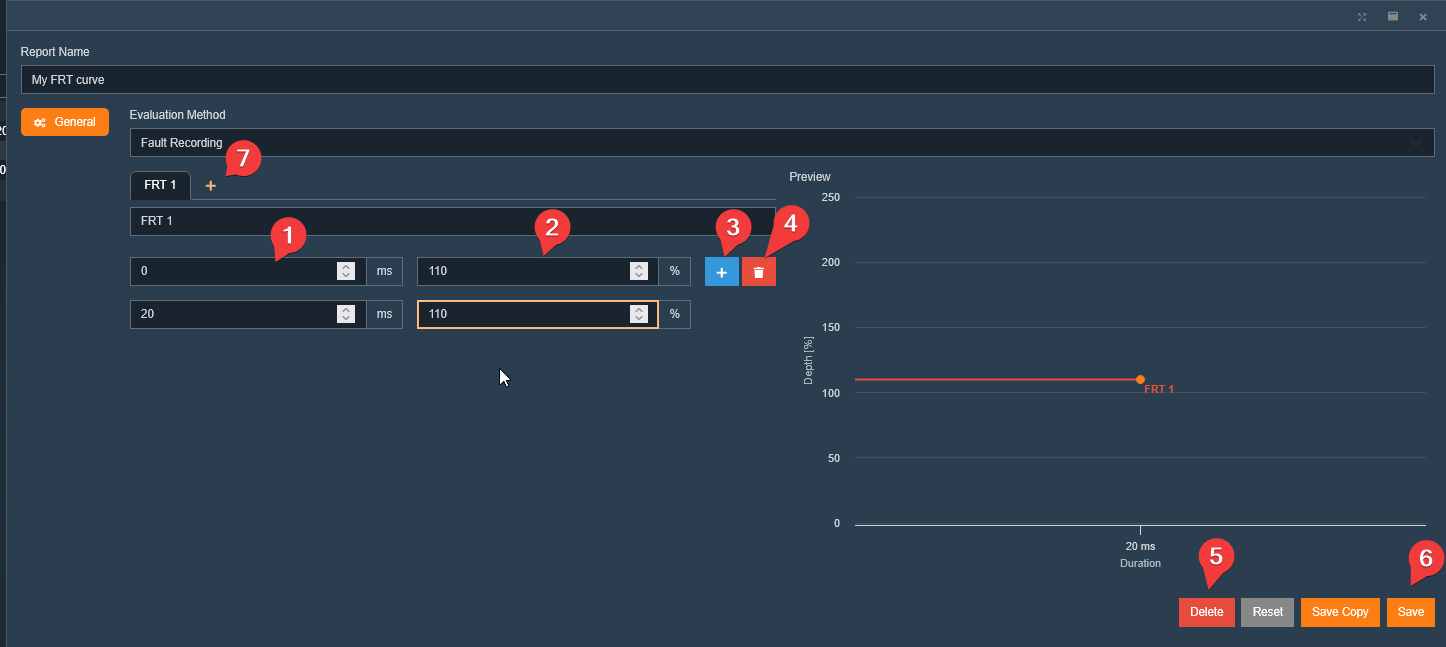

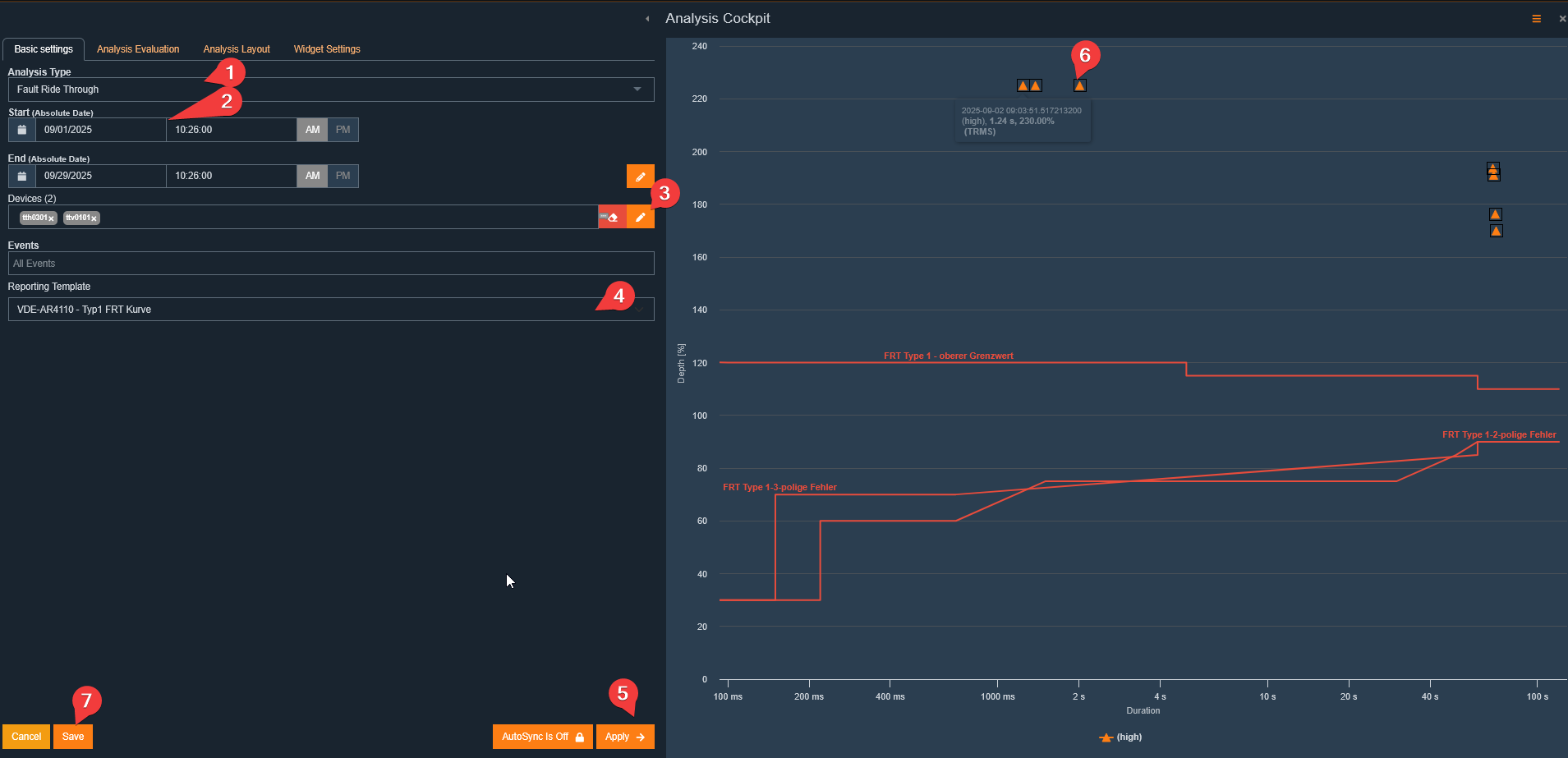

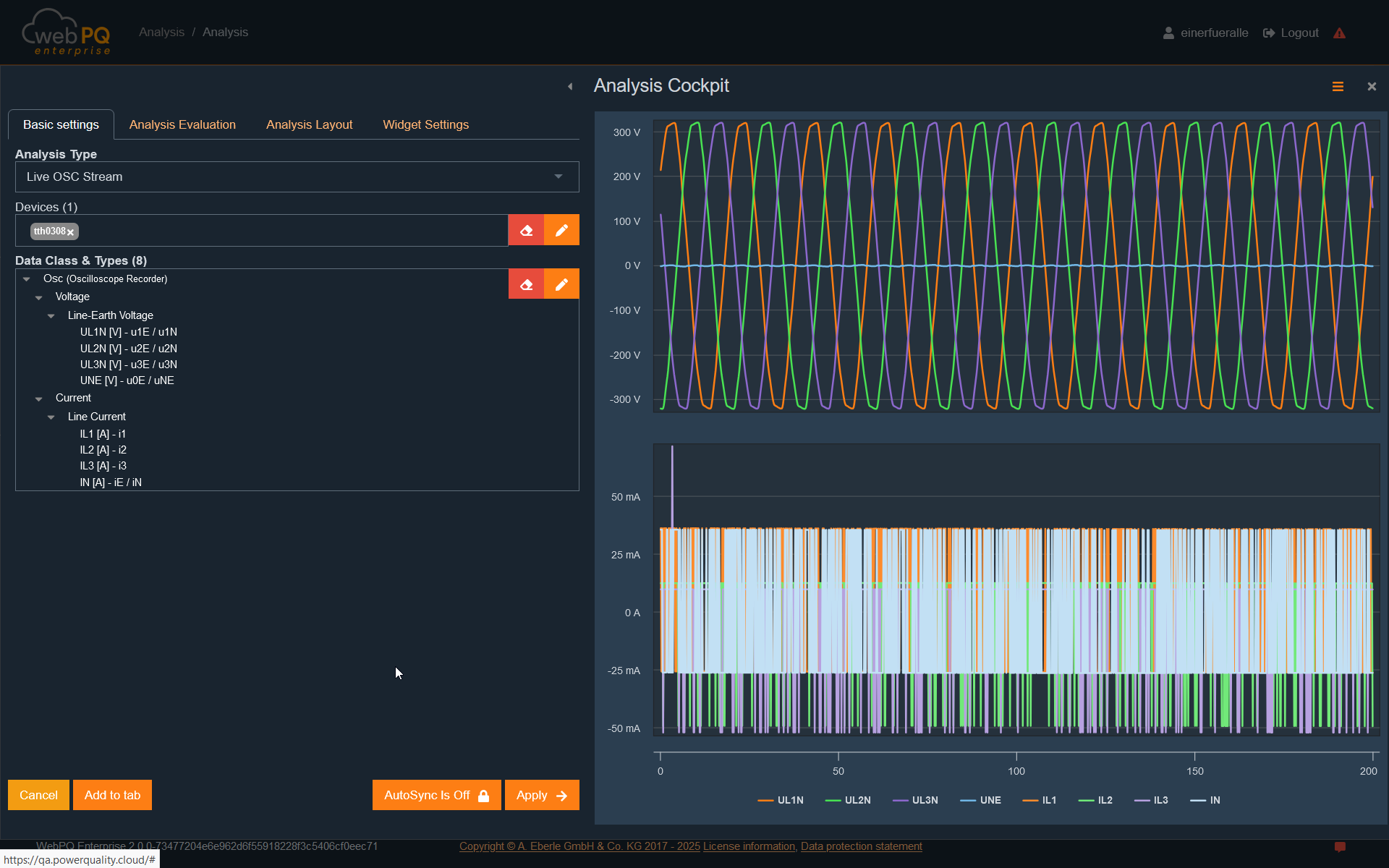

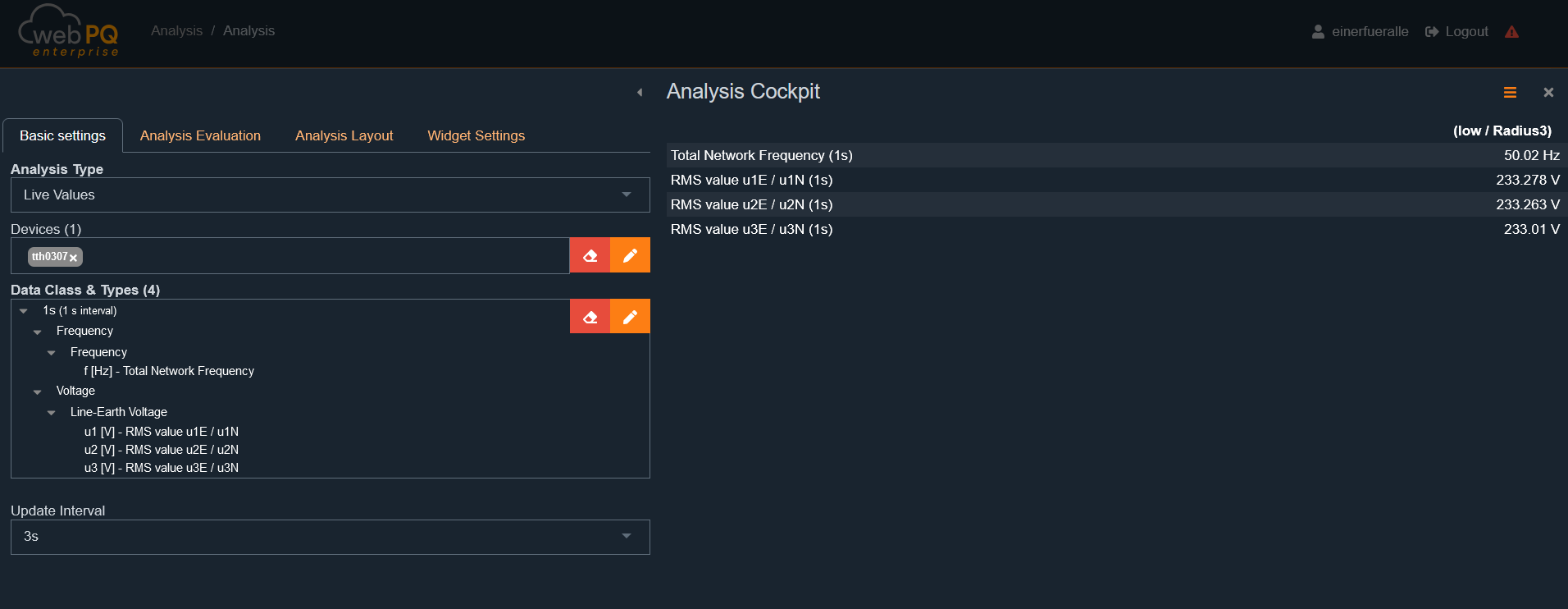

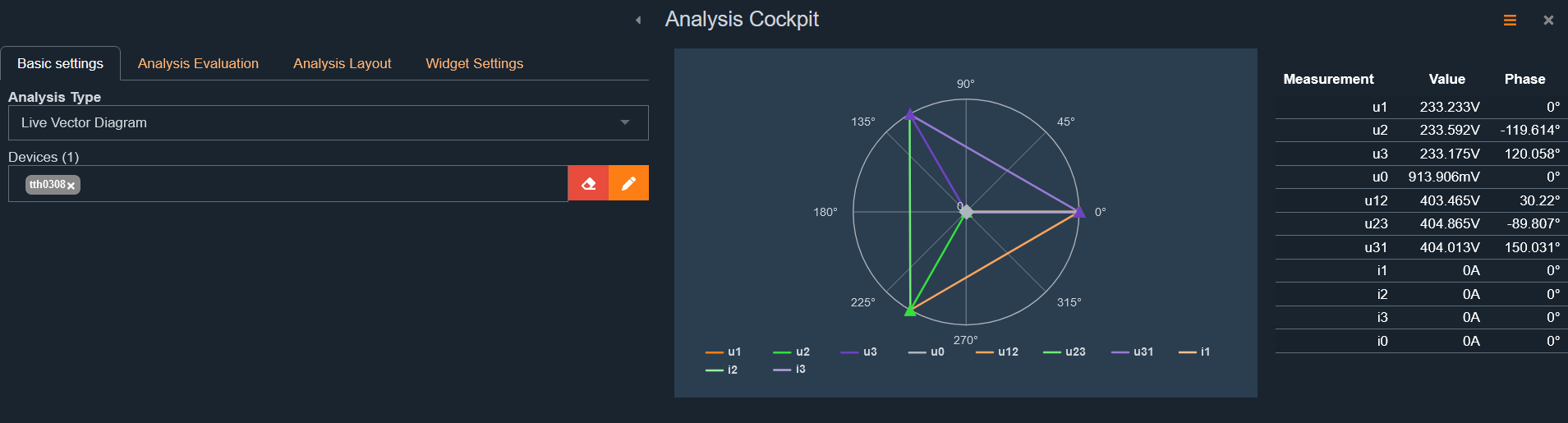

The Analysis Cockpit is the central workspace and analysis area, offering various types of analysis, including:

Level-time diagrams

Bar charts

Histograms

Pre-configured reports for creating custom dashboards and reports

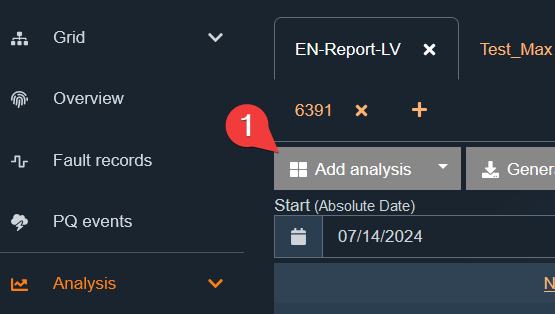

You can open the cockpit in several ways:

Via the main menu under "Perform Analysis"

Via the analysis dashboard by selecting "Add Analysis" #1

By clicking a measurement value in the statistics under "Grid > Overview"

Via the shortcut from any analysis

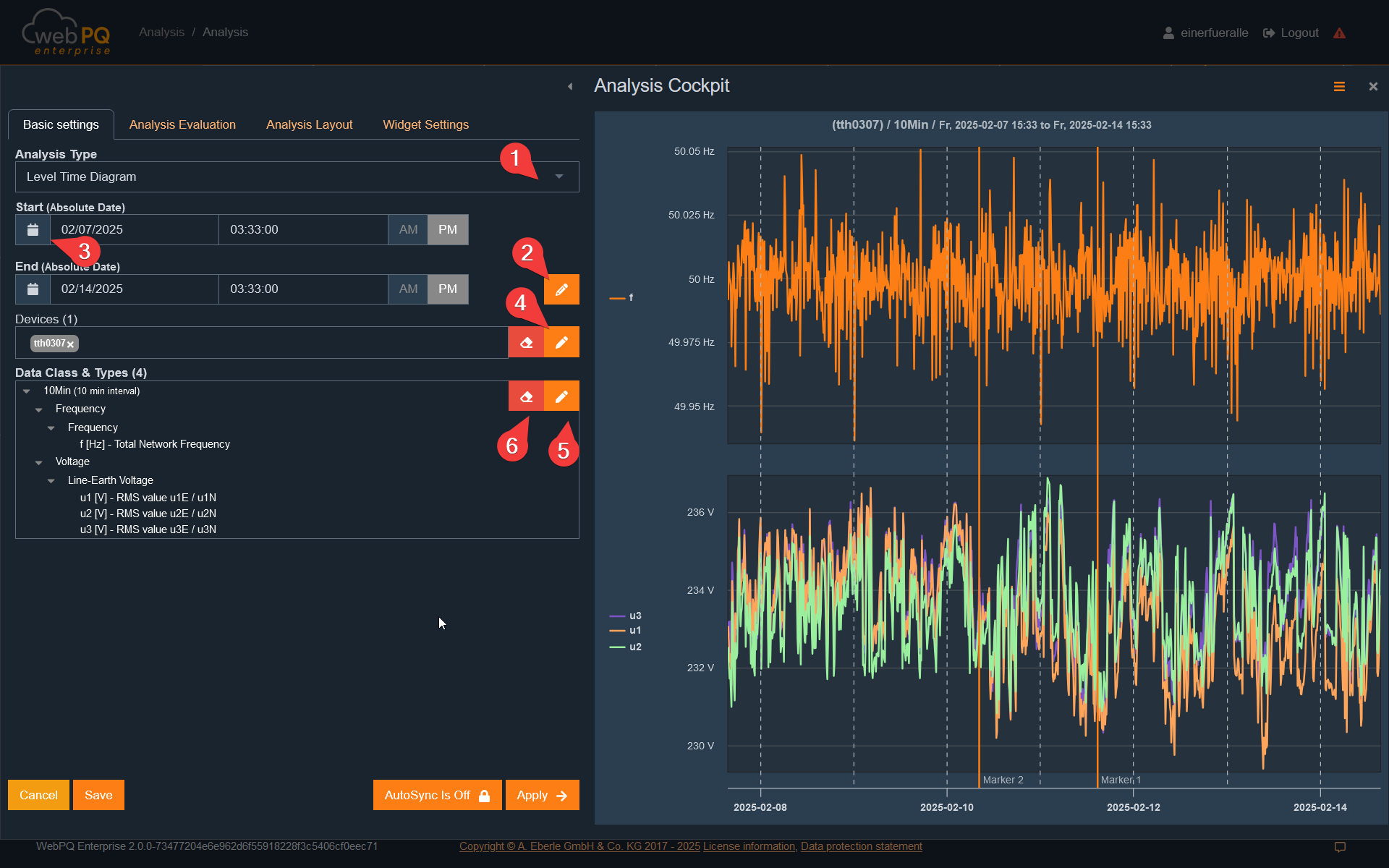

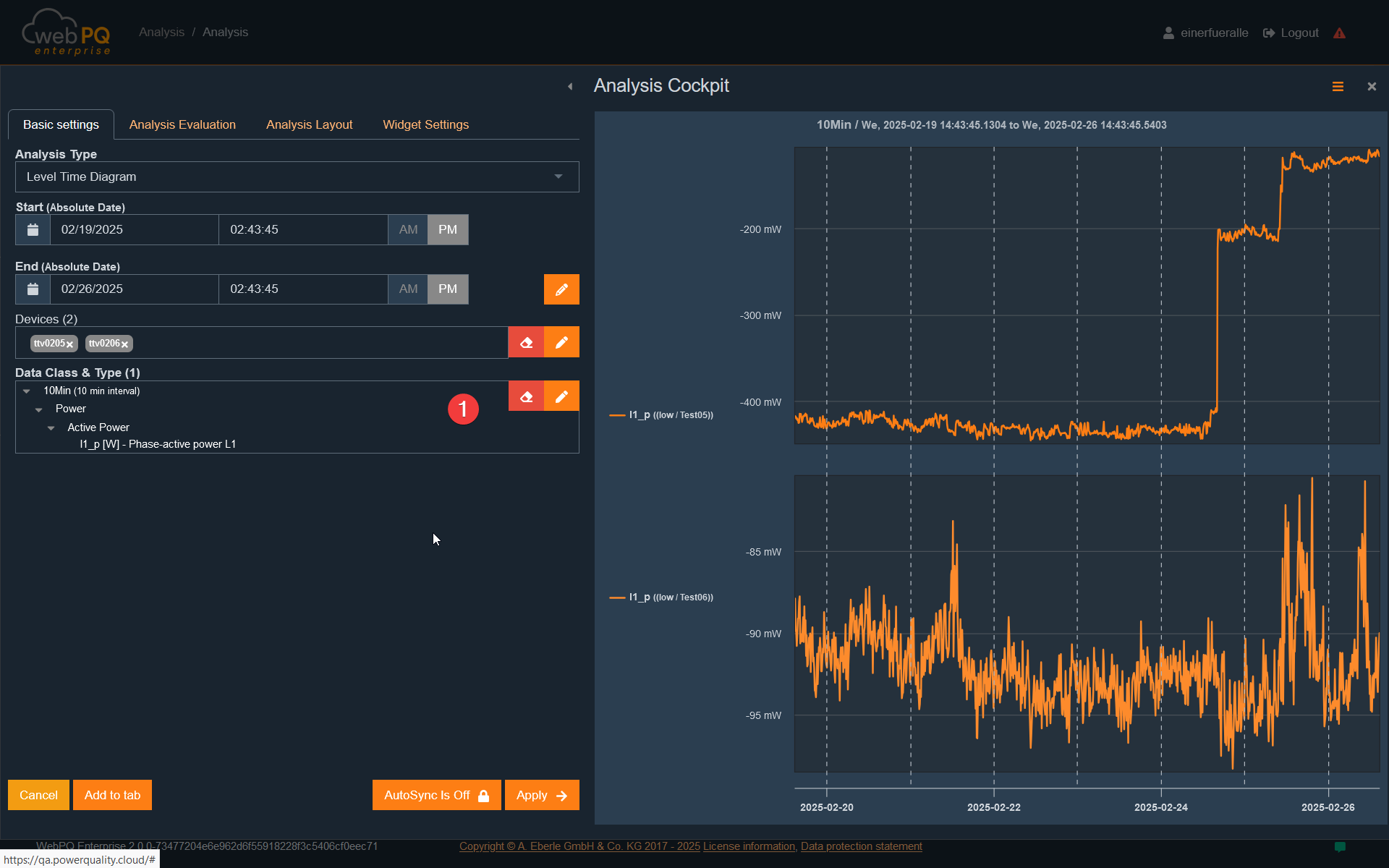

The Analysis Cockpit is divided into two sections:

Left section: Configure settings here

Right section: Visualise measurement data here

Key functions in the Analysis Cockpit:

Basic settings #1:

Select the analysis type

Define the device selection

Set the evaluation period

Choose the measurement variables

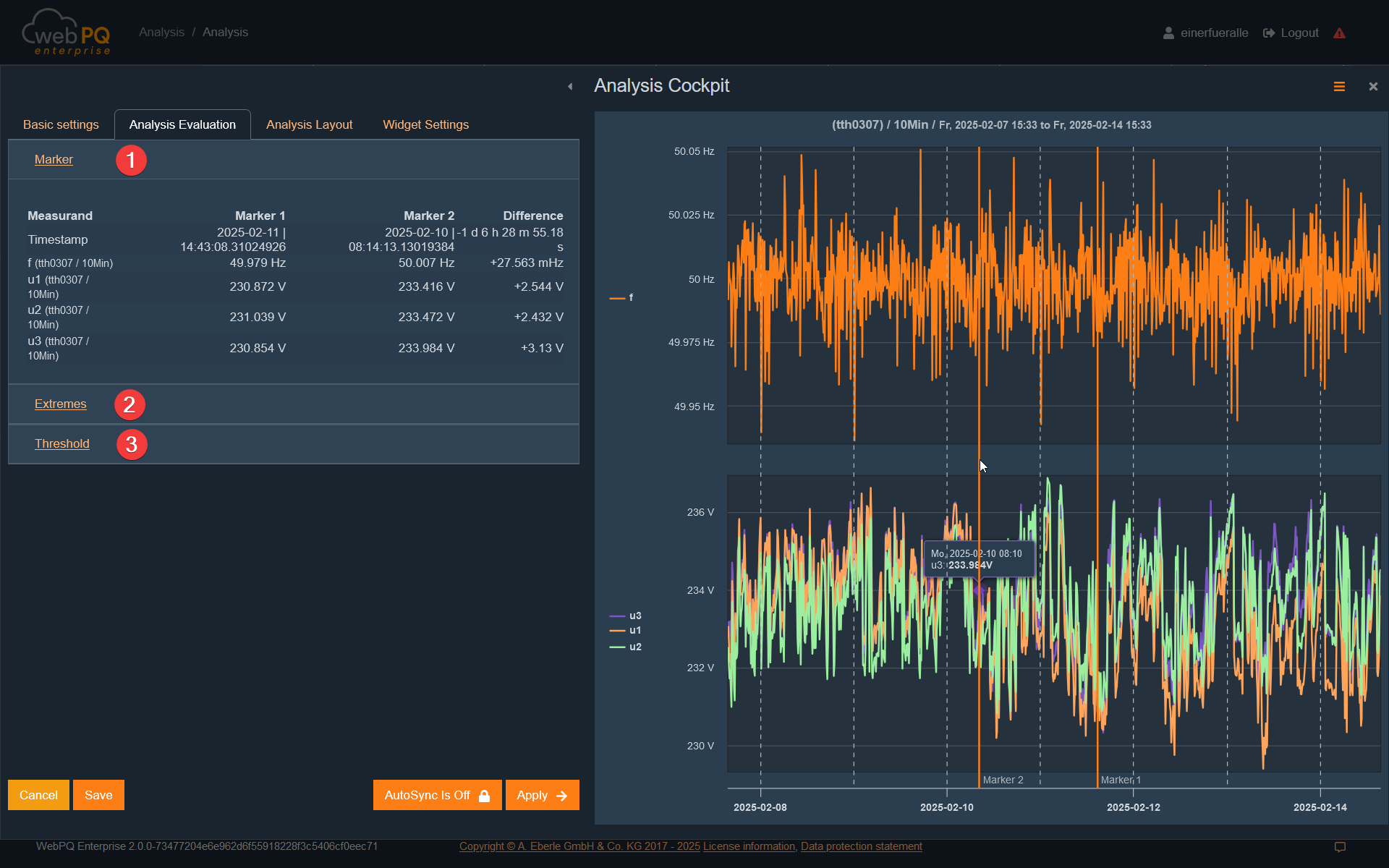

Analysis evaluation #2:

Set and display markers

Comment and prioritise disturbances

Display limit and extreme values

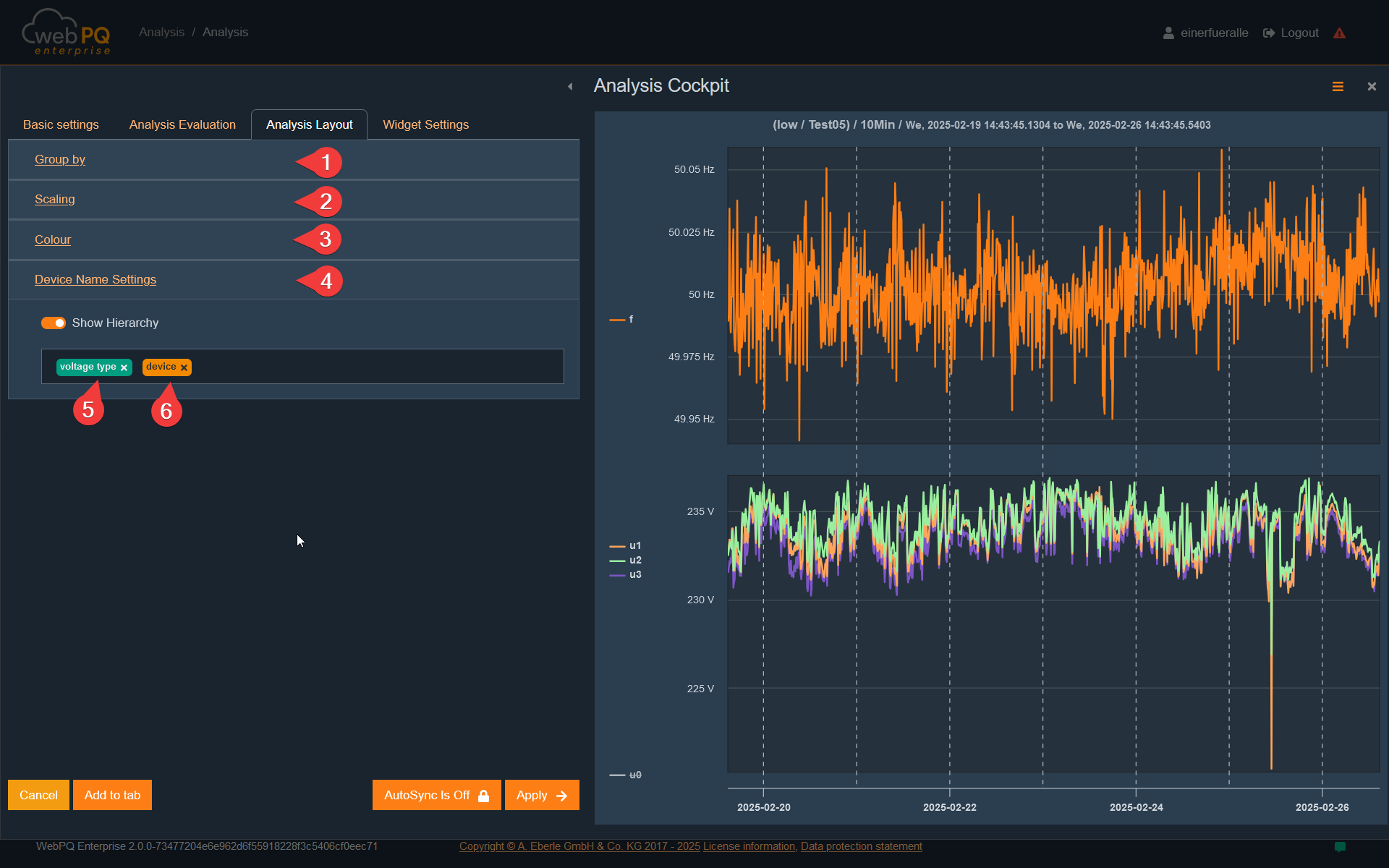

Analysis layout #3:

Group measurement variables

Scale the display

Select colours for measurement values

Widget settings #4:

Adjust the size and title of an analysis widget

Save the widget to the analysis dashboard

Save #5:

Permanently save the analysis in the analysis dashboard.

The dashboard is stored for the user in the database, allowing all created analyses to be recalled and restored.

Auto synchronisation #6:

When enabled, changes are immediately shown in the right section

Apply #7:

Applies the selected measurement variables and displays them in the right frame

Cancel #8:

Closes the Analysis Cockpit without saving data

Note:

The Analysis Cockpit settings are saved in the browser cache. This means data is retained even if the analysis is not saved in the dashboard.

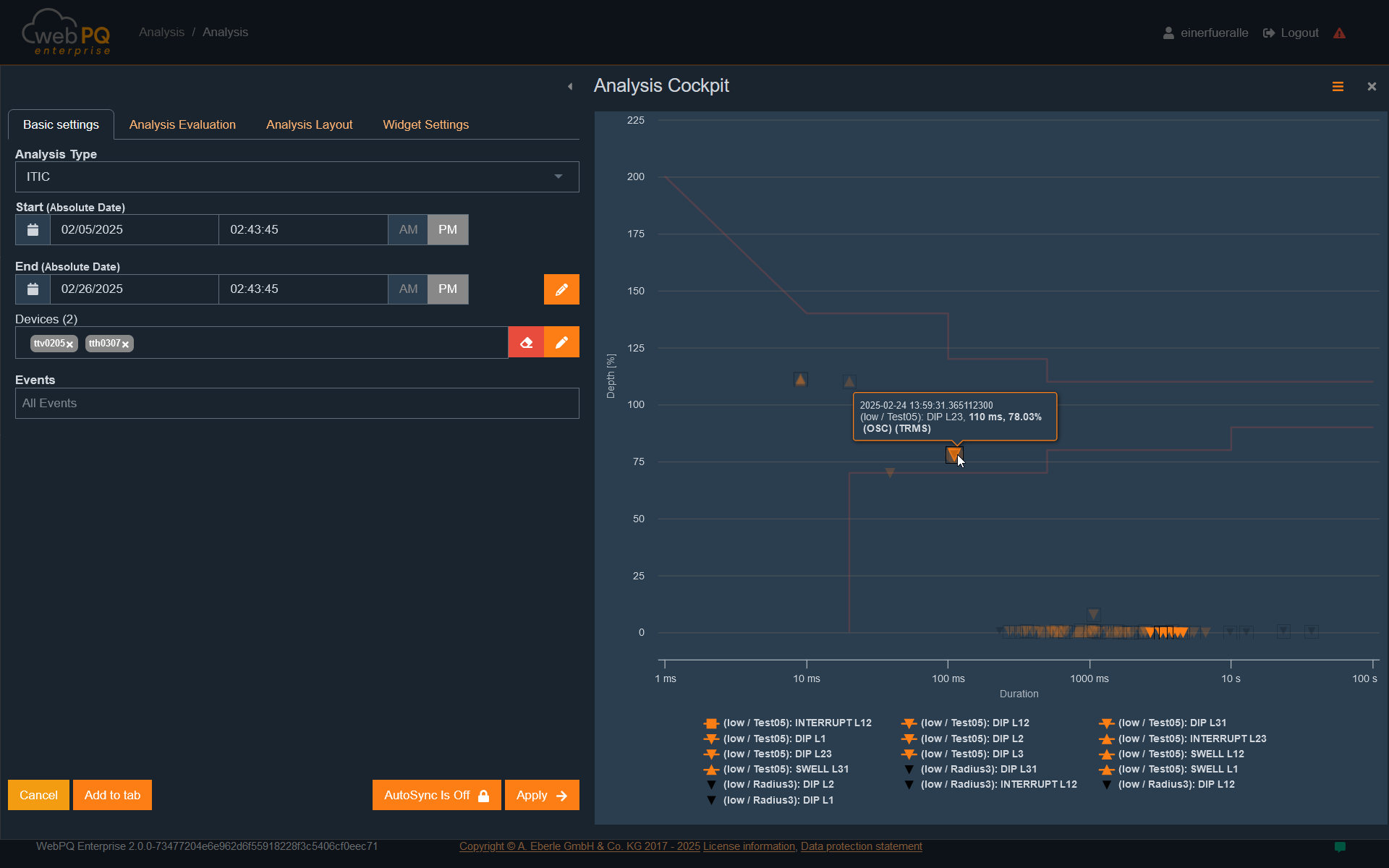

Under #1, select the desired analysis type. By default, the level-time diagram is pre-selected. Alternatively, other types such as histograms or, for power quality event analysis, the ITIC graph can be chosen as the evaluation type.

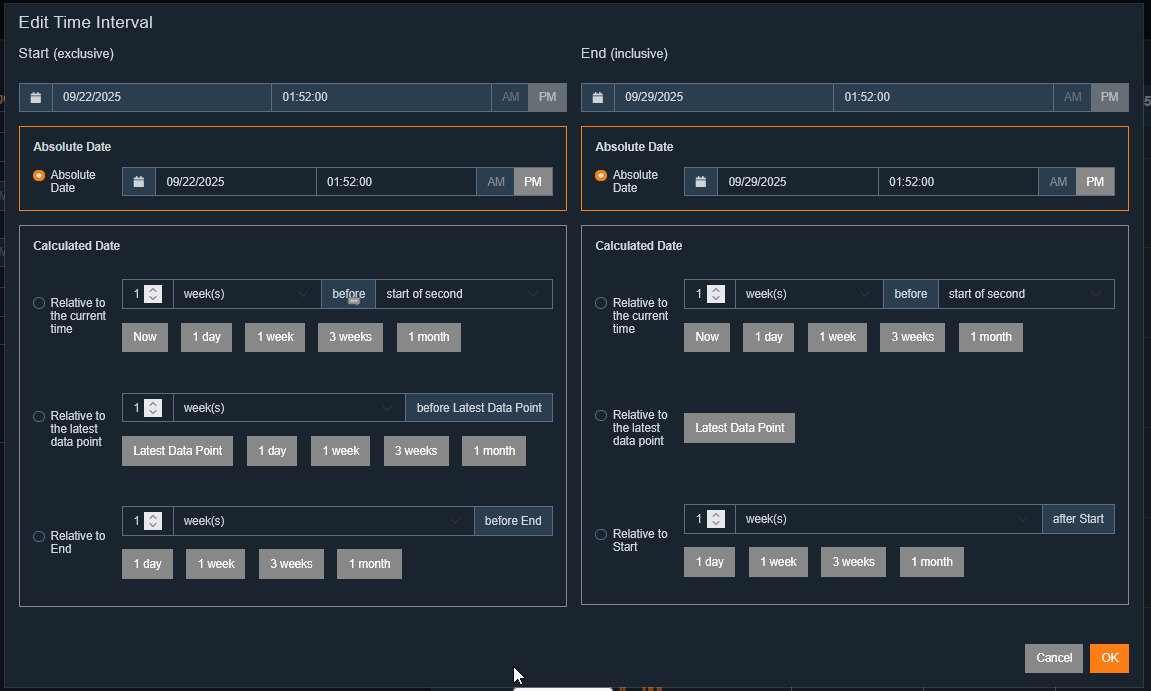

With the time setting #2, you can open a dialog to configure the analysis period. If an absolute period has been defined in the menu, it can be set either by directly entering the start and end time under #3 or via the input fields.

In the device section #3, select the devices to be evaluated. This can be done by entering the device ID or using the selection list by clicking #3. The list shows all devices available in the database.

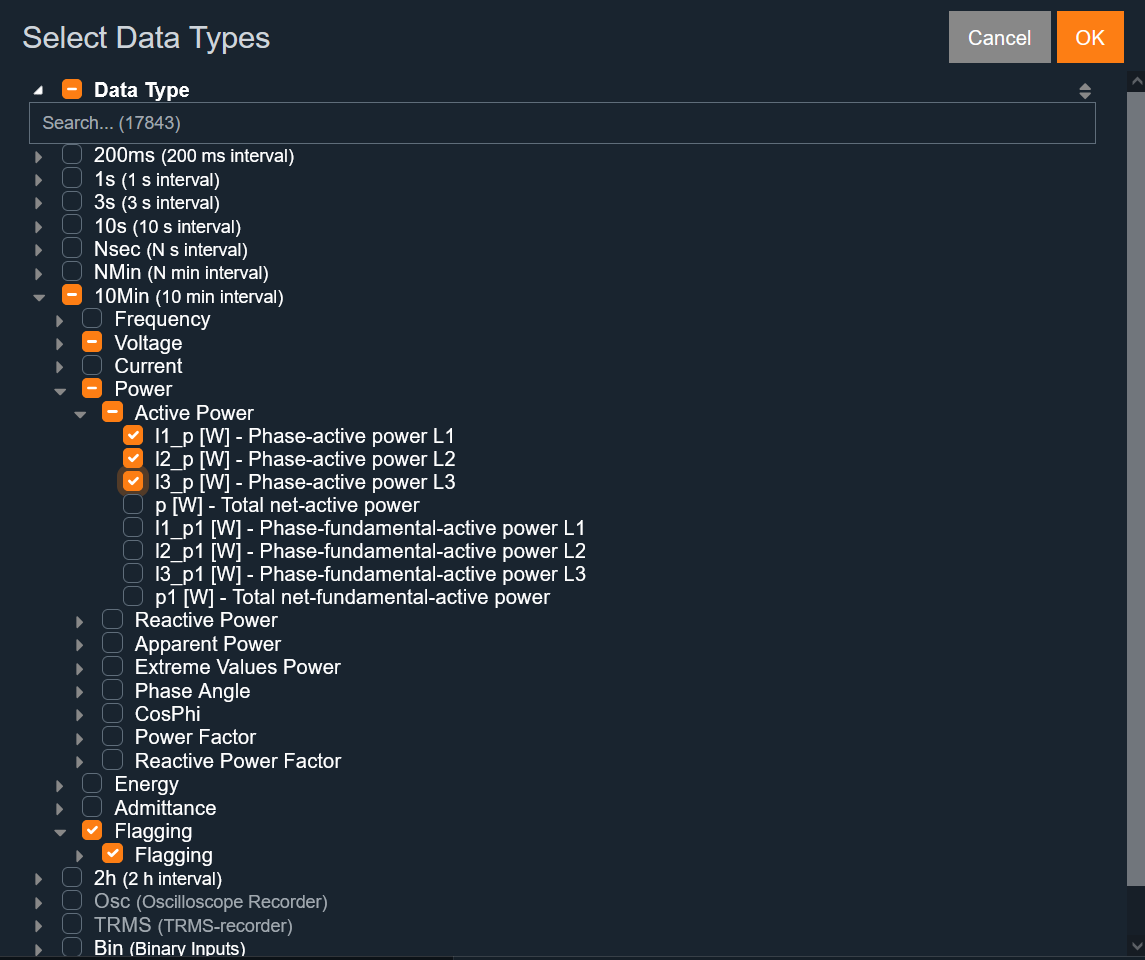

Using the measurement variable tree #5, select the measurement values recorded by the chosen devices during the selected period and transferred to the database. The selection can be reset with #4.

Depending on the analysis type, specific parameters must be considered for correct evaluation. These are explained in the following sections.

The level-time diagram displays selected measurement values as a line chart over a defined period. It is ideal for temporal analysis of measurement values and their changes.

A special feature is the integration of flagging according to IEC 61000-4-30 Class A. If enabled, flagging information is shown directly in the measurement variable tree #1 within the measurement series.

Measurement variable selection is dynamic: it is based on the intersection of the available data, restricted by parameters such as the period and the selected devices.
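One possible reading of this intersection rule can be illustrated with a minimal Python sketch. The dictionary layout and the function name `selectable_variables` are hypothetical and do not reflect the actual WebPQ data model; the sketch only assumes that a measurement variable is offered when every selected device has stored it in the selected period.

```python
def selectable_variables(available, devices):
    """Return the variables recorded by every selected device.

    `available` maps a device ID to the set of variables that device
    stored in the selected period (hypothetical structure).
    """
    if not devices:
        return set()
    per_device = [available.get(device, set()) for device in devices]
    return set.intersection(*per_device)


# Hypothetical example data: device B recorded fewer variables than device A
available = {
    "dev-A": {"U_L1", "U_L2", "f", "THD_U"},
    "dev-B": {"U_L1", "f"},
}
common = selectable_variables(available, ["dev-A", "dev-B"])
print(sorted(common))
```

Under this assumption, adding a device with less recorded data narrows the variable tree, while selecting a single device shows everything that device has stored.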

See also: Time settings for experts

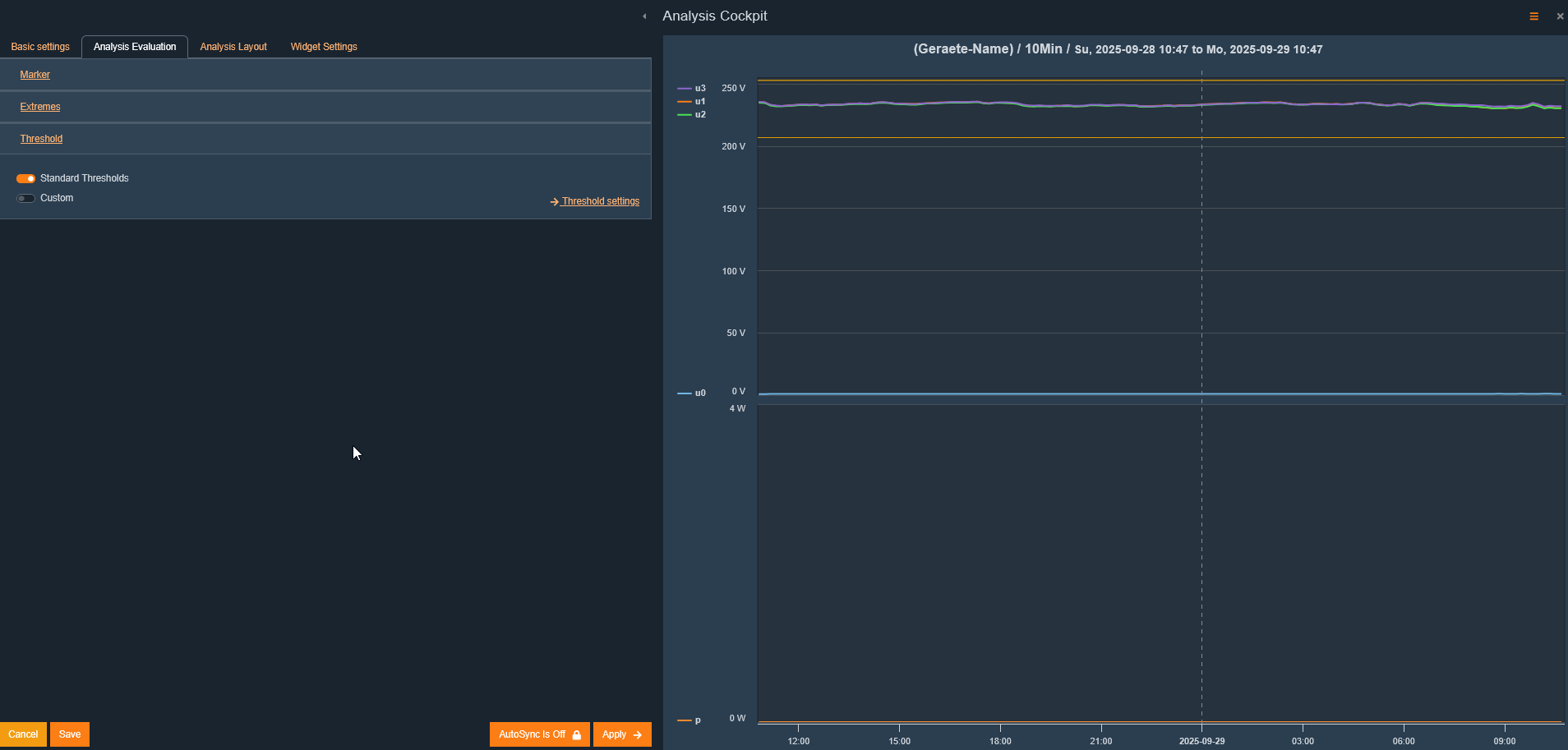

In the "Analysis Evaluation" tab, you can display the thresholds stored in the measuring device for the selected measurement variables. As of version v2.1, alternative threshold sets can also be selected and applied to the measurement data.

To show thresholds, select either the standard thresholds #1 or the extended threshold sets #2. Thresholds are then automatically displayed in the chart.

Extended threshold sets can be customised and managed in Reports > PQ Standard Templates. You can create, edit, or delete threshold sets there.

Flagging is visualised as a normal measurement value and displayed in the measurement series.

In the level-time diagram, up to two markers can be set for detailed examination:

Set marker:

A left-click on a data point adds a marker.

With two markers set, the difference between the two marked measurement values can be compared.

Remove marker:

Clicking a set marker again removes it.

Marker values are automatically displayed in analysis evaluation and can be used for further analyses.

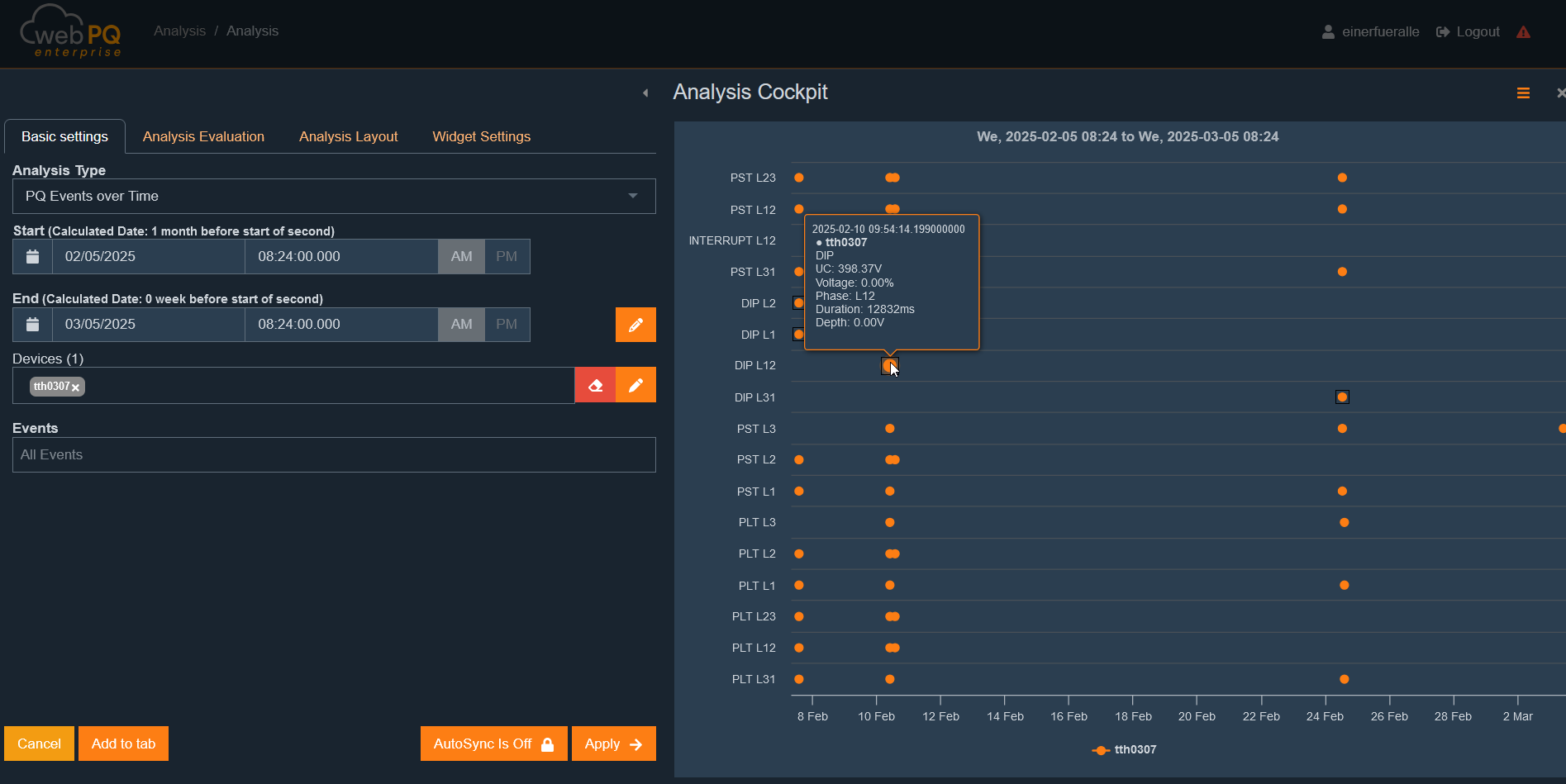

The PQ events over time diagram visualises recorded and selected power quality (PQ) events within the defined time range.

Retrieve detailed information:

Hovering the mouse over an event displays additional info, such as the height or depth of the event or other relevant measurement values.

Compare multiple PQ events:

The temporal view makes it easy to identify patterns and clusters of events.

Notable events can be further investigated via the Analysis Cockpit.

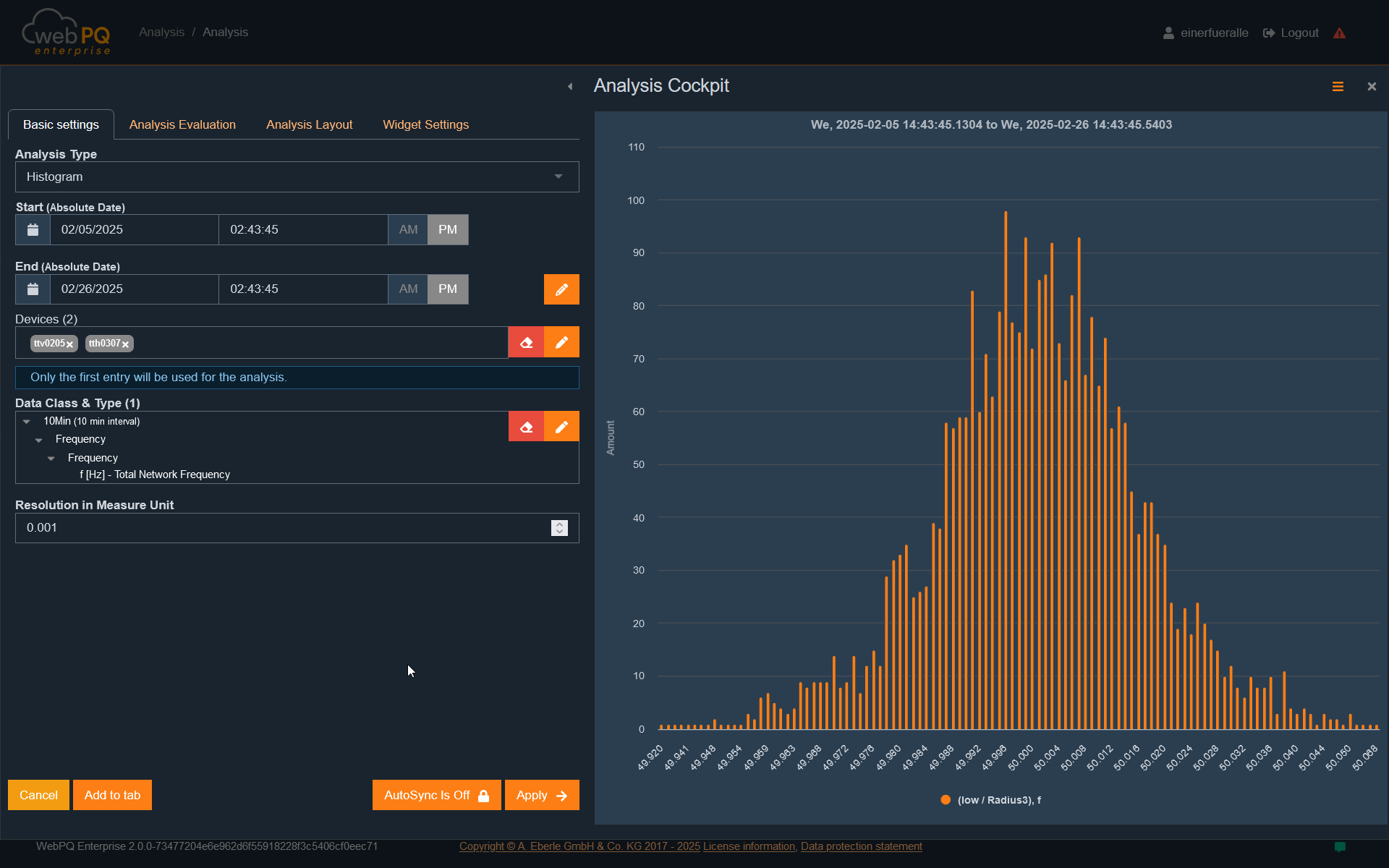

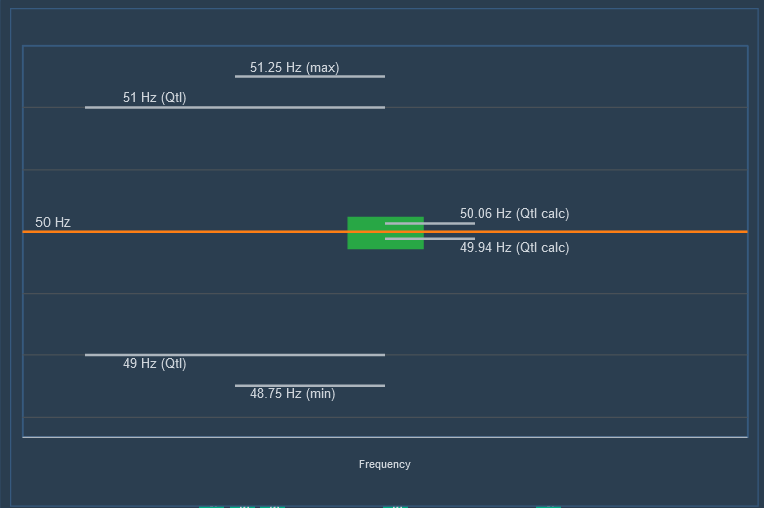

The histogram shows the statistical distribution of a selected measurement variable within a defined period.

Select measurement variable:

In this example, frequency #1 was chosen.

Calculate distribution:

Data was grouped into 10-mHz bins over the selected period #2 and displayed as a frequency distribution.

Application areas:

The histogram helps identify deviations from expected values.

Unexpected peaks or asymmetries may indicate anomalies or systematic errors.
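The grouping into 10-mHz bins described above can be sketched in Python as follows. This is only an illustration of the binning principle, not the implementation used by the software; the function name `frequency_histogram` and the sample values are assumptions. Values are converted to integer millihertz first so that floating-point rounding does not shift a value into the neighbouring bin.

```python
from collections import Counter


def frequency_histogram(values_hz, bin_width_mhz=10):
    """Group frequency values into bins of `bin_width_mhz` millihertz.

    Returns a dict that maps the lower bin edge in Hz to the number of
    values falling into that bin, sorted by bin edge.
    """
    counts = Counter()
    for value in values_hz:
        mhz = round(value * 1000)  # work in integer millihertz
        lower_edge_mhz = (mhz // bin_width_mhz) * bin_width_mhz
        counts[lower_edge_mhz / 1000] += 1
    return dict(sorted(counts.items()))


# Hypothetical frequency readings around 50 Hz
samples = [49.98, 49.994, 50.001, 50.004, 50.009, 50.012, 50.02]
hist = frequency_histogram(samples)
for edge, count in hist.items():
    print(f"{edge:.2f} Hz: {count}")
```

A distribution that is not centred on the nominal frequency, or that shows a second peak, would stand out immediately in such a table.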

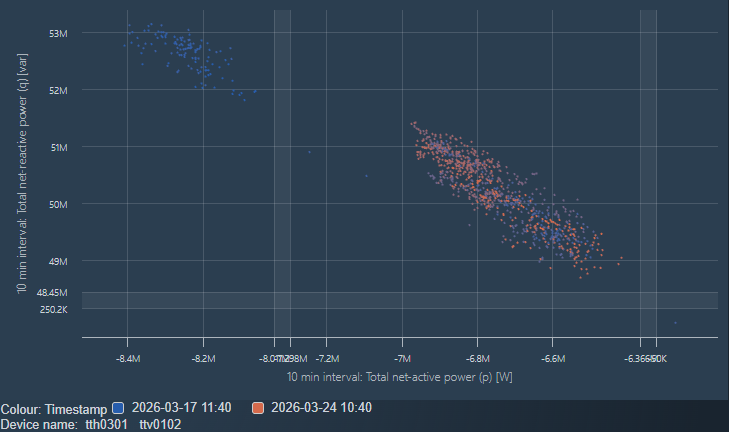

The scatter plot visualises the relationship between two selected axis values as a cloud of points. Each point represents one individual measurement record. This analysis type is especially useful for spotting correlations, clusters, outliers, and distributions quickly.

Unlike the level-time diagram, the scatter plot focuses on the relationship between two characteristics instead of their temporal progression. This makes it easier to compare measurement variables directly or structure data by device, tag category, or plot group.

Compare two measurement variables, for example active power against reactive power

Identify typical operating states through cluster formation

Find outliers or unusual operating states

Compare multiple measuring points or groups in one shared chart

X axis and Y axis: define which values are plotted against each other

Plot groups: allow multiple data sources or configurations to be combined in one view

Color axis: adds another visual distinction for points, for example by measurement variable, device, or group

Shape axis: provides an alternative visual grouping through different marker symbols

Automatic gap cutouts: compress large empty axis areas so relevant point clusters stay readable

The FFT Spectrum analysis is used to inspect the frequency spectrum of oscilloscopic measurement data. It is useful when periodic signal components, resonances, or dominant frequency bands need to be identified directly from time-domain recordings.

The selected device must provide oscilloscopic measurement data.

FFT-specific options become available only when suitable oscilloscopic data types are selected in the measurement-variable selection.

Historical FFT uses the selected analysis interval. Live FFT requires an active live-data stream.

Historical FFT is based on stored oscilloscopic data in the selected time range. It is suitable for analysing already recorded events or archived oscilloscopic measurements.

Select the device and the oscilloscopic measurement variables to evaluate.

Define the analysis interval in the Analysis Cockpit.

The resulting chart shows the frequency on the X-axis and the magnitude on the Y-axis.

Multiple selected data types are displayed together in the same spectrum view.

This analysis helps identify characteristic frequencies after a disturbance and compare the spectral content of different oscilloscopic channels.
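How a spectrum with frequency on the X-axis and magnitude on the Y-axis is derived from time-domain samples can be sketched as follows. The sketch uses a direct discrete Fourier transform in plain Python for readability; the windowing and scaling actually used by WebPQ are not documented here, so the amplitude scaling, the function name `magnitude_spectrum`, and the synthetic test signal are assumptions.

```python
import cmath
import math


def magnitude_spectrum(samples, sample_rate_hz):
    """Return (frequency_hz, amplitude) pairs for a real-valued signal.

    Direct DFT (O(n^2)) for clarity; an FFT yields the same values faster.
    Amplitudes are scaled so that a sine of amplitude A appears as A.
    """
    n = len(samples)
    spectrum = []
    for k in range(n // 2 + 1):
        acc = sum(
            x * cmath.exp(-2j * math.pi * k * i / n)
            for i, x in enumerate(samples)
        )
        is_edge_bin = k == 0 or (n % 2 == 0 and k == n // 2)
        scale = 1 / n if is_edge_bin else 2 / n
        spectrum.append((k * sample_rate_hz / n, abs(acc) * scale))
    return spectrum


# Synthetic signal: 50 Hz fundamental plus a 250 Hz component (5th harmonic)
rate = 1000
signal = [
    math.sin(2 * math.pi * 50 * t / rate)
    + 0.2 * math.sin(2 * math.pi * 250 * t / rate)
    for t in range(200)
]
spectrum = magnitude_spectrum(signal, rate)
dominant = max(spectrum, key=lambda pair: pair[1])
print(dominant)
```

With 200 samples at 1 kHz the frequency resolution is 5 Hz, so both components fall exactly on a spectral line. A longer time window gives a finer resolution, which is one reason the window width matters for the result.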

In addition to historical FFT, WebPQ also provides Live FFT as a dedicated live analysis type.

Live FFT subscribes to live oscilloscopic data of one selected device.

The spectrum updates continuously as new live samples arrive.

The chart can be zoomed horizontally to focus on relevant frequency ranges.

Device naming follows the currently configured device-name and hierarchy display settings.

Live FFT is useful for observing changing spectral content while a signal is still being streamed.

Depending on the selected oscilloscopic data, additional FFT options are available in the parameter area:

Time Window Mode controls how the FFT window is derived from the signal.

A fixed time window width can be configured when the fixed-window mode is selected.

If no suitable oscilloscopic data is selected, FFT-specific controls remain hidden.

Detect dominant harmonic or interharmonic components in oscilloscopic signals.

Compare live and recorded spectral behaviour.

Investigate resonances, converter-related frequencies, and recurring oscillations.

Narrow down disturbance sources before switching to a more detailed time-domain analysis.

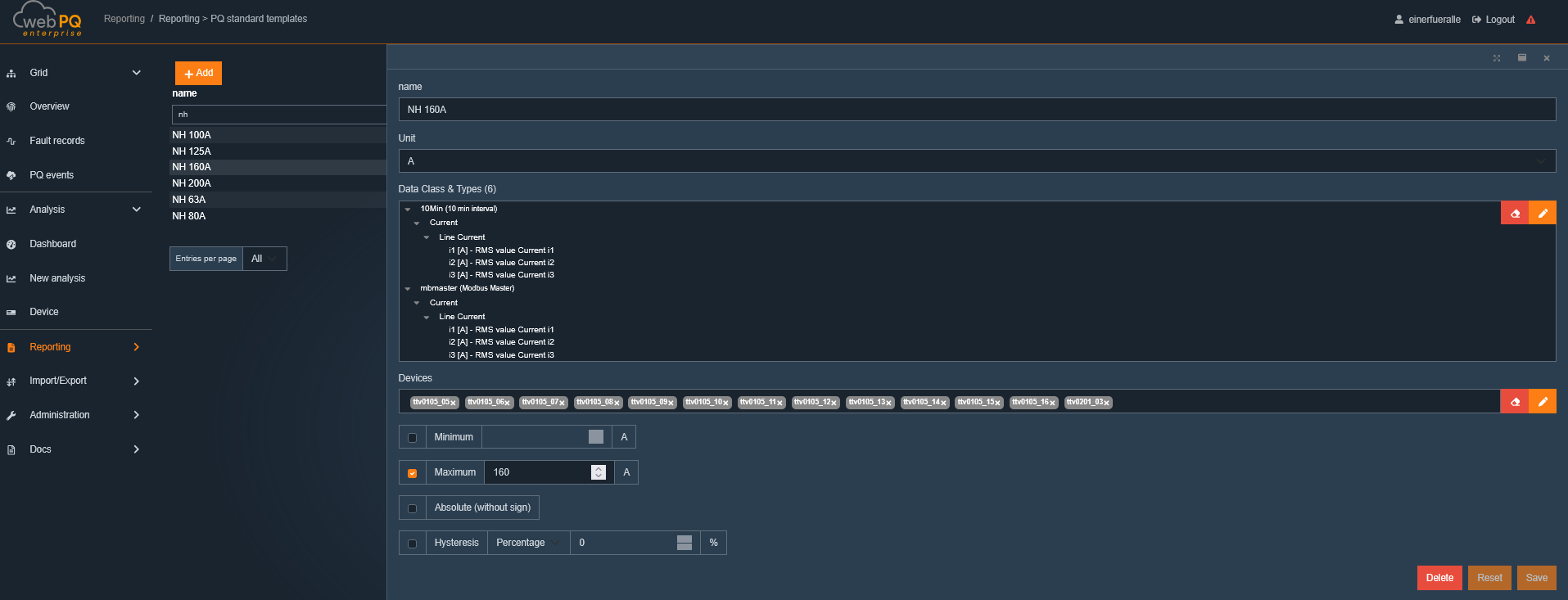

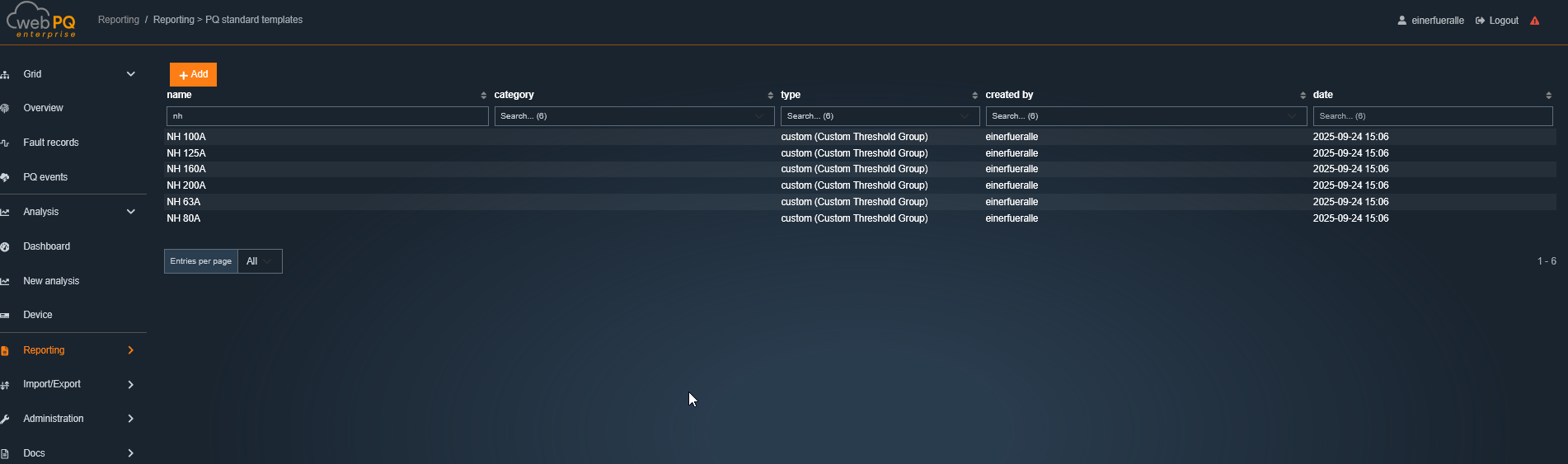

The Custom Threshold Utilization Report compares the maximum measured values of the selected interval with the configured maximum values of custom threshold settings. It is intended for quickly identifying devices that already use most of their individual threshold reserve.

At least one device and a valid analysis interval must be selected.

At least one custom threshold setting must also be selected.

A custom threshold only produces usable results if it is assigned to the device and matching data class, and if a maximum threshold value is configured.

The analysis is shown as a table. Depending on the selected view, it includes in particular:

the title of the evaluated measurement value,

the assigned threshold name,

the unit,

the measured maximum value in the selected interval,

the configured threshold maximum,

the utilization in percent,

and the remaining reserve.

The default sorting is by the highest utilization. With Advanced view, additional columns such as the unit can be shown.

Clicking the threshold name opens the corresponding custom threshold settings.

Clicking the measured maximum value opens the Analysis Cockpit with the corresponding time-series analysis and custom thresholds already enabled.

Sorting and filtering are available as long as the analysis is not rendered as a printed report.

Utilization is shown as a colored progress bar:

below 60%: green

60% to 74%: yellow

75% to 84%: orange

85% or more: red

This makes it easy to identify critical devices at a glance.
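The relationship between measured maximum, threshold maximum, utilization, and colour can be sketched as follows. The colour bands are taken from the list above; how the software treats fractional values between the listed bands (for example 74.5%) and whether the reserve is shown in absolute units are assumptions of this sketch, as is the function name `utilization`.

```python
def utilization(measured_max, threshold_max):
    """Return (utilization in percent, remaining reserve, colour band).

    The reserve is returned in the unit of the measurement value
    (assumption; the report may present it differently).
    """
    if threshold_max is None or threshold_max <= 0:
        raise ValueError("no usable maximum threshold configured")
    percent = measured_max * 100 / threshold_max
    reserve = threshold_max - measured_max
    if percent < 60:
        colour = "green"
    elif percent < 75:
        colour = "yellow"
    elif percent < 85:
        colour = "orange"
    else:
        colour = "red"
    return percent, reserve, colour


# Hypothetical example: measured maximum of 230 against a threshold maximum of 253
percent, reserve, colour = utilization(230, 253)
print(f"{percent:.1f} % used, reserve {reserve}, {colour}")
```

The `ValueError` corresponds to the case in which no maximum threshold is configured and the table would show a warning indicator instead of a value.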

If a value cannot be calculated, the table shows a warning indicator with the corresponding explanation. Typical reasons are:

No valid measurement data is available in the selected interval.

No maximum threshold is configured in the selected custom threshold.

No matching custom threshold is assigned to the device and data class.

The available threshold data is not sufficient to calculate the result.

Identify measurement points with little remaining reserve to custom thresholds.

Compare several devices within one common time interval.

Jump directly from the overview into the matching time-series analysis or the threshold settings.

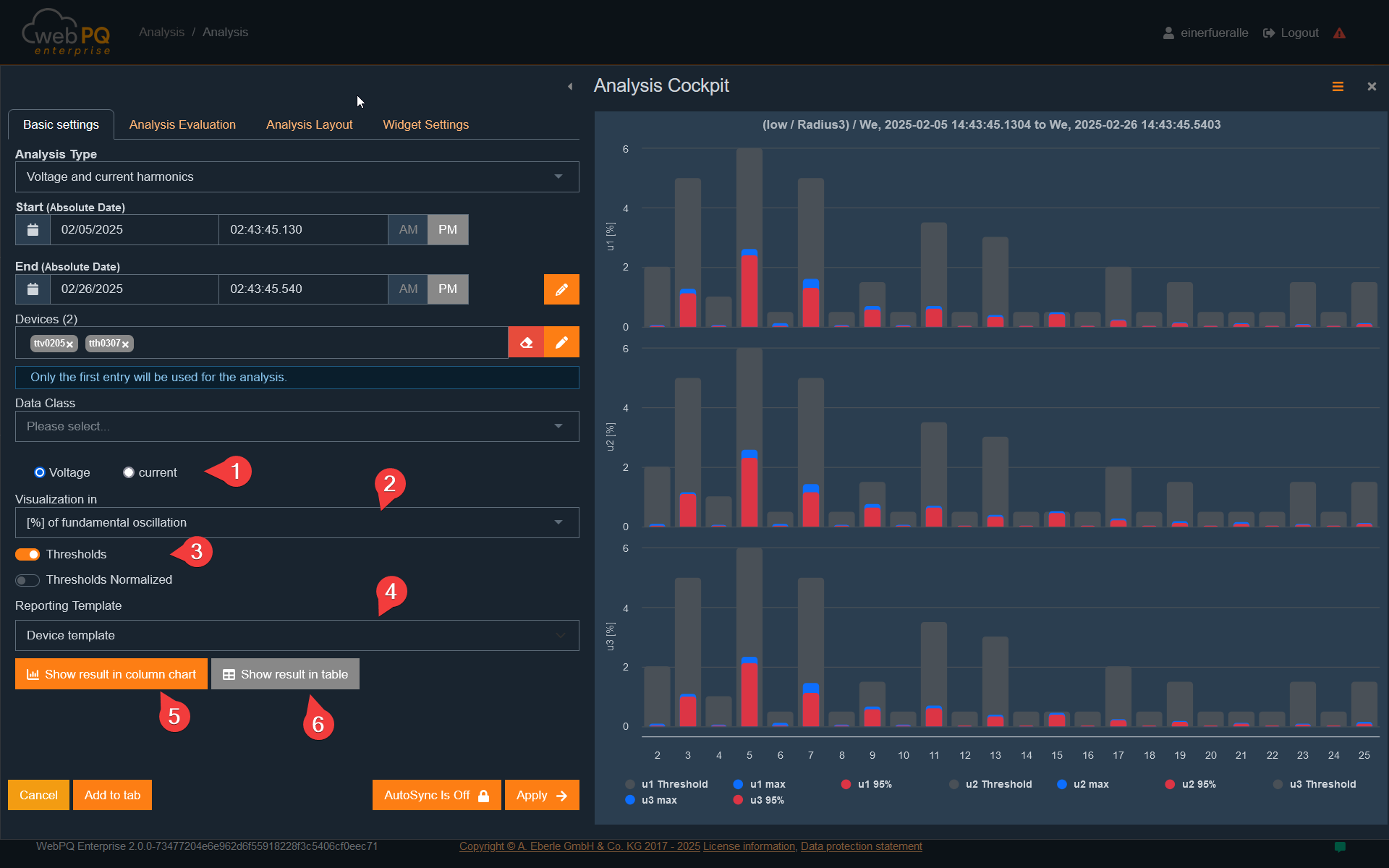

Voltage and current harmonics are displayed as bar charts to provide a quick overview of the entire frequency spectrum to be monitored.

Colour-coded bars for quick analysis:

The red area of a bar shows the percentage quantile set by the standard.

For low voltage, this is typically 95% of measurement values per week for harmonics.

The blue area represents the maximum measured value in the selected period.
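The difference between the two bar areas can be illustrated with a short Python sketch that computes a 95% quantile and the maximum for one week of values. The nearest-rank method used here is one common quantile definition and is an assumption; the exact method applied by the measuring device or the software is not specified in this manual. The example data is synthetic and assumes 10-minute aggregation, which gives 1008 values per week.

```python
import math


def quantile_and_max(values, quantile=0.95):
    """Return (nearest-rank quantile, maximum) of the given values."""
    if not values:
        raise ValueError("no measurement values")
    ordered = sorted(values)
    rank = max(math.ceil(quantile * len(ordered)), 1)  # 1-based rank
    return ordered[rank - 1], ordered[-1]


# Synthetic week: 1000 values at 2.0 % and 8 short peaks at 6.5 %
week = [2.0] * 1000 + [6.5] * 8
q95, peak = quantile_and_max(week)
print(q95, peak)
```

In this example the few peaks determine the maximum but do not affect the 95% quantile, which is why both areas of a bar can differ considerably.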

Interactive detail view:

Clicking a specific harmonic switches from the bar overview to a detailed temporal view.

Switch between voltage and current harmonics:

Via #1, switch between voltage and current view.

The display can be adjusted depending on the measurement variable:

Voltage:

Relative to the fundamental wave [%] – Used in normative contexts.

As absolute value [V] – Used for troubleshooting.

Current:

Relative to nominal current [%] – Used in IEEE standards.

Relative to the fundamental wave [%] – Used in IEC standards.

As absolute value [A] – Used for troubleshooting and filter technologies.
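The display options listed above differ only in the reference value. A minimal sketch, assuming that the harmonic amplitude, the fundamental, and the nominal value are available in the same unit (the function name and the example values are hypothetical):

```python
def harmonic_representations(amplitude, fundamental, nominal=None):
    """Express one harmonic amplitude in the available display variants."""
    result = {
        "absolute": amplitude,
        "percent_of_fundamental": amplitude * 100 / fundamental,
    }
    if nominal is not None:
        # Only meaningful for currents, where a nominal current is defined
        result["percent_of_nominal"] = amplitude * 100 / nominal
    return result


# Hypothetical 5th current harmonic: 4 A at 80 A fundamental, 100 A nominal current
views = harmonic_representations(4, 80, nominal=100)
print(views)
```

The same harmonic appears as 5% relative to the fundamental but as 4% relative to the nominal current, so the selected representation must match the standard against which the values are assessed.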

Depending on the selected visualisation method, additional settings are shown or hidden to optimise analysis.

This analysis is useful for:

Detecting and evaluating harmonics that may cause disturbances or power quality issues.

Comparing harmonics over different periods to identify long-term trends.

Evaluating compliance with standards, e.g., EN 50160 or IEC 61000-2-4.

Measuring devices from A. Eberle GmbH provide current limit sets directly to the database. These limit sets form the basis for analyses and reports.

Since harmonic limits are percentage values based on recorded aggregation levels, alternative limit sets can be applied to existing data in the UI.

Select limit set:

Via #4, select a specific limit set for analysis.

In "Reports" > "PQ Standard Templates", limit sets can be customised and managed.

Display limits in visualisation:

When a limit set is selected under #3, it appears in the graphical view.

Users can see at a glance whether and to what extent values exceed limits.

Switch between representations:

With #5 and #6, switch between:

Tabular view (with or without limits)

Bar chart view.

Evaluate power quality: Ensure network parameters remain within standard limits (e.g., EN 50160, IEC 61000-2-4).

Compare limits: Apply different limit sets to the same data to analyse various criteria.

Detect limit violations: Identify critical deviations indicating disturbances or network problems.

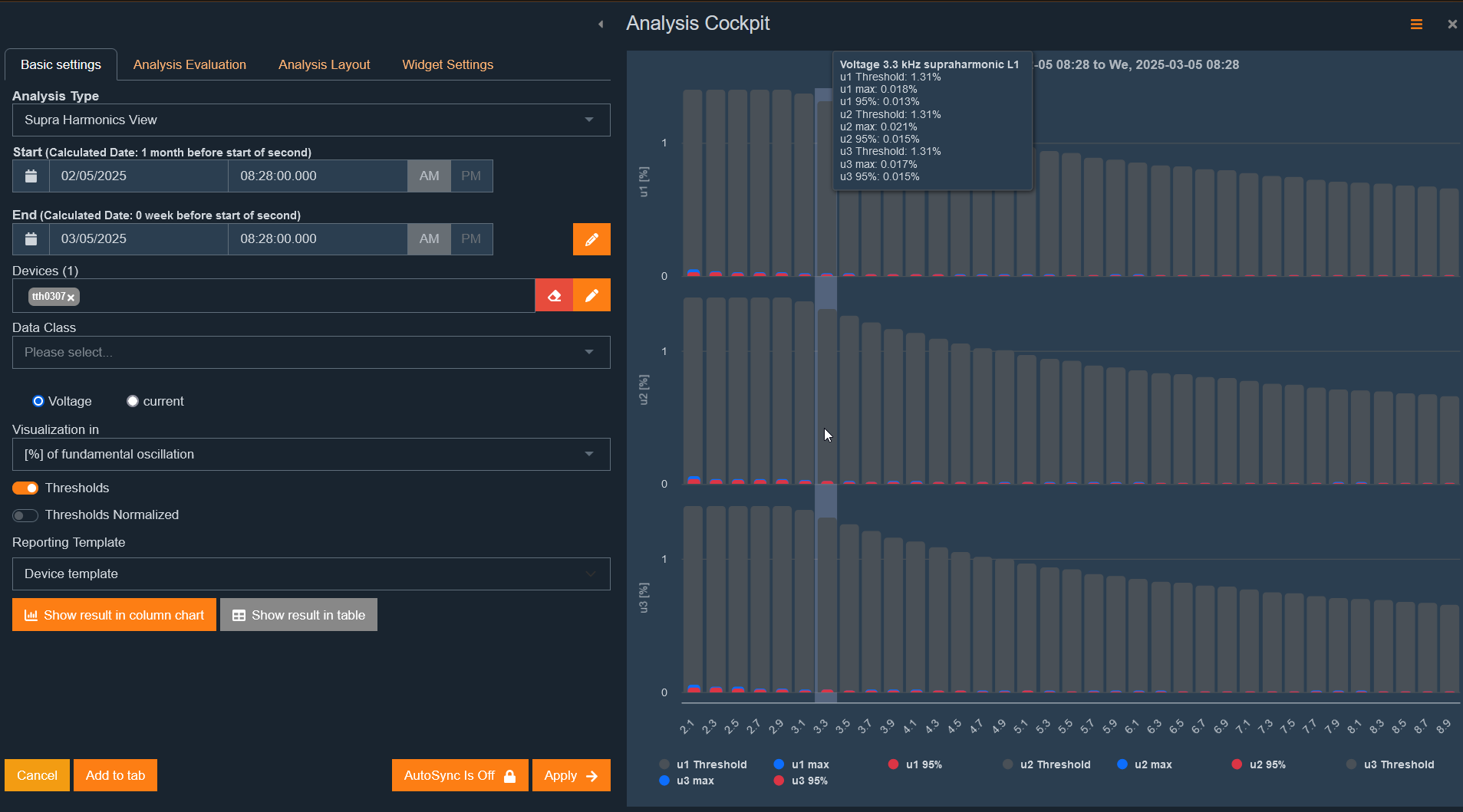

The "Supraharmonics" analysis shows – depending on device data – the supraharmonics in the context of the selected standard template and time range.

Determination follows IEC 61000-4-7 in 200-Hz frequency bands.

In the software, centre frequencies are always shown.

Example of frequency band division:

Centre frequency: 2.3 kHz

Includes all 5-Hz spectral lines from 2205 Hz to 2400 Hz
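The band division in this example can be reproduced with a short Python sketch. The band edges follow the example above; combining the 5-Hz lines as the root of the sum of squares is assumed here as the grouping rule, and the function names and the test data are hypothetical.

```python
import math


def band_edges(centre_hz, bandwidth_hz=200, line_spacing_hz=5):
    """Return the first and last 5-Hz line of the band around `centre_hz`."""
    low = centre_hz - bandwidth_hz // 2 + line_spacing_hz
    high = centre_hz + bandwidth_hz // 2
    return low, high


def band_value(lines, centre_hz, bandwidth_hz=200, line_spacing_hz=5):
    """Combine the spectral lines of one band (root of the sum of squares).

    `lines` maps a line frequency in Hz to its RMS value; missing lines
    count as zero.
    """
    low, high = band_edges(centre_hz, bandwidth_hz, line_spacing_hz)
    total = 0.0
    for frequency in range(low, high + 1, line_spacing_hz):
        total += lines.get(frequency, 0.0) ** 2
    return math.sqrt(total)


print(band_edges(2300))

# Hypothetical spectrum: every 5-Hz line from 2000 Hz to 2600 Hz carries 1.0
lines = {f: 1.0 for f in range(2000, 2601, 5)}
print(band_value(lines, 2300))
```

Each band contains 40 spectral lines, and adjacent bands, for example those centred at 2.1 kHz and 2.3 kHz, adjoin without overlap.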

Supraharmonics are visualised as bar charts.

The display can be adjusted according to standard requirements and analysis goals.

Supraharmonic analysis has the same functions as harmonic analysis:

Switch between visualisation types

Compare with limits

Tabular or bar chart view

Zoom and marker function for detailed analysis

Detect supraharmonics: Investigate higher frequency disturbances in the network.

Compare with standard limits: Check if measured supraharmonics are within permissible values.

Identify disturbance sources: Analyse frequency ranges with excessive supraharmonic voltages or currents.

Optimise filter solutions: Evaluate filter effectiveness to reduce disturbances.

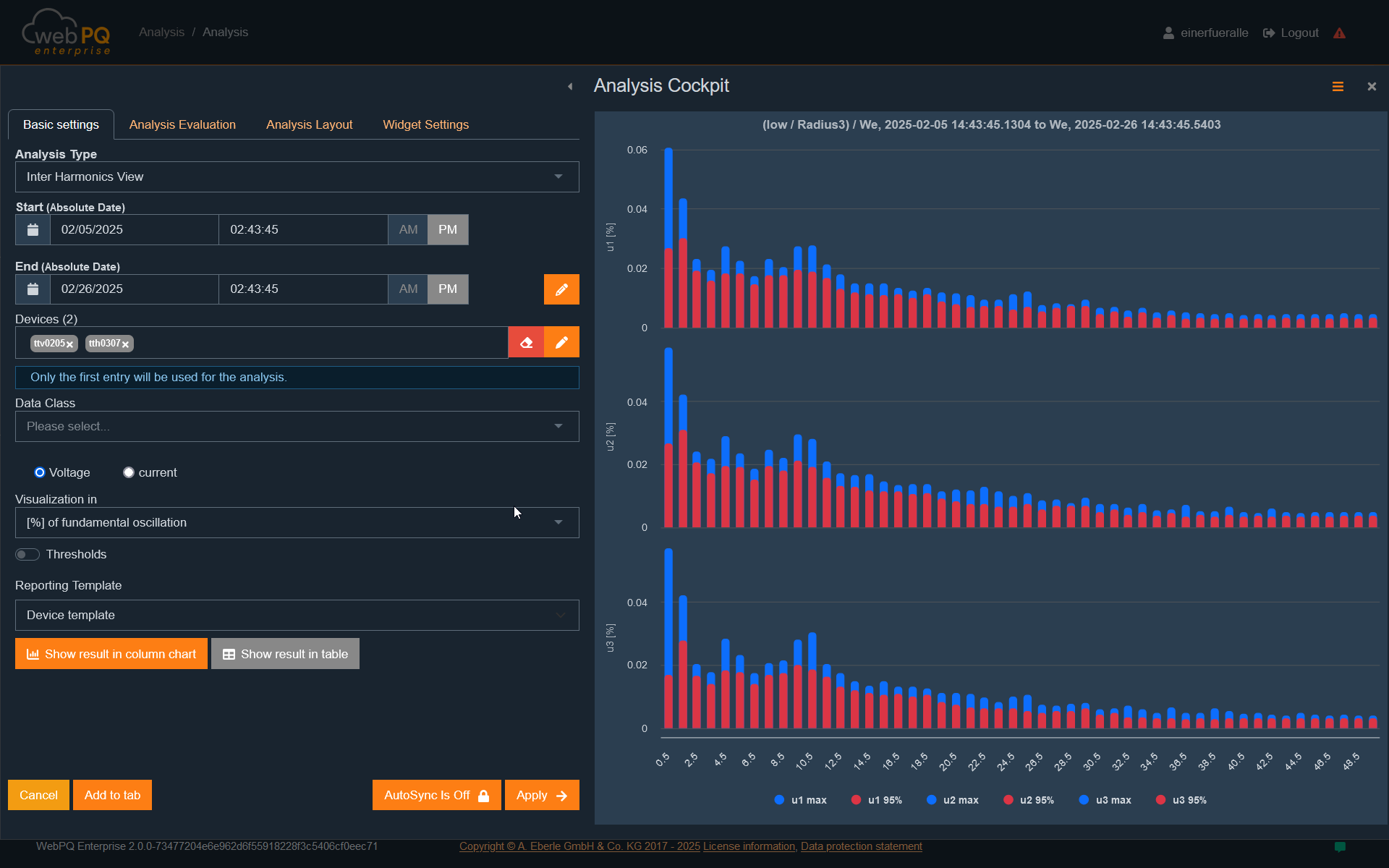

Interharmonic analysis investigates voltage and current components not in an integer ratio to the fundamental frequency.

Interharmonics are visualised as bar charts.

Various display types can be selected:

Relative to the fundamental wave [%]

As absolute value [V] or [A]

Compared to defined limits

This analysis is relevant for evaluating harmonic distortions caused by frequency converters, electronic loads, or nonlinear consumers.

Functionality matches harmonic analysis and offers:

Comparison with limits

Zoom and marker function for detailed analysis

Switch between visualisation types in the drill-in process

Detect nonlinear network loads

Evaluate network quality according to IEC 61000-4-7

Identify disturbances from frequency converters and inverters

Evaluate network resonances and unexpected oscillations

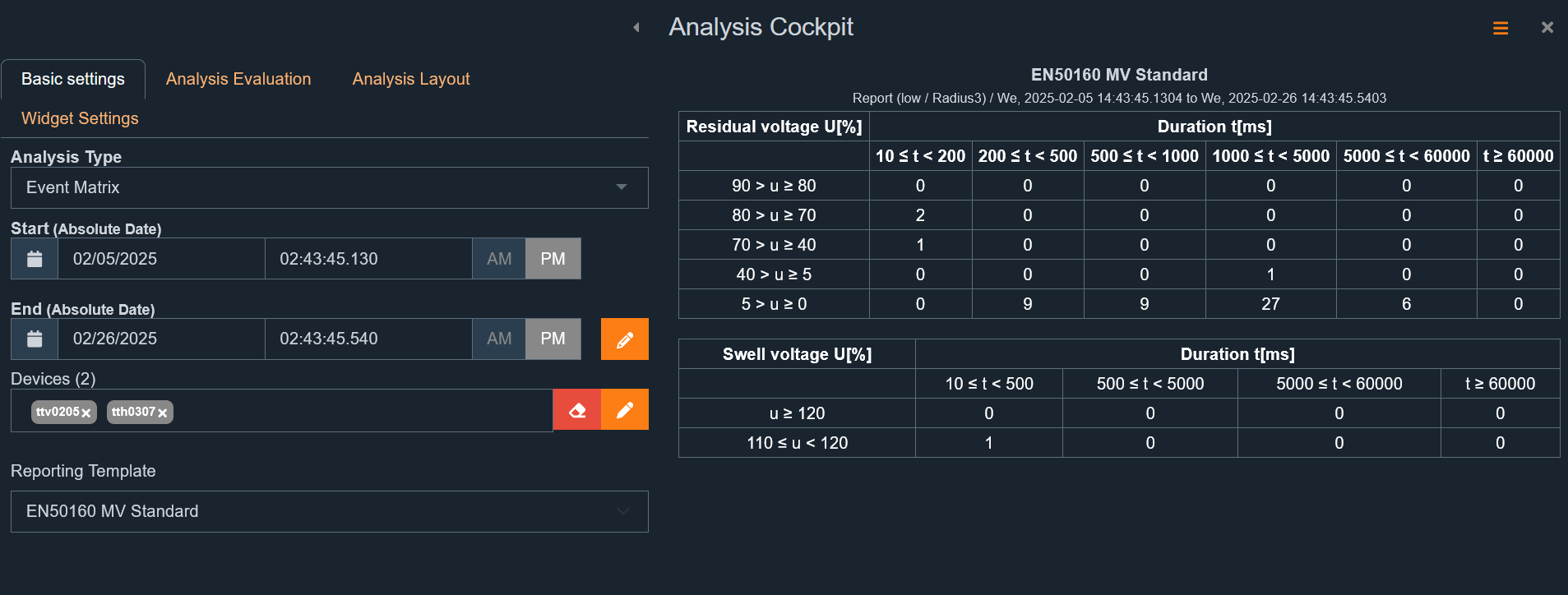

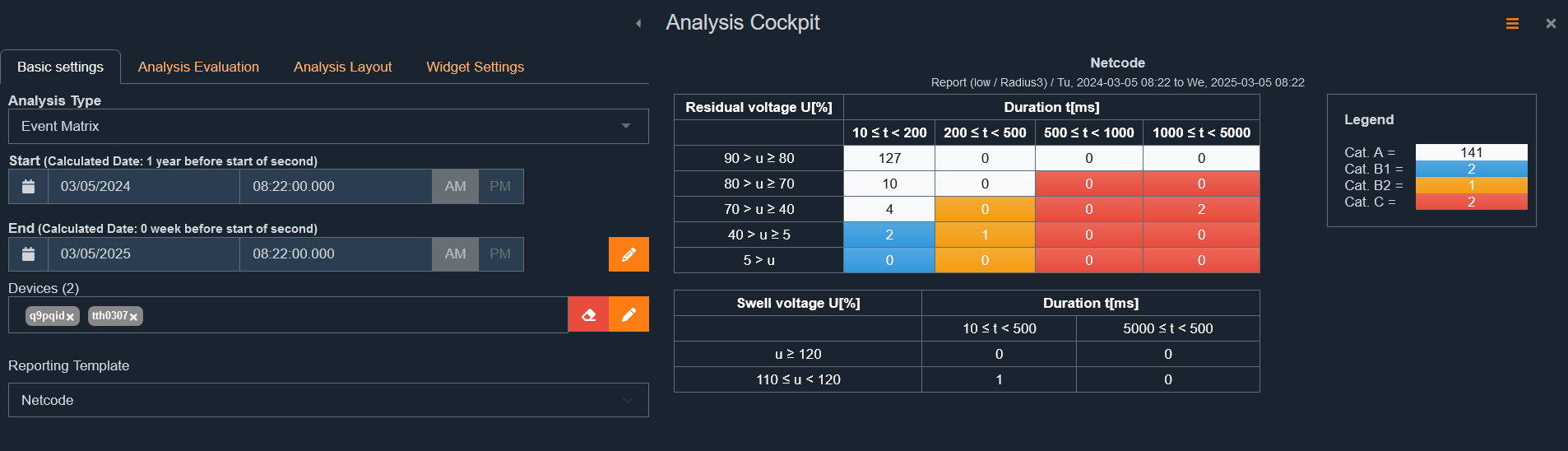

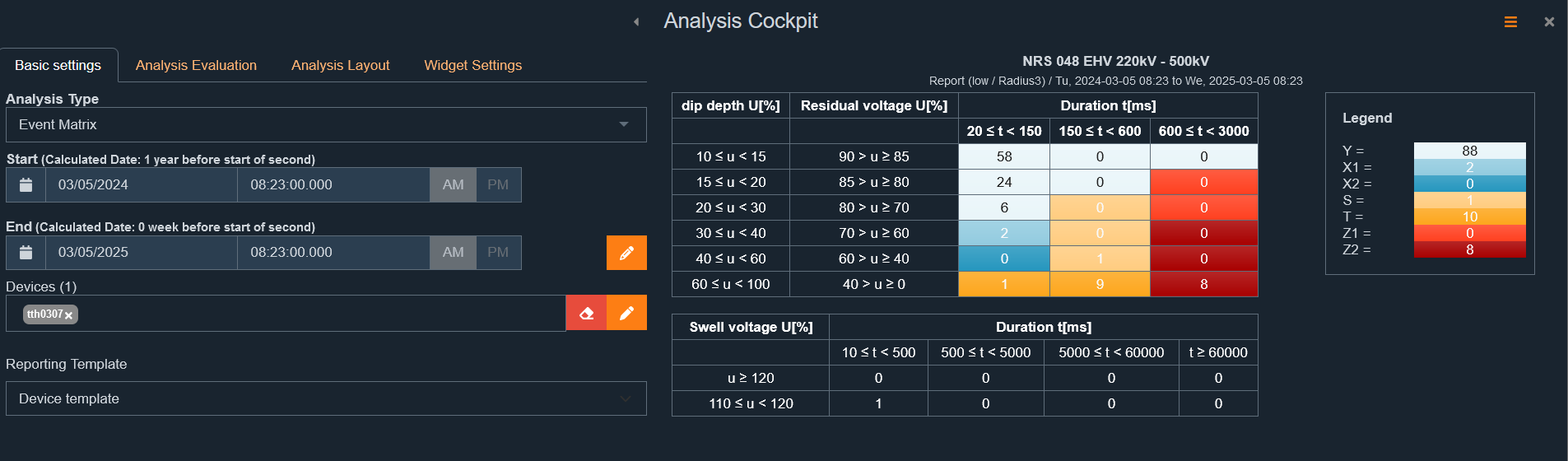

The normative report summarises all values required for evaluation according to the set standard templates in a clear analysis.

Results are available in two formats:

Bar chart – normalised to the respective measurement variable

Tabular report with detailed values

If no dedicated standard template is assigned to the selected device, the template provided by the device is used automatically.

The title is generated from the stored standard template of the device.

Depending on the template, specific measurement variables are included or excluded.

Examples:

A current EN 50160 template contains individual harmonics up to H25 and no supraharmonics.

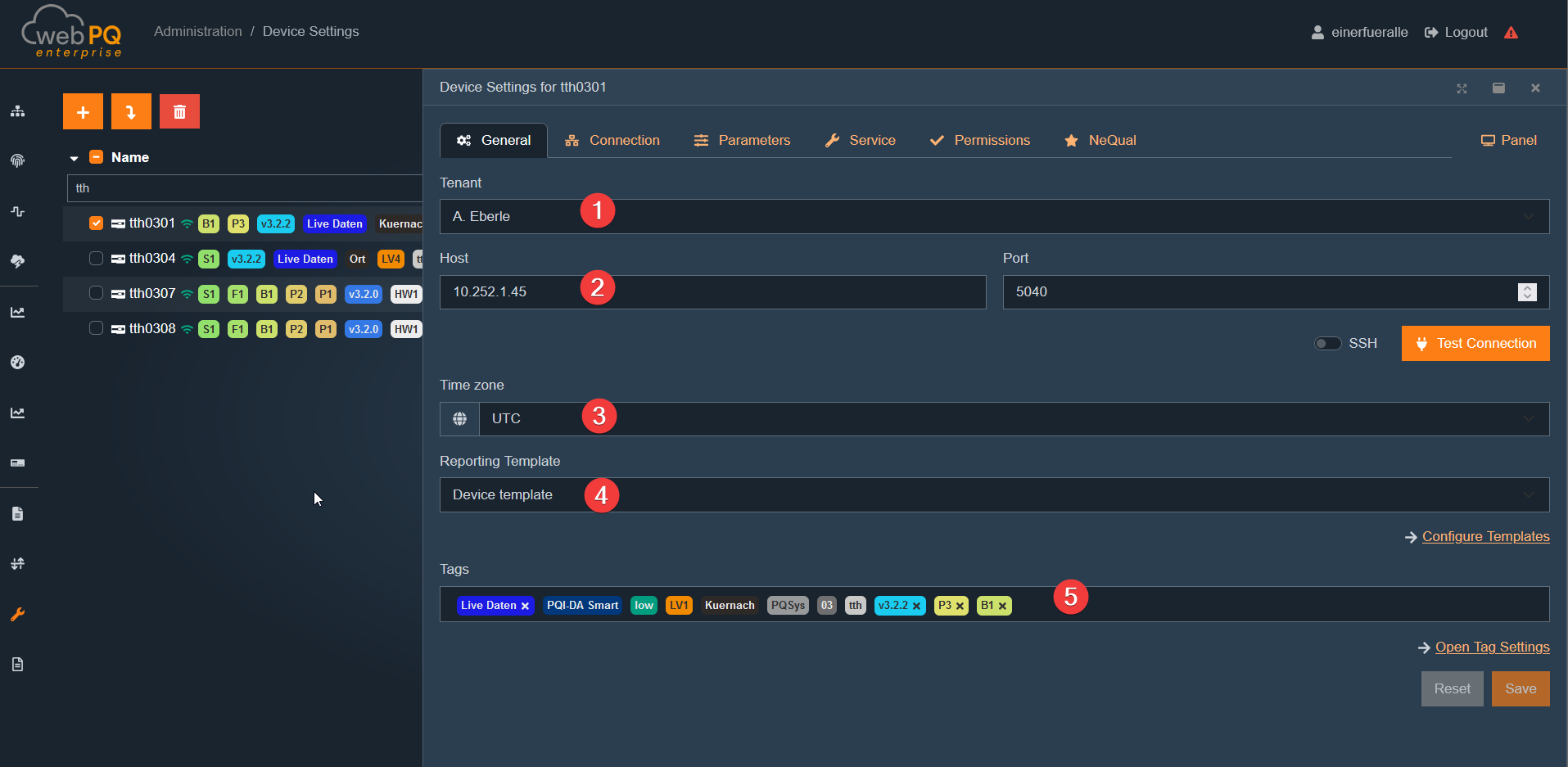

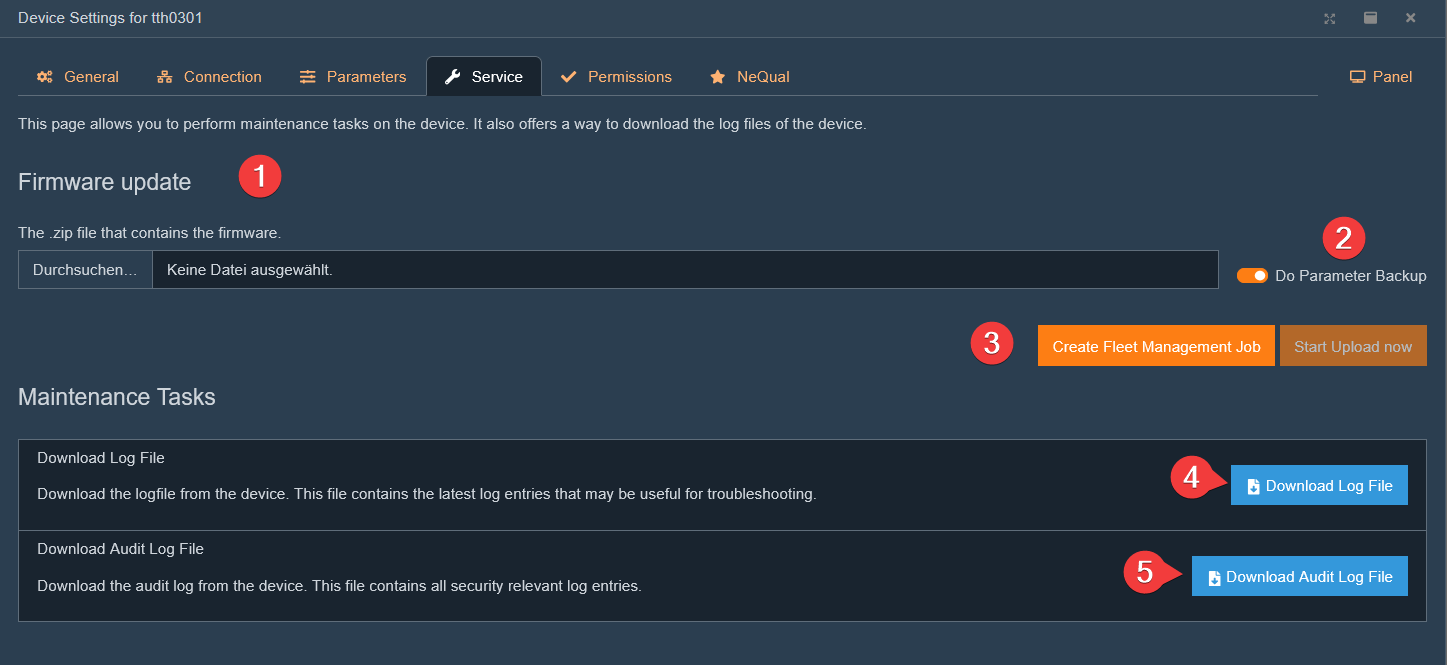

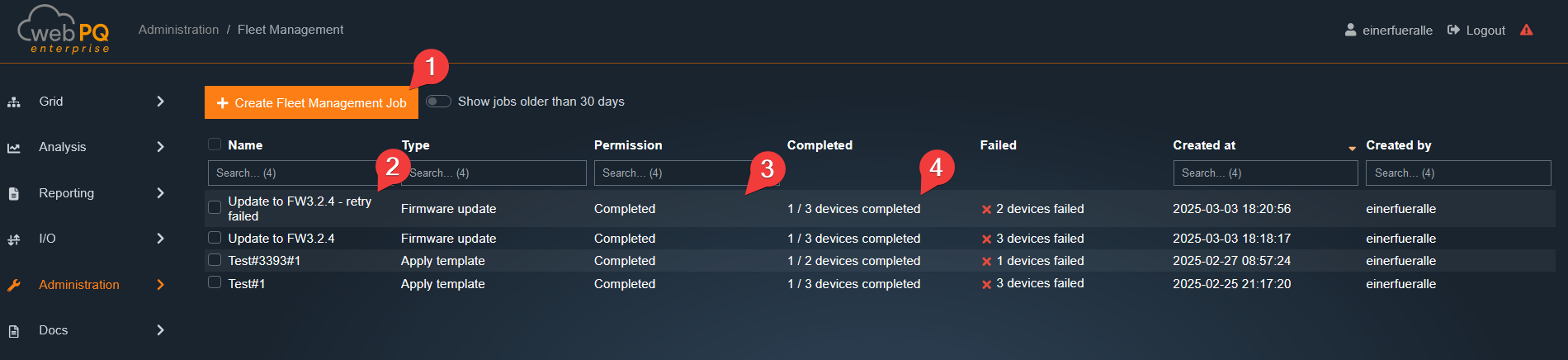

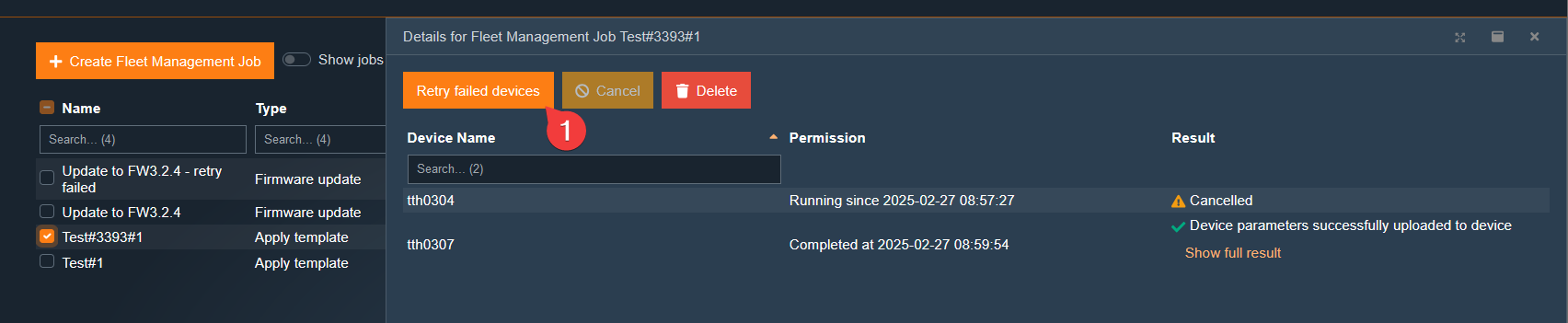

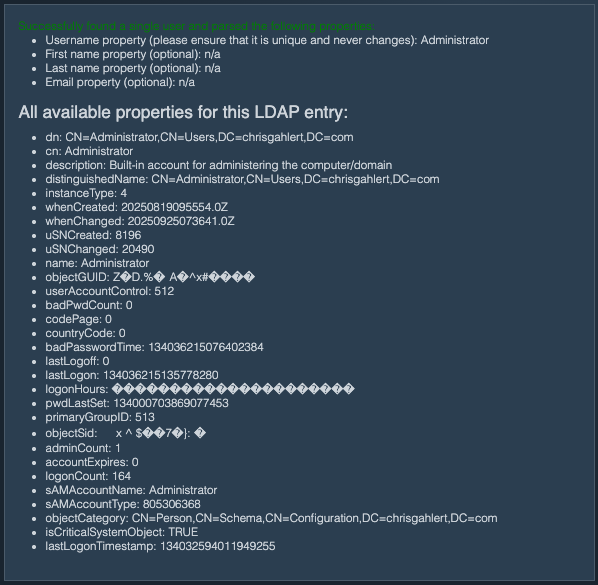

The IEC 61000-2-2 standard for low voltage considers supraharmonics.